I get an error when I run my code.

Here is my code:

import numpy as np
import matplotlib.pyplot as plt
import torch
import torch.nn as nn
import torch.optim as optim
from torch.autograd import Variable

x_train = np.array([[3.3],[4.4],[5.5],[6.71],[6.93],[4.168],[9.779],[6.182],[7.59],[2.167],[7.042],[10.791],[5.313],[7.997],[3.1]], dtype=np.float32)
y_train = np.array([[1.23],[3.24],[2.3],[2.14],[2.93],[3.168],[1.779],[2.182],[2.59],[3.167],[1.042],[3.791],[3.313],[2.997],[1.1]], dtype=np.float32)

x_train = torch.from_numpy(x_train)
y_train = torch.from_numpy(y_train)

class LinearRegression(nn.Module):
    def __init__(self):
        super(LinearRegression,self).__init__()
        self.linear = nn.Linear(1,1)

    def forward(self,x):
        out = self.linear(x)
        return out

if torch.cuda.is_available():
    model = LinearRegression().cuda()
else:
    model = LinearRegression()

criterion = nn.MSELoss()
optimizer = optim.SGD(model.parameters(),lr = 1e-3)

num_epoch = 100
for epcoh in range(num_epoch):
    if torch.cuda.is_available():
        inputs = Variable(x_train).cuda()
        outputs = Variable(y_train).cuda()
    else:
        inputs = Variable(x_train)
        outputs = Variable(y_train)

    out = model(inputs)
    target = y_train
    loss = criterion(out,target)

    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

    if (epcoh+1)%20 == 0:
        print('Epoch[{}/{}],loss:{:.6f}'
              .format((epcoh+1,num_epoch,loss.data[0])))

model.eval()
predict = model(Variable(x_train))
predict = predict.data.numpy()
plt.plot(x_train.numpy(),y_train(),'ro',label = 'Original data')
plt.plot(x_train.numpy(),predict,label = 'Fitting Line')
plt.show()

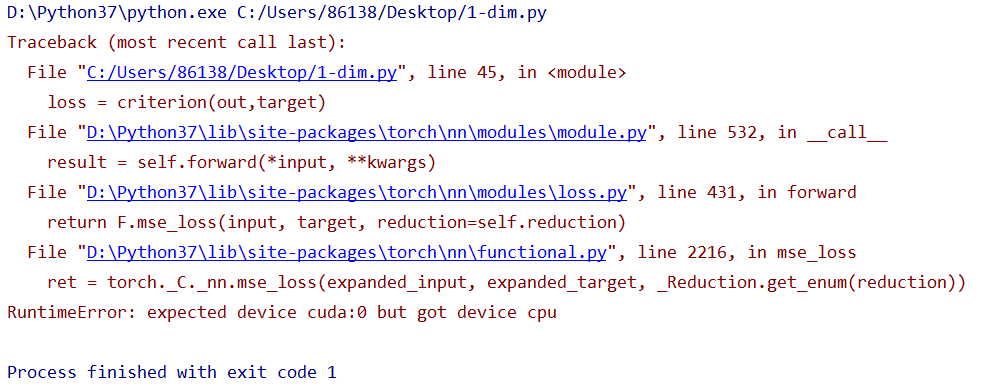

Error screenshot:

Can anyone tell me what is causing this error? Is it a problem with my CPU? Here is the device information:

torch.cuda.current_device(): 0

torch.cuda.device(0):

torch.cuda.device_count(): 1

torch.cuda.get_device_name(0): GeForce MX250

torch.cuda.is_available(): True
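
The text of the error is not reproduced above, so what follows is only a guess at the cause. The device information shows that CUDA is available, so the model and `inputs` are moved to the GPU while `target = y_train` stays on the CPU. Passing both to `criterion` would then fail with a device mismatch, which has nothing to do with the CPU model. Below is a minimal sketch of one way to keep everything on a single device. It assumes PyTorch 0.4 or newer, where `Variable` is no longer needed and `loss.item()` replaces `loss.data[0]`. The names `device`, `x_np` and `y_np` are introduced here for illustration, and `nn.Linear(1, 1)` stands in for the `LinearRegression` class.

```python
import numpy as np
import torch
import torch.nn as nn
import torch.optim as optim

# Assumption: pick one device up front and move the model and every tensor to it.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Same data as in the post; x_np / y_np are illustrative names.
x_np = np.array([[3.3], [4.4], [5.5], [6.71], [6.93], [4.168], [9.779],
                 [6.182], [7.59], [2.167], [7.042], [10.791], [5.313],
                 [7.997], [3.1]], dtype=np.float32)
y_np = np.array([[1.23], [3.24], [2.3], [2.14], [2.93], [3.168], [1.779],
                 [2.182], [2.59], [3.167], [1.042], [3.791], [3.313],
                 [2.997], [1.1]], dtype=np.float32)

x_train = torch.from_numpy(x_np).to(device)
y_train = torch.from_numpy(y_np).to(device)

# A single linear layer, equivalent to the post's LinearRegression module.
model = nn.Linear(1, 1).to(device)
criterion = nn.MSELoss()
optimizer = optim.SGD(model.parameters(), lr=1e-3)

num_epoch = 100
for epoch in range(num_epoch):
    out = model(x_train)
    # Prediction and target now live on the same device.
    loss = criterion(out, y_train)

    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

    if (epoch + 1) % 20 == 0:
        # str.format takes separate arguments, not a single tuple;
        # loss.item() extracts a Python float from the 0-dim loss tensor.
        print('Epoch[{}/{}], loss: {:.6f}'.format(epoch + 1, num_epoch, loss.item()))

model.eval()
with torch.no_grad():
    # Copy the result back to the CPU before converting it to a NumPy array.
    predict = model(x_train).cpu().numpy()
```

For the plot, `x_np`, `y_np` and `predict` can be passed to `plt.plot` directly. Note that `y_train()` in the original listing calls a tensor as if it were a function, which raises a `TypeError` on any hardware; `y_train.numpy()` was presumably intended.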