求大佬指点:spark streaming无法对接kafka;

1. 程序中spark streaming可以消费子系统(ubuntu)上netcat发送的数据,

1. kafka自己的消费者可以消费自己生产的数据,

1. windows10上程序代码如下:

val kafkaParam = Map(

"bootstrap.servers" -> "localhost:9092",

"key.deserializer" -> classOf[StringDeserializer],

"value.deserializer" -> classOf[StringDeserializer],

"group.id" -> "recommender",

"auto.offset.reset" -> "latest"

)

// 创建一个DStream

val kafkaStream = KafkaUtils.createDirectStream[String, String](ssc,

LocationStrategies.PreferConsistent,

ConsumerStrategies.Subscribe[String, String](Array(config("kafka.topic")), kafkaParam)

)

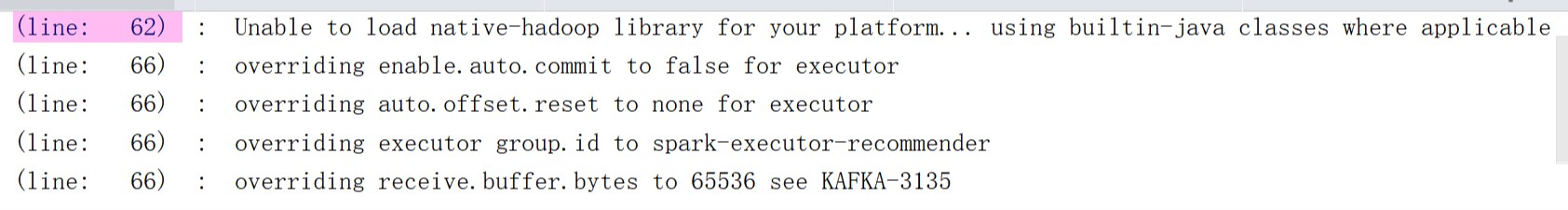

控制台warn:

Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

overriding enable.auto.commit to false for executor

overriding auto.offset.reset to none for executor

overriding executor group.id to spark-executor-recommender

overriding receive.buffer.bytes to 65536 see KAFKA-3135

然后ssc.start()一直无反应,debug跟进,

发现卡在StreamingContext.scala里的 ThreadUtils.runInNewThread("streaming-start")这里,

再跟进 ThreadUtils.scala 里面

thread.setDaemon(isDaemon)

thread.start()

thread.join()

发现最终卡在这里:thread.join(),无报错信息

注:程序启动前已经在子系统(ubuntu)上已经启动了kafka