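I've recently been setting up Hadoop on Windows by following the tutorial on the official site, and I believe the single-node setup works: I can create and delete files on HDFS, and I can also see HDFS from Eclipse. When I add a file, the view in Eclipse updates accordingly. So I think the installation is fine, but please correct me if it is not.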

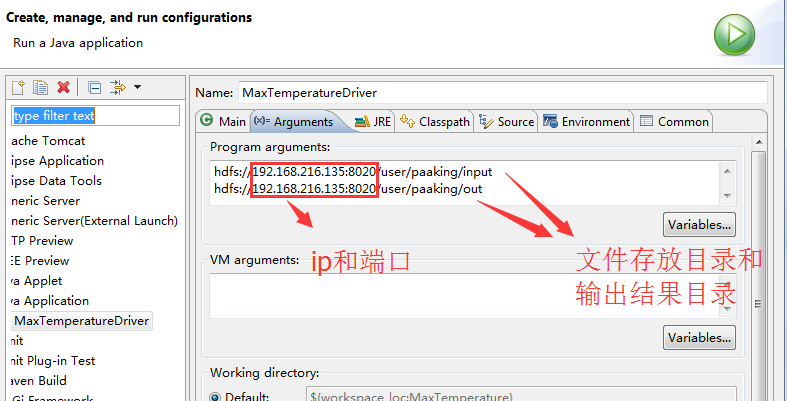

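The problem: I wrote the wordcount code in Eclipse (it should be correct, since I copied it from a book; it targets version 0.20.0) and ran it through Run Configurations with the Arguments set to (hdfs://English.txt hdfs://test/). The run failed with the following error: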

log4j:WARN No appenders could be found for logger (org.apache.hadoop.metrics2.lib.MutableMetricsFactory).

log4j:WARN Please initialize the log4j system properly.

log4j:WARN See http://logging.apache.org/log4j/1.2/faq.html#noconfig for more info.

Exception in thread "main" java.lang.IllegalArgumentException: java.net.UnknownHostException: English.txt

at org.apache.hadoop.security.SecurityUtil.buildTokenService(SecurityUtil.java:373)

at org.apache.hadoop.hdfs.NameNodeProxies.createNonHAProxy(NameNodeProxies.java:258)

at org.apache.hadoop.hdfs.NameNodeProxies.createProxy(NameNodeProxies.java:153)

at org.apache.hadoop.hdfs.DFSClient.<init>(DFSClient.java:602)

at org.apache.hadoop.hdfs.DFSClient.<init>(DFSClient.java:547)

at org.apache.hadoop.hdfs.DistributedFileSystem.initialize(DistributedFileSystem.java:139)

at org.apache.hadoop.fs.FileSystem.createFileSystem(FileSystem.java:2591)

at org.apache.hadoop.fs.FileSystem.access$200(FileSystem.java:89)

at org.apache.hadoop.fs.FileSystem$Cache.getInternal(FileSystem.java:2625)

at org.apache.hadoop.fs.FileSystem$Cache.get(FileSystem.java:2607)

at org.apache.hadoop.fs.FileSystem.get(FileSystem.java:368)

at org.apache.hadoop.fs.Path.getFileSystem(Path.java:296)

at org.apache.hadoop.mapreduce.lib.input.FileInputFormat.addInputPath(FileInputFormat.java:518)

at wordcount.main(wordcount.java:65)

Caused by: java.net.UnknownHostException: English.txt

... 14 more

Any pointers would be much appreciated. I'd love to get past this strange problem!
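One observation that may help narrow this down: in a URI of the form `hdfs://X/...`, whatever follows `//` is read as the host (the NameNode address), not as a file name. The sketch below uses only `java.net.URI` from the JDK to show how the two arguments above would be split. It is not Hadoop's own parsing code, and `localhost:9000` is purely an assumed NameNode address for illustration; the real value would be whatever `fs.defaultFS` is set to in `core-site.xml`.

```java
import java.net.URI;

class HdfsUriCheck {
    // Host component that a standard URI parser extracts from the string.
    static String hostOf(String uri) {
        return URI.create(uri).getHost();
    }

    // Path component (the part after the authority) of the same string.
    static String pathOf(String uri) {
        return URI.create(uri).getPath();
    }

    public static void main(String[] args) {
        String[] candidates = {
            "hdfs://English.txt",                 // argument from the failing run
            "hdfs://test/",                       // argument from the failing run
            "hdfs://localhost:9000/English.txt"   // ASSUMED NameNode address, illustration only
        };
        for (String c : candidates) {
            System.out.println(c + " -> host=" + hostOf(c) + ", path=" + pathOf(c));
        }
    }
}
```

If this reading is right, `English.txt` ends up in the host position, which would match the `UnknownHostException: English.txt` in the trace. Arguments that include the actual NameNode host and port before the file path would be the thing to try.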