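Server environment:

I installed Ubuntu in VirtualBox, then installed Hadoop 2.7.5 and HBase 1.3.1, and configured both.

I started HBase and then tried to access the HBase instance in the VM from Scala code on the host machine. The connection is obtained, but the call to tableExists hangs for a while and then fails with a timeout. The client code and the server configuration are as follows: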

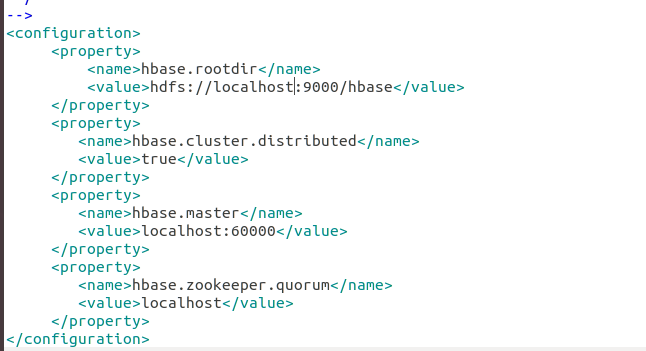

Server configuration (ZooKeeper is the instance managed by HBase itself):

hbase-site.xml

Client code:

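    import org.apache.hadoop.hbase.{HBaseConfiguration, TableName}
    import org.apache.hadoop.hbase.client.ConnectionFactory
    import org.apache.hadoop.hbase.mapreduce.TableInputFormat

    class HbaseUtil {
      def GetHbaseConfiguration(ip: String): Unit = {
        val conf = HBaseConfiguration.create()
        // IP address of the remote HBase (used as the ZooKeeper quorum)
        conf.set("hbase.zookeeper.quorum", ip)
        //conf.set("zookeeper.znode.parent", "/hbase-unsecure")
        // 2181 is the default port of the ZooKeeper instance managed by HBase
        conf.set("hbase.zookeeper.property.clientPort", "2181")
        val tableName = "jndata"
        conf.set(TableInputFormat.INPUT_TABLE, tableName)
        val con = ConnectionFactory.createConnection(conf)
        // Printed only after the connection object has been created
        println(ip + ": hbase connection success...")
        val hBaseAdmin = con.getAdmin // new HBaseAdmin(conf)
        val tn = TableName.valueOf(tableName)
        // hBaseAdmin.disableTable(tn)
        // println(tableName + " exists, deleting...")
        // Check whether the table exists (this is the call that times out)
        if (hBaseAdmin.tableExists(tn)) {
          println(tableName + " exists...")
        } else {
          println(tableName + " does not exist...")
        }
      }
    }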

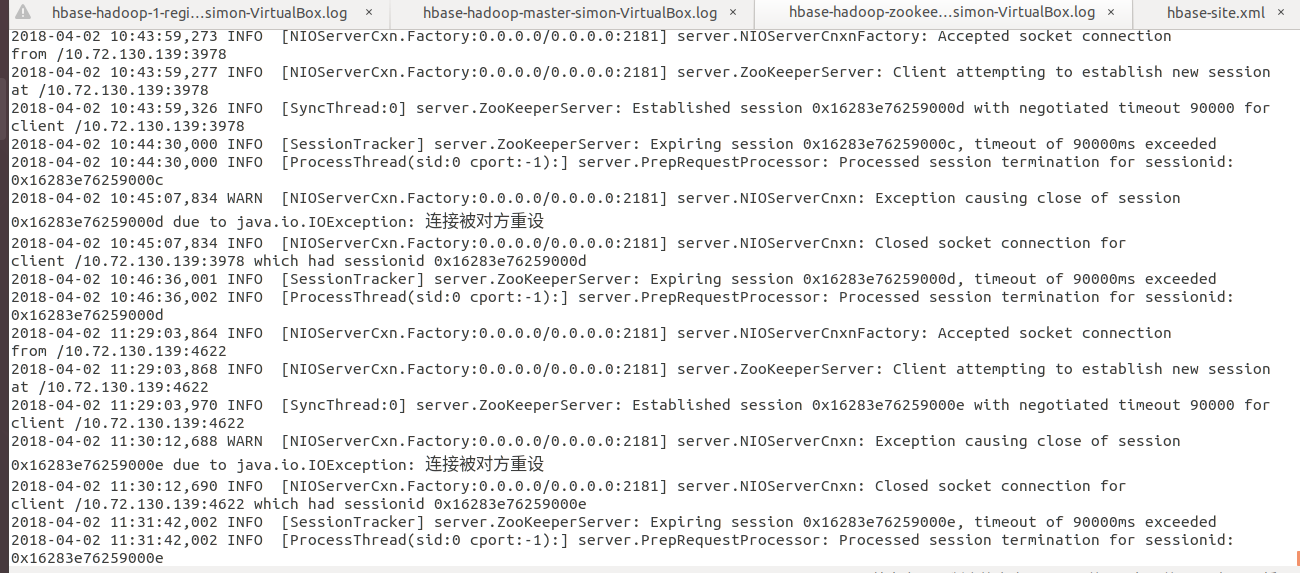

The ZooKeeper log on the server side is as follows:

The exception on the client side is as follows:

Exception in thread "main" org.apache.hadoop.hbase.client.RetriesExhaustedException: Failed after attempts=36, exceptions:

Mon Apr 02 11:30:11 CST 2018, null, java.net.SocketTimeoutException: callTimeout=60000, callDuration=76915: Connection refused: no further information row 'jndata,,' on table 'hbase:meta' at region=hbase:meta,,1.1588230740, hostname=simon-virtualbox,16201,1522631406769, seqNum=0

at org.apache.hadoop.hbase.client.RpcRetryingCallerWithReadReplicas.throwEnrichedException(RpcRetryingCallerWithReadReplicas.java:276)

at org.apache.hadoop.hbase.client.ScannerCallableWithReplicas.call(ScannerCallableWithReplicas.java:210)

at org.apache.hadoop.hbase.client.ScannerCallableWithReplicas.call(ScannerCallableWithReplicas.java:60)

at org.apache.hadoop.hbase.client.RpcRetryingCaller.callWithoutRetries(RpcRetryingCaller.java:212)

at org.apache.hadoop.hbase.client.ClientScanner.call(ClientScanner.java:314)

at org.apache.hadoop.hbase.client.ClientScanner.nextScanner(ClientScanner.java:289)

at org.apache.hadoop.hbase.client.ClientScanner.initializeScannerInConstruction(ClientScanner.java:164)

at org.apache.hadoop.hbase.client.ClientScanner.<init>(ClientScanner.java:159)

at org.apache.hadoop.hbase.client.HTable.getScanner(HTable.java:796)

at org.apache.hadoop.hbase.MetaTableAccessor.fullScan(MetaTableAccessor.java:602)

at org.apache.hadoop.hbase.MetaTableAccessor.tableExists(MetaTableAccessor.java:366)

at org.apache.hadoop.hbase.client.HBaseAdmin.tableExists(HBaseAdmin.java:408)

at rxb.flinkDemo.hbase.HbaseUtil.GetHbaseConfiguration(HbaseUtil.scala:42)

at rxb.flinkDemo.MyDemo$.main(FlinkDemo.scala:27)

at rxb.flinkDemo.MyDemo.main(FlinkDemo.scala)

Caused by: java.net.SocketTimeoutException: callTimeout=60000, callDuration=76915: Connection refused: no further information row 'jndata,,' on table 'hbase:meta' at region=hbase:meta,,1.1588230740, hostname=simon-virtualbox,16201,1522631406769, seqNum=0

at org.apache.hadoop.hbase.client.RpcRetryingCaller.callWithRetries(RpcRetryingCaller.java:171)

at org.apache.hadoop.hbase.client.ResultBoundedCompletionService$QueueingFuture.run(ResultBoundedCompletionService.java:65)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

Caused by: java.net.ConnectException: Connection refused: no further information

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717)

at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:531)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:495)

at org.apache.hadoop.hbase.ipc.RpcClientImpl$Connection.setupConnection(RpcClientImpl.java:416)

at org.apache.hadoop.hbase.ipc.RpcClientImpl$Connection.setupIOstreams(RpcClientImpl.java:722)

at org.apache.hadoop.hbase.ipc.RpcClientImpl$Connection.writeRequest(RpcClientImpl.java:909)

at org.apache.hadoop.hbase.ipc.RpcClientImpl$Connection.tracedWriteRequest(RpcClientImpl.java:873)

at org.apache.hadoop.hbase.ipc.RpcClientImpl.call(RpcClientImpl.java:1244)

at org.apache.hadoop.hbase.ipc.AbstractRpcClient.callBlockingMethod(AbstractRpcClient.java:227)

at org.apache.hadoop.hbase.ipc.AbstractRpcClient$BlockingRpcChannelImplementation.callBlockingMethod(AbstractRpcClient.java:336)

at org.apache.hadoop.hbase.protobuf.generated.ClientProtos$ClientService$BlockingStub.scan(ClientProtos.java:35396)

at org.apache.hadoop.hbase.client.ScannerCallable.openScanner(ScannerCallable.java:404)

at org.apache.hadoop.hbase.client.ScannerCallable.call(ScannerCallable.java:211)

at org.apache.hadoop.hbase.client.ScannerCallable.call(ScannerCallable.java:65)

at org.apache.hadoop.hbase.client.RpcRetryingCaller.callWithoutRetries(RpcRetryingCaller.java:212)

at org.apache.hadoop.hbase.client.ScannerCallableWithReplicas$RetryingRPC.call(ScannerCallableWithReplicas.java:364)

at org.apache.hadoop.hbase.client.ScannerCallableWithReplicas$RetryingRPC.call(ScannerCallableWithReplicas.java:338)

at org.apache.hadoop.hbase.client.RpcRetryingCaller.callWithRetries(RpcRetryingCaller.java:137)

... 4 more
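The root cause in the trace is a plain `Connection refused` against `hostname=simon-virtualbox,16201`, which generally means nothing accepted a TCP connection at that host and port as seen from the client. A minimal sketch for checking this outside of HBase, using only the JDK, might look like the following. The object name `PortProbe` and the helper `canConnect` are hypothetical names chosen for illustration; the host and ports are the ones that appear in the exception and in the client code above.

```scala
import java.io.IOException
import java.net.{InetSocketAddress, Socket}

object PortProbe {
  /** Returns true if a TCP connection to host:port succeeds within timeoutMs. */
  def canConnect(host: String, port: Int, timeoutMs: Int = 3000): Boolean = {
    val socket = new Socket()
    try {
      socket.connect(new InetSocketAddress(host, port), timeoutMs)
      true
    } catch {
      // Covers connection refused, timeouts, and unresolvable hostnames
      case _: IOException => false
    } finally {
      socket.close()
    }
  }

  def main(args: Array[String]): Unit = {
    // Hostname and port 16201 come from the exception message;
    // 2181 is the ZooKeeper client port set in the client code.
    val host = "simon-virtualbox"
    for (port <- Seq(2181, 16201)) {
      println(s"$host:$port reachable = ${canConnect(host, port)}")
    }
  }
}
```

If 2181 turns out to be reachable while 16201 is not, that would be consistent with the symptom described: the connection via ZooKeeper is obtained, but the scan of `hbase:meta` on the RegionServer fails.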

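I have tried many things, but none of them worked:

1. The firewalls on both the server and the client are turned off.

2. The client's hosts file also maps the server's IP to its hostname.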
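To double-check the hosts entry from point 2, one option is to print what the client JVM actually resolves for the hostname reported in the exception. This is only a sketch under the assumption that `simon-virtualbox` is the name the client has to reach; `HostCheck` and `resolve` are hypothetical names, not part of HBase.

```scala
import java.net.InetAddress
import scala.util.Try

object HostCheck {
  /** Returns the IP addresses the JVM resolves for host, or Nil if resolution fails. */
  def resolve(host: String): List[String] =
    Try(InetAddress.getAllByName(host).map(_.getHostAddress).toList).getOrElse(Nil)

  def main(args: Array[String]): Unit = {
    val host = "simon-virtualbox" // hostname reported in the exception
    resolve(host) match {
      case Nil   => println(s"$host does not resolve on this machine")
      case addrs => println(s"$host resolves to ${addrs.mkString(", ")}")
    }
  }
}
```

The printed address should be the VM's IP that the client can route to. It may also be worth checking the same name on the server side: if the VM's own `/etc/hosts` maps the hostname to a loopback address (Ubuntu commonly adds a `127.0.1.1` entry), the RegionServer could end up listening only on loopback, which would also produce `Connection refused` from outside. That is a possibility to rule out, not a confirmed diagnosis.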