hadoop-2.7.7

centos7

ant 1.9.14

The build-contrib.xml code is as follows:

<?xml version="1.0"?>

<!-- Load all the default properties, and any the user wants -->

<!-- to contribute (without having to type -D or edit this file -->

<!-- Property added for contrib system tests -->

<!-- Property added for contrib system tests -->

property="test.system.available"/>

<!-- all jars together -->

value="http://java.sun.com/j2se/1.4/docs/api/"/>

<!-- Property added for contrib system tests -->

location="${build.test.system}/classes"/>

<!-- IVY properties set here -->

<!-- loglevel take values like default|download-only|quiet -->

value="http://repo2.maven.org/maven2/org/apache/ivy/ivy/${ivy.version}/ivy-${ivy.version}.jar" />

<!--this is the naming policy for artifacts we want pulled down-->

value="${ant.project.name}/[conf]/[artifact]-[revision].[ext]"/>

<!-- the normal classpath -->

<!-- the unit test classpath -->

<!-- The system test classpath -->

<!-- to be overridden by sub-projects -->

<!-- ====================================================== -->

<!-- Stuff needed by all targets -->

<!-- ====================================================== -->

<!-- The below two tags added for contrib system tests -->

<!-- ====================================================== -->

<!-- Compile a Hadoop contrib's files -->

<!-- ====================================================== -->

encoding="${build.encoding}"

srcdir="${src.dir}"

includes="**/*.java"

destdir="${build.classes}"

debug="${javac.debug}"

deprecation="${javac.deprecation}">

<!-- ======================================================= -->

<!-- Compile a Hadoop contrib's example files (if available) -->

<!-- ======================================================= -->

encoding="${build.encoding}"

srcdir="${src.examples}"

includes="**/*.java"

destdir="${build.examples}"

debug="${javac.debug}">

<!-- ================================================================== -->

<!-- Compile test code -->

<!-- ================================================================== -->

encoding="${build.encoding}"

srcdir="${src.test}"

includes="**/*.java"

excludes="system/**/*.java"

destdir="${build.test}"

debug="${javac.debug}">

<!-- ================================================================== -->

<!-- Compile system test code -->

<!-- ================================================================== -->

if="test.system.available">

encoding="${build.encoding}"

srcdir="${src.test.system}"

includes="**/*.java"

destdir="${build.system.classes}"

debug="${javac.debug}">

<!-- ====================================================== -->

<!-- Make a Hadoop contrib's jar -->

<!-- ====================================================== -->

jarfile="${build.dir}/hadoop-${name}-${version}.jar"

basedir="${build.classes}"

/>

<!-- ====================================================== -->

<!-- Make a Hadoop contrib's examples jar -->

<!-- ====================================================== -->

if="examples.available" unless="skip.contrib">

<!-- ====================================================== -->

<!-- Package a Hadoop contrib -->

<!-- ====================================================== -->

<!-- ================================================================== -->

<!-- Run unit tests -->

<!-- ================================================================== -->

printsummary="yes" showoutput="${test.output}"

haltonfailure="no" fork="yes" maxmemory="512m"

errorProperty="tests.failed" failureProperty="tests.failed"

timeout="${test.timeout}">

<sysproperty key="test.build.data" value="${build.test}/data"/>

<sysproperty key="build.test" value="${build.test}"/>

<sysproperty key="src.test.data" value="${src.test.data}"/>

<sysproperty key="contrib.name" value="${name}"/>

<!-- requires fork=yes for:

relative File paths to use the specified user.dir

classpath to use build/contrib/*.jar

-->

<sysproperty key="user.dir" value="${build.test}/data"/>

<sysproperty key="fs.default.name" value="${fs.default.name}"/>

<sysproperty key="hadoop.test.localoutputfile" value="${hadoop.test.localoutputfile}"/>

<sysproperty key="hadoop.log.dir" value="${hadoop.log.dir}"/>

<sysproperty key="taskcontroller-path" value="${taskcontroller-path}"/>

<sysproperty key="taskcontroller-ugi" value="${taskcontroller-ugi}"/>

<classpath refid="test.classpath"/>

<formatter type="${test.junit.output.format}" />

<batchtest todir="${build.test}" unless="testcase">

<fileset dir="${src.test}"

includes="**/Test*.java" excludes="**/${test.exclude}.java, system/**/*.java" />

</batchtest>

<batchtest todir="${build.test}" if="testcase">

<fileset dir="${src.test}" includes="**/${testcase}.java" excludes="system/**/*.java" />

</batchtest>

</junit>

<antcall target="checkfailure"/>

<!-- ================================================================== -->

<!-- Run system tests -->

<!-- ================================================================== -->

if="test.system.available">

outputproperty="nonspace.os">

value="${nonspace.os}-${os.arch}-${sun.arch.data.model}"/>

value="${hadoop.root}/build/native/${build.platform}"/>

value="${hadoop.root}/build/c++-examples/${build.platform}"/>

classpath="test.system.classpath"

test.dir="${build.test.system}"

fileset.dir="${hadoop.root}/src/contrib/${name}/src/test/system"

hadoop.conf.dir.deployed="${hadoop.conf.dir.deployed}">

todir="@{test.dir}/extraconf" />

todir="@{test.dir}/extraconf" />

printsummary="${test.junit.printsummary}"

haltonfailure="${test.junit.haltonfailure}"

fork="yes"

forkmode="${test.junit.fork.mode}"

maxmemory="${test.junit.maxmemory}"

dir="${basedir}" timeout="${test.timeout}"

errorProperty="tests.failed" failureProperty="tests.failed">

value="${build.native}/lib:${lib.dir}/native/${build.platform}"/>

<!-- set compile.c++ in the child jvm only if it is set -->

<!-- Pass probability specifications to the spawn JVM -->

value="@{hadoop.conf.dir.deployed}" />

excludes="**/${test.exclude}.java aop/** system/**">

Contrib Tests failed!

<!-- ================================================================== -->

<!-- Clean. Delete the build files, and their directories -->

<!-- ================================================================== -->

<!--target name="ivy-init-antlib" depends="ivy-download,ivy-probe-antlib" unless="ivy.found"-->

loaderRef="ivyLoader">

You need Apache Ivy 2.0 or later from http://ant.apache.org/

It could not be loaded from ${ivy_repo_url}

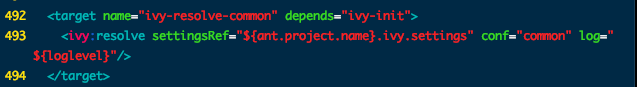

description="Retrieve Ivy-managed artifacts for the compile/test configurations">

pattern="${build.ivy.lib.dir}/${ivy.artifact.retrieve.pattern}" sync="true" log="${loglevel}"/>
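The `ivy_repo_url` default above uses plain `http://` against `repo2.maven.org`. Maven Central has rejected plain-HTTP requests since January 2020, so the Ivy jar download driven by that URL may fail. A minimal sketch, assuming build.xml imports build-contrib.xml (the import line is not visible in the post) and assuming Ivy version 2.1.0 (check `ivy.version` in your ivy/libraries.properties): define the property first, because an Ant property cannot be changed once it is set. Passing `-Divy_repo_url=...` on the `ant` command line has the same effect.

```xml
<!-- Sketch (assumption): place this in build.xml BEFORE the <import> of
     build-contrib.xml. The first definition of an Ant property wins, so this
     HTTPS URL takes precedence over the http://repo2.maven.org default.
     2.1.0 is an assumed Ivy version; use the ivy.version value from your
     ivy/libraries.properties. -->
<property name="ivy_repo_url"
          value="https://repo1.maven.org/maven2/org/apache/ivy/ivy/2.1.0/ivy-2.1.0.jar"/>
```

The version is hard-coded on purpose: if `${ivy.version}` has not been loaded yet when this line runs, Ant leaves the placeholder unexpanded in the URL.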

The build.xml code is as follows:

```xml
<?xml version="1.0" encoding="UTF-8" standalone="no"?>
<include name="org.eclipse.team.cvs.ssh2*.jar"/>
<include name="com.jcraft.jsch*.jar"/>
</fileset>
<!-- Override classpath to include Eclipse SDK jars -->
<!--pathelement location="${hadoop.root}/build/classes"/-->
<!-- Skip building if eclipse.home is unset. -->
encoding="${build.encoding}"
srcdir="${src.dir}"
includes="**/*.java"
destdir="${build.classes}"
debug="${javac.debug}"
deprecation="${javac.deprecation}">
<!-- Override jar target to specify manifest -->
<copy todir="${build.dir}/classes" verbose="true">
<fileset dir="${root}/src/java">
<include name="*.xml"/>
</fileset>
</copy>
<copy file="${hadoop.home}/share/hadoop/common/lib/protobuf-java-${protobuf.version}.jar" todir="${build.dir}/lib" verbose="true"/>
<copy file="${hadoop.home}/share/hadoop/common/lib/log4j-${log4j.version}.jar" todir="${build.dir}/lib" verbose="true"/>
<copy file="${hadoop.home}/share/hadoop/common/lib/commons-cli-${commons-cli.version}.jar" todir="${build.dir}/lib" verbose="true"/>
<copy file="${hadoop.home}/share/hadoop/common/lib/commons-configuration-${commons-configuration.version}.jar" todir="${build.dir}/lib" verbose="true"/>
<copy file="${hadoop.home}/share/hadoop/common/lib/commons-lang-${commons-lang.version}.jar" todir="${build.dir}/lib" verbose="true"/>
<copy file="${hadoop.home}/share/hadoop/common/lib/commons-collections-${commons-collections.version}.jar" todir="${build.dir}/lib" verbose="true"/>
<copy file="${hadoop.home}/share/hadoop/common/lib/jackson-core-asl-${jackson.version}.jar" todir="${build.dir}/lib" verbose="true"/>
<copy file="${hadoop.home}/share/hadoop/common/lib/jackson-mapper-asl-${jackson.version}.jar" todir="${build.dir}/lib" verbose="true"/>
<copy file="${hadoop.home}/share/hadoop/common/lib/slf4j-log4j12-${slf4j-log4j12.version}.jar" todir="${build.dir}/lib" verbose="true"/>
<copy file="${hadoop.home}/share/hadoop/common/lib/slf4j-api-${slf4j-api.version}.jar" todir="${build.dir}/lib" verbose="true"/>
<copy file="${hadoop.home}/share/hadoop/common/lib/guava-${guava.version}.jar" todir="${build.dir}/lib" verbose="true"/>
<copy file="${hadoop.home}/share/hadoop/common/lib/hadoop-auth-${hadoop.version}.jar" todir="${build.dir}/lib" verbose="true"/>
<copy file="${hadoop.home}/share/hadoop/common/lib/commons-cli-${commons-cli.version}.jar" todir="${build.dir}/lib" verbose="true"/>
<copy file="${hadoop.home}/share/hadoop/common/lib/netty-${netty.version}.jar" todir="${build.dir}/lib" verbose="true"/>
<copy file="${hadoop.home}/share/hadoop/common/lib/htrace-core-${htrace.version}.jar" todir="${build.dir}/lib" verbose="true"/>
```
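Each `<copy file="...">` above names one exact jar, and Ant's `copy` task fails the build by default when that file does not exist. That happens whenever a `*.version` property does not match the jar Hadoop actually ships; the manifest below already lists both a plain and an `-incubating` htrace-core name. Also, servlet-api, commons-io and commons-httpclient appear in the manifest but not among the copy lines shown. A sketch that copies by wildcard instead. The include patterns are assumptions to adapt:

```xml
<!-- Sketch: copy jars by pattern instead of by exact file name.
     Unlike <copy file="...">, a fileset that matches no file copies nothing
     and does not fail the build. The include patterns are assumptions;
     adjust them to the jars your manifest lists. -->
<copy todir="${build.dir}/lib" verbose="true">
  <fileset dir="${hadoop.home}/share/hadoop/common/lib">
    <include name="htrace-core-*.jar"/>
    <include name="servlet-api-*.jar"/>
    <include name="commons-io-*.jar"/>
    <include name="commons-httpclient-*.jar"/>
  </fileset>
</copy>
```

The copied files keep their original names, so each manifest entry still has to spell out the exact file name that ends up in `${build.dir}/lib`.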

```xml
<jar
jarfile="${build.dir}/hadoop-${name}-${hadoop.version}.jar"
manifest="${root}/META-INF/MANIFEST.MF">
<manifest>
value="classes/,
lib/hadoop-mapreduce-client-core-${hadoop.version}.jar,
lib/hadoop-mapreduce-client-common-${hadoop.version}.jar,
lib/hadoop-mapreduce-client-jobclient-${hadoop.version}.jar,
lib/hadoop-auth-${hadoop.version}.jar,
lib/hadoop-common-${hadoop.version}.jar,
lib/hadoop-hdfs-${hadoop.version}.jar,
lib/protobuf-java-${protobuf.version}.jar,
lib/log4j-${log4j.version}.jar,
lib/commons-cli-${commons-cli.version}.jar,
lib/commons-configuration-${commons-configuration.version}.jar,
lib/commons-httpclient-${commons-httpclient.version}.jar,
lib/commons-lang-${commons-lang.version}.jar,
lib/commons-collections-${commons-collections.version}.jar,
lib/jackson-core-asl-${jackson.version}.jar,
lib/jackson-mapper-asl-${jackson.version}.jar,
lib/slf4j-log4j12-${slf4j-log4j12.version}.jar,
lib/slf4j-api-${slf4j-api.version}.jar,
lib/guava-${guava.version}.jar,
lib/netty-${netty.version}.jar,
lib/htrace-core-${htrace.version}.jar,
lib/servlet-api-${servlet-api.version}.jar,
lib/commons-io-${commons-io.version}.jar,
lib/htrace-core-${htrace.version}-incubating.jar"/>
<!--fileset dir="${build.dir}" includes="*.xml"/-->
```
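Entries in the manifest value above are comma-separated, so every line except the last needs a trailing comma; if one is missing, two jar paths are no longer separate entries and are likely to be read as one malformed path. A minimal sketch of the `<manifest>` element nested in `<jar>`, assuming the attribute whose name was lost in the post is the OSGi `Bundle-ClassPath` header, and showing only a few of the entries:

```xml
<!-- Sketch (assumption): the attribute name is taken to be Bundle-ClassPath.
     Entries are separated by commas. The XML parser turns the line breaks
     inside the value into spaces, so they are only for readability.
     Only a few of the entries are shown. -->
<manifest>
  <attribute name="Bundle-ClassPath"
             value="classes/,
 lib/htrace-core-${htrace.version}.jar,
 lib/servlet-api-${servlet-api.version}.jar,
 lib/commons-io-${commons-io.version}.jar,
 lib/htrace-core-${htrace.version}-incubating.jar"/>
</manifest>
```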

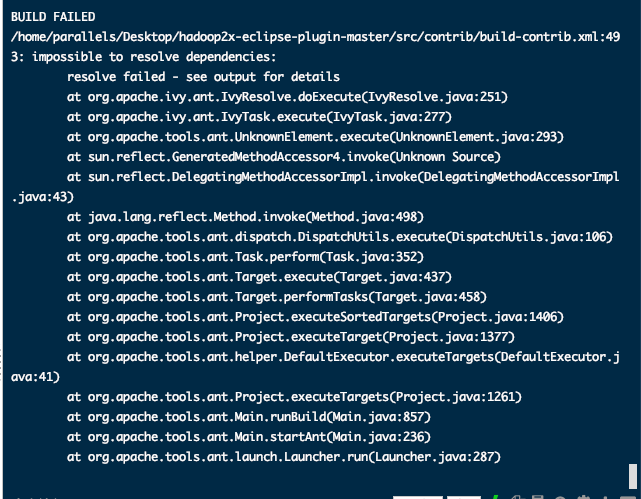

Could any of the experts here help me figure out what is causing line 493 to fail to parse? This is urgent.