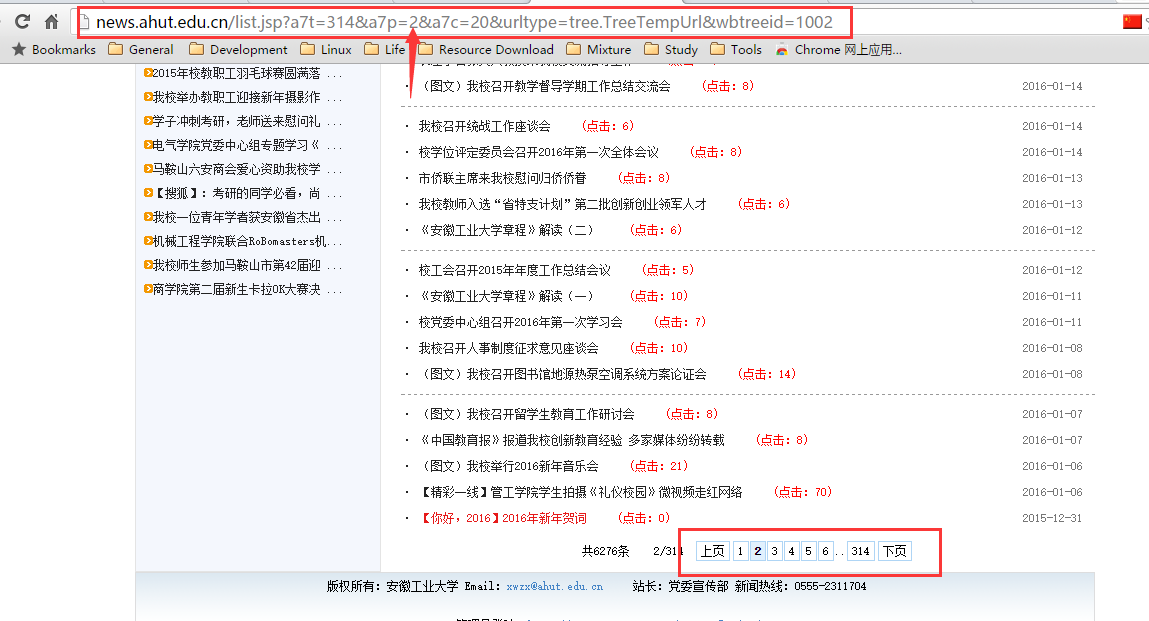

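I have only just started learning web scraping. Following an example I found online, I wrote my own spider that should loop through every page and collect all the news titles and links, but it only scrapes the start page.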

This is the start page being crawled.

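As the pager at the bottom shows, the list spans many pages. I want to scrape every news entry, and the URLs of the individual pages differ in only one number.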
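Because the page URLs differ only in one number, one option is to generate them directly instead of following pager links. The sketch below assumes that number is the `a7p` query parameter (the start URL uses `a7p=2`); the helper names `page_url`/`page_urls` and the last page number are hypothetical, and the real last page should be read off the site's pager. The resulting list could be used as a spider's `start_urls`.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

START_URL = ('http://news.ahut.edu.cn/list.jsp?a7t=314&a7p=2&a7c=20'
             '&urltype=tree.TreeTempUrl&wbtreeid=1002')


def page_url(base_url, page):
    """Return base_url with the (assumed) page parameter a7p set to `page`."""
    parts = urlsplit(base_url)
    # parse_qsl keeps the parameters in their original order
    query = [(k, str(page) if k == 'a7p' else v) for k, v in parse_qsl(parts.query)]
    return urlunsplit(parts._replace(query=urlencode(query)))


def page_urls(base_url, last_page):
    """Build the URLs for pages 1..last_page (last_page is a guess you supply)."""
    return [page_url(base_url, p) for p in range(1, last_page + 1)]


for url in page_urls(START_URL, 3):
    print(url)
```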

The relevant code in the spider folder is as follows:

```python
# -*- coding:utf-8 -*-
from scrapy.spiders import Spider
from scrapy.selector import Selector
from ahutNews.items import AhutnewsItem
from scrapy.spiders import Rule
from scrapy.linkextractors import LinkExtractor


class AhutNewsSpider(Spider):
    name = 'ahutnews'
    allowed_domains="ahut.edu.cn"
    start_urls=['http://news.ahut.edu.cn/list.jsp?a7t=314&a7p=2&a7c=20&urltype=tree.TreeTempUrl&wbtreeid=1002']
    rules=(
        Rule(LinkExtractor(allow=r"/list.jsp\?a7t=314&a7p=*"),
             callback="parse",follow=True),
    )

    def parse(self, response):
        hxs = Selector(response)
        titles = hxs.xpath('//tr[@height="26"]')
        items = []
        for data in titles:
            item = AhutnewsItem()
            title=data.xpath('td[1]/a/@title').extract()
            link=data.xpath('td[1]/a/@href').extract()
            item['title'] = [t.encode('utf-8') for t in title]
            item['link'] = "news.ahut.edu.cn" + [l.encode('utf-8') for l in link][0]
            items.append(item)
        return items
```