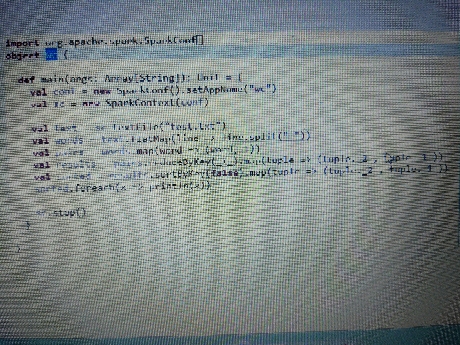

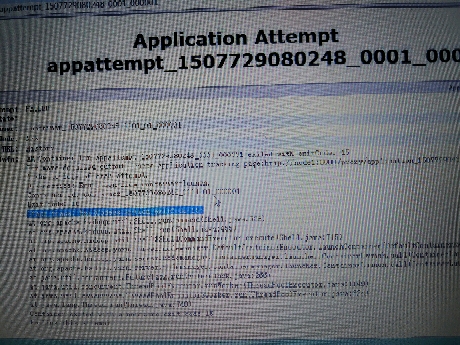

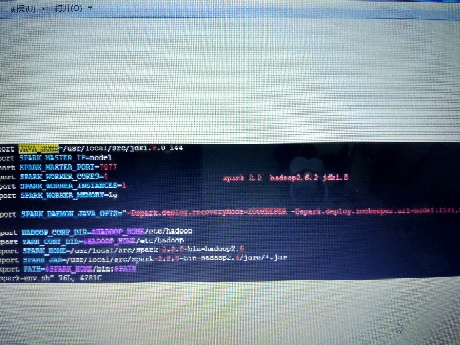

This is a test I wrote for my own learning, modeled on an example from the web. I wrote a WordCount example in Scala; it runs in MyEclipse and produces the expected result. After exporting the example as a jar and running it on the Hadoop cluster, I get the following error: `Stack trace: ExitCodeException exitCode=15`.

Any help would be greatly appreciated. This problem has been bothering me for a week, and I have searched online for a long time without finding a solution. I don't have many points left to offer. Thank you very much!

Environment: hadoop2.6.2 spark2.2 jdk1.8 scala2.2

The Hadoop cluster itself should be fine; the page on port 50070 opens in a browser.

Below is the Spark on YARN environment:

    export JAVA_HOME=/usr/local/src/jdk1.8.0_144
    export SPARK_MASTER_IP=node1
    export SPARK_MASTER_PORT=7077
    export SPARK_WORKER_CORES=1
    export SPARK_WORKER_INSTANCES=1
    export SPARK_WORKER_MEMORY=1g
    export SPARK_DAEMON_JAVA_OPTS="-Dspark.deploy.recoveryMode=ZOOKEEPER -Dspark.deploy.zookeeper.url=node1:2181,node2:2181,node3:2181"
    export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop
    export YARN_CONF_DIR=$HADOOP_HOME/etc/hadoop
    export SPARK_HOME=/usr/local/src/spark-2.2.0-bin-hadoop2.6
    export SPARK_JAR=/usr/local/src/spark-2.2.0-bin-hadoop2.6/jars/*.jar
    export PATH=$SPARK_HOME/bin:$PATH

WordCount example:

    import org.apache.spark.{SparkConf, SparkContext}

    object wc {
      def main(args: Array[String]): Unit = {
        val conf = new SparkConf().setAppName("wc")
        val sc = new SparkContext(conf)
        // Relative path: resolved against the default file system of the cluster
        val text = sc.textFile("test.txt")
        val words = text.flatMap(line => line.split(" "))
        val pairs = words.map(word => (word, 1))
        // Swap to (count, word) so the pairs can be sorted by count
        val results = pairs.reduceByKey(_ + _).map(tuple => (tuple._2, tuple._1))
        // Sort descending by count, then swap back to (word, count)
        val sorted = results.sortByKey(false).map(tuple => (tuple._2, tuple._1))
        sorted.foreach(x => println(x))
        sc.stop()
      }
    }

Error output:

    Application Attempt State: FAILED
    AM Container: container_1507729080248_0001_01_000001
    Node: N/A
    Tracking URL: History
    Diagnostics Info: AM Container for appattempt_1507729080248_0001_000001 exited with exitCode: 15
    For more detailed output, check application tracking page: http://node1:8088/proxy/application_1507729080248_0001/ Then, click on links to logs of each attempt.
    Diagnostics: Exception from container-launch.
    Container id: container_1507729080248_0001_01_000001
    Exit code: 15
    Stack trace: ExitCodeException exitCode=15:
        at org.apache.hadoop.util.Shell.runCommand(Shell.java:538)
        at org.apache.hadoop.util.Shell.run(Shell.java:455)
        at org.apache.hadoop.util.Shell$ShellCommandExecutor.execute(Shell.java:715)
        at org.apache.hadoop.yarn.server.nodemanager.DefaultContainerExecutor.launchContainer(DefaultContainerExecutor.java:211)
        at org.apache.hadoop.yarn.server.nodemanager.containermanager.launcher.ContainerLaunch.call(ContainerLaunch.java:302)
        at org.apache.hadoop.yarn.server.nodemanager.containermanager.launcher.ContainerLaunch.call(ContainerLaunch.java:82)
        at java.util.concurrent.FutureTask.run(FutureTask.java:266)
        at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
        at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
        at java.lang.Thread.run(Thread.java:748)
    Container exited with a non-zero exit code 15
    Failing this attempt
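The stack trace above only shows the NodeManager reporting that the container exited; the actual exception would normally be in the ApplicationMaster's own log, as the diagnostics message suggests. A minimal sketch of pulling that log from the command line, assuming YARN log aggregation is enabled on this cluster (an assumption, not something stated in the post) and using the application ID from the tracking URL:

```shell
# Application ID taken from the tracking URL in the diagnostics output.
APP_ID="application_1507729080248_0001"

# Build the log-fetch command. Assumption: log aggregation is enabled
# (yarn.log-aggregation-enable=true); otherwise the logs stay on the
# NodeManager's local disk and this command finds nothing.
LOG_CMD="yarn logs -applicationId ${APP_ID}"

# Print the command; run it on a node where the YARN client is configured.
echo "${LOG_CMD}"
```

Running the printed command and searching its output for the first `Exception` is usually the quickest way to see what the driver itself reported, rather than the generic exit code.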

Spark cluster run error: exit code 15
