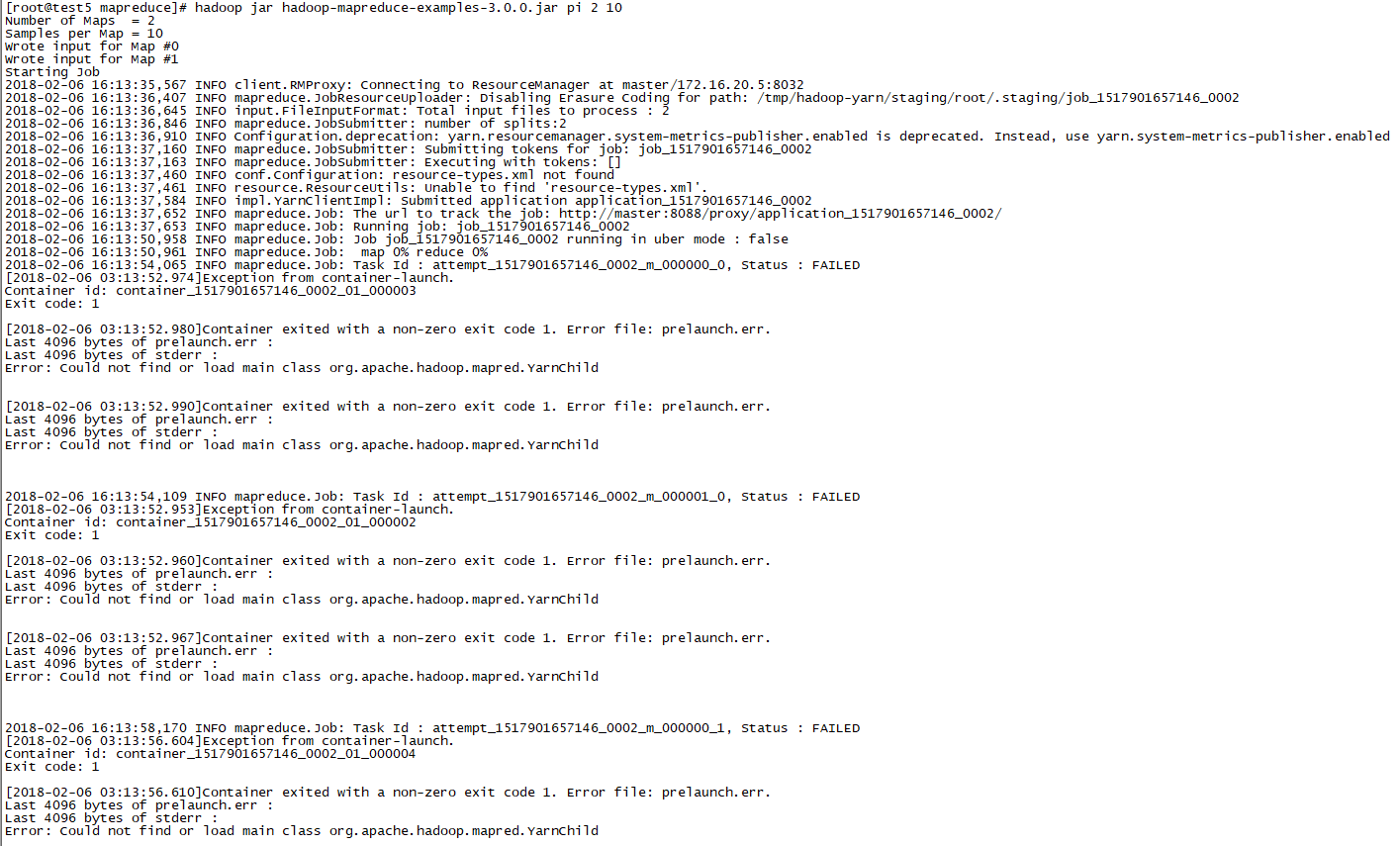

Could not find or load main class org.apache.hadoop.mapred.YarnChild

I'm using Hadoop 3.0.0. Everything is configured and all the daemons start up fine.

But when I run `hadoop-mapreduce-examples-3.0.0.jar`, I get the error above (the same thing happens with my own programs).

Here is my configuration:

```xml
<!-- core-site.xml -->
<property>
  <name>fs.defaultFS</name>
  <value>hdfs://master:9000</value>
</property>
<property>
  <name>hadoop.tmp.dir</name>
  <value>/tmp/hadoopdata</value>
</property>
```

```xml
<!-- hdfs-site.xml -->
<property>
  <name>dfs.replication</name>
  <value>3</value>
</property>
```

```xml
<!-- yarn-site.xml -->
<property>
  <name>yarn.nodemanager.aux-services</name>
  <value>mapreduce_shuffle</value>
</property>
<property>
  <name>yarn.resourcemanager.hostname</name>
  <value>master</value>
</property>
```

```xml
<!-- mapred-site.xml -->
<property>
  <name>mapreduce.framework.name</name>
  <value>yarn</value>
</property>
<property>
  <name>yarn.app.mapreduce.am.env</name>
  <value>HADOOP_MAPRED_HOME=$HADOOP_COMMON_HOME</value>
</property>
```

```bash
export HADOOP_HOME=/data/hadoop-3.0.0
export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH
```

I have not set `HADOOP_CLASSPATH`.
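One detail worth checking in the config above: `YarnChild` is the main class of the map and reduce task JVMs, while `yarn.app.mapreduce.am.env` only applies to the MapReduce ApplicationMaster container. The minimal Python sketch below reports which of the three MapReduce container env properties (`yarn.app.mapreduce.am.env`, `mapreduce.map.env`, `mapreduce.reduce.env`) actually sets `HADOOP_MAPRED_HOME`. It only parses the XML and does not contact the cluster. The helper names `load_properties` and `missing_mapred_home` are hypothetical, and the idea that a missing `HADOOP_MAPRED_HOME` in the task containers causes this error is an assumption (a cause often reported for Hadoop 3.x), not something confirmed here.

```python
import xml.etree.ElementTree as ET

# Env properties MapReduce passes to the containers it launches:
# the ApplicationMaster, then the map tasks, then the reduce tasks.
ENV_PROPERTIES = (
    "yarn.app.mapreduce.am.env",
    "mapreduce.map.env",
    "mapreduce.reduce.env",
)

# The mapred-site.xml properties from the post, wrapped in <configuration>
# the way the real file is.
MAPRED_SITE = """<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
  <property>
    <name>yarn.app.mapreduce.am.env</name>
    <value>HADOOP_MAPRED_HOME=$HADOOP_COMMON_HOME</value>
  </property>
</configuration>"""


def load_properties(xml_text):
    """Parse a Hadoop *-site.xml string into a {name: value} dict (hypothetical helper)."""
    root = ET.fromstring(xml_text)
    props = {}
    for prop in root.iter("property"):
        name = prop.findtext("name")
        if name:
            # findtext returns None for a missing <value>, "" for an empty one
            props[name.strip()] = (prop.findtext("value") or "").strip()
    return props


def missing_mapred_home(props):
    """Return the env properties that do not assign HADOOP_MAPRED_HOME (hypothetical helper).

    Assumes the comma-separated VAR=value format. Variables such as
    $HADOOP_COMMON_HOME are not expanded; it only checks that one is assigned.
    """
    missing = []
    for key in ENV_PROPERTIES:
        entries = [e.strip() for e in props.get(key, "").split(",")]
        if not any(e.startswith("HADOOP_MAPRED_HOME=") for e in entries):
            missing.append(key)
    return missing


if __name__ == "__main__":
    print(missing_mapred_home(load_properties(MAPRED_SITE)))
```

Run against the `mapred-site.xml` shown above, it reports `['mapreduce.map.env', 'mapreduce.reduce.env']`: only the ApplicationMaster container is given `HADOOP_MAPRED_HOME`, not the task containers that would run `YarnChild`.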

I've searched Baidu, Google and Stack Overflow for quite a while without finding an answer. Any help would be much appreciated!