textFile = sc.textFile("file:///home/hduser/pythonwork/ipynotebook/data/test.txt")

stringRDD = textFile.flatMap(lambda line: line.split(' '))

stringRDD.collect()

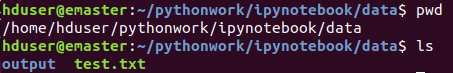

The test file does exist at this path.

The error is:

Py4JJavaError: An error occurred while calling z:org.apache.spark.api.python.PythonRDD.collectAndServe.

: org.apache.spark.SparkException: Job aborted due to stage failure: Task 1 in stage 8.0 failed 4 times, most recent failure: Lost task 1.3 in stage 8.0 (TID 58, 192.168.56.103, executor 1): java.io.FileNotFoundException: File file:/home/hduser/pythonwork/ipynotebook/data/test.txt does not exist

...

Driver stacktrace:

at org.apache.spark.scheduler.DAGScheduler.org$apache$spark$scheduler$DAGScheduler$$failJobAndIndependentStages(DAGScheduler.scala:1599)

...

Caused by: java.io.FileNotFoundException: File file:/home/hduser/pythonwork/ipynotebook/data/test.txt does not exist

I also noticed that if I change test.txt in the code to some other name, e.g. tesst.txt (a file that definitely does not exist), the error actually changes:

Py4JJavaError: An error occurred while calling z:org.apache.spark.api.python.PythonRDD.collectAndServe.

: org.apache.hadoop.mapred.InvalidInputException: Input path does not exist: file:/home/hduser/pythonwork/ipynotebook/data/tesst.txt

at org.apache.hadoop.mapred.FileInputFormat.singleThreadedListStatus(FileInputFormat.java:287)

at org.apache.hadoop.mapred.FileInputFormat.listStatus(FileInputFormat.java:229)

at org.apache.hadoop.mapred.FileInputFormat.getSplits(FileInputFormat.java:315)

at org.apache.spark.rdd.HadoopRDD.getPartitions(HadoopRDD.scala:200)

at org.apache.spark.rdd.RDD$$anonfun$partitions$2.apply(RDD.scala:253)

at org.apache.spark.rdd.RDD$$anonfun$partitions$2.apply(RDD.scala:251)

at scala.Option.getOrElse(Option.scala:121)

at org.apache.spark.rdd.RDD.partitions(RDD.scala:251)

at org.apache.spark.rdd.MapPartitionsRDD.getPartitions(MapPartitionsRDD.scala:35)

at org.apache.spark.rdd.RDD$$anonfun$partitions$2.apply(RDD.scala:253)

at org.apache.spark.rdd.RDD$$anonfun$partitions$2.apply(RDD.scala:251)

at scala.Option.getOrElse(Option.scala:121)

at org.apache.spark.rdd.RDD.partitions(RDD.scala:251)

at org.apache.spark.api.python.PythonRDD.getPartitions(PythonRDD.scala:53)

at org.apache.spark.rdd.RDD$$anonfun$partitions$2.apply(RDD.scala:253)

at org.apache.spark.rdd.RDD$$anonfun$partitions$2.apply(RDD.scala:251)

at scala.Option.getOrElse(Option.scala:121)

at org.apache.spark.rdd.RDD.partitions(RDD.scala:251)

at org.apache.spark.SparkContext.runJob(SparkContext.scala:2092)

at org.apache.spark.rdd.RDD$$anonfun$collect$1.apply(RDD.scala:939)

at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:151)

at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:112)

at org.apache.spark.rdd.RDD.withScope(RDD.scala:363)

at org.apache.spark.rdd.RDD.collect(RDD.scala:938)

at org.apache.spark.api.python.PythonRDD$.collectAndServe(PythonRDD.scala:153)

at org.apache.spark.api.python.PythonRDD.collectAndServe(PythonRDD.scala)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:483)

at py4j.reflection.MethodInvoker.invoke(MethodInvoker.java:244)

at py4j.reflection.ReflectionEngine.invoke(ReflectionEngine.java:357)

at py4j.Gateway.invoke(Gateway.java:282)

at py4j.commands.AbstractCommand.invokeMethod(AbstractCommand.java:132)

at py4j.commands.CallCommand.execute(CallCommand.java:79)

at py4j.GatewayConnection.run(GatewayConnection.java:214)

at java.lang.Thread.run(Thread.java:745)

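Notes:

I am running this on the Spark cluster: 'spark://emaster:7077'

If I run in local mode instead, the local test.txt file is found.

Files on the HDFS file system are found (when running on the Spark cluster).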
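For anyone who wants to reproduce this: the first error is reported by a task on executor 192.168.56.103, so one thing worth checking is whether the file exists at the same path on every node and not only on the machine where the notebook runs. Below is a minimal sketch of such a check. The helper names `local_path_from_uri` and `check_on_this_node` are made up for illustration, and the commented-out Spark call assumes the same live `sc` as in my code above.

```python
import os
import socket
from urllib.parse import urlparse


def local_path_from_uri(uri):
    """Return the filesystem path for a file:// URI or a plain path."""
    parsed = urlparse(uri)
    if parsed.scheme not in ("", "file"):
        raise ValueError("not a local file URI: %s" % uri)
    return parsed.path


def check_on_this_node(uri):
    """Return (hostname, exists) for whichever machine runs this function."""
    path = local_path_from_uri(uri)
    return socket.gethostname(), os.path.exists(path)


uri = "file:///home/hduser/pythonwork/ipynotebook/data/test.txt"

# Check on the machine running this script (the driver, in a notebook).
print(check_on_this_node(uri))

# Assumption: with a live SparkContext `sc`, the same check could be pushed
# to the executors. Tasks are not guaranteed to land on every worker, so
# more partitions than workers are used here to make that likely.
# sc.parallelize(range(100), 20).map(lambda _: check_on_this_node(uri)).distinct().collect()
```

If some hostname comes back with `False`, that would be consistent with the FileNotFoundException being raised on an executor while the driver can still see the file.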

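At the elided places I left out some less important error messages because of the length limit; reply if you would like to see them.

Any help from the experts here would be hugely appreciated!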