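The code opens a camera, finds the red regions in each video frame, and then takes the largest of those regions as the ROI.

It runs fine with the laptop's built-in camera.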

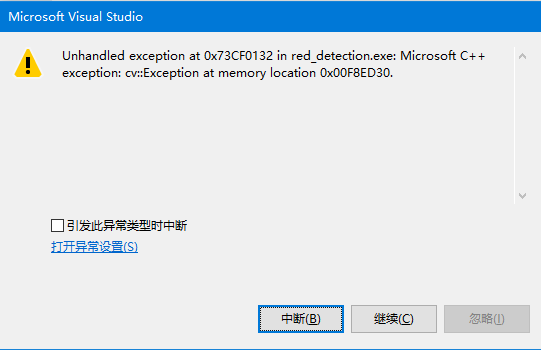

After switching to an external USB camera, it still compiles and links without problems, but it crashes at run time.

//#include "stdafx.h"

#include "opencv2/opencv.hpp"

#include <iostream>

#include <vector>

using namespace std;

using namespace cv;

// Flood fill

void fillHole(const Mat srcBw, Mat &dstBw)

{

Size m_Size = srcBw.size();

Mat Temp = Mat::zeros(m_Size.height + 2, m_Size.width + 2, srcBw.type()); // padded image

srcBw.copyTo(Temp(Range(1, m_Size.height + 1), Range(1, m_Size.width + 1)));

cv::floodFill(Temp, Point(0, 0), Scalar(255,255,255));

//cv::floodFill(Temp, Point(30, 29), Scalar(255, 0, 0), 0, Scalar(10, 10, 10), Scalar(10, 10, 10));

Mat cutImg; // crop the padded image back to the original size

Temp(Range(1, m_Size.height + 1), Range(1, m_Size.width + 1)).copyTo(cutImg);

dstBw = srcBw | (~cutImg);

}

int main(int argc, char** argv)

{

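// Loop variables for scanning the image

int i = 0;

int j = 0;

// Capture video from the camera

VideoCapture capture(0);

// Read from a video file.

//VideoCapture capture("E:/weixian.mp4");

// Total number of frames in the video

//long totalFrameNumber = capture.get(CV_CAP_PROP_FRAME_COUNT);

// num is a frame counter.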

int num = 1;

while (1)

//while (num <= totalFrameNumber)

{

//IplImage* frame;

Mat frame1, frame;

// Store the captured frame in frame1

capture >> frame1;

blur(frame1, frame1, Size(7, 7));

// Scale factor

double fScale = 0.5;

// Target image size

CvSize czSize;

czSize.width = frame1.cols*fScale;

czSize.height = frame1.rows*fScale;

cv::resize(frame1, frame, cv::Size(czSize.width, czSize.height), 0, 0, cv::INTER_LINEAR);

cv::Mat rgbImage = frame, hsvImage;

// Convert to HSV space

cv::cvtColor(rgbImage, hsvImage, cv::COLOR_BGR2HSV);

// To get the image dimensions,

// convert the image type here

// Named header that shares hsvImage's pixel data (avoids taking the address of a temporary)

IplImage hsvHeader = hsvImage;

IplImage* tempImage = &hsvHeader;

IplImage* extractionImage = &hsvHeader;

IplImage* reversionImage = &hsvHeader;

IplImage* grayImage = &hsvHeader;

IplImage* binaryImage = &hsvHeader;

// Show the images in both color spaces

imshow("RGB", rgbImage);

//imshow("HSV", hsvImage);

for (i = 0; i < tempImage->height; i++)

{

for (j = 0; j < tempImage->width; j++)

{

// Get the HSV value of the pixel at (j, i)

CvScalar s_hsv = cvGet2D(tempImage, i, j);

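/*

In OpenCV, H ranges over 0~180; red is roughly H in (0~8)∪(160,180).

S is saturation and should generally exceed some value; when S is too low the color is gray (reference: S>80).

V is brightness; too low is black, too high is white (reference: 220>V>50).

*/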

CvScalar s;

if (!(((s_hsv.val[0]>0) && (s_hsv.val[0]<8)) || (s_hsv.val[0]>178) && (s_hsv.val[0]<180)))

{

s.val[0] = 0;

s.val[1] = 0;

s.val[2] = 0;

cvSet2D(tempImage, i, j, s);

}

// This else branch is my own addition: if the pixel is red, set it to white.

else

{

s.val[0] = 180;

s.val[1] = 30;

s.val[2] = 255;

cvSet2D(tempImage, i, j, s);

}

}

}

// Extract the red component

cvConvert(tempImage, extractionImage);

//cvNamedWindow("Extraction");

//cvShowImage("Extraction", extractionImage);

// Convert the color space back to BGR

cvCvtColor(extractionImage, reversionImage, cv::COLOR_HSV2BGR);

//cvNamedWindow("Reversion");

//cvShowImage("Reversion", reversionImage);

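// Converting to grayscale here causes a memory leak.

// That is because every image above was defined with the address-of operator:

// grayImage points at hsvImage's data, and hsvImage keeps changing,

// so gray keeps changing here as well.

// BGR image to grayscale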

//cvCvtColor(reversionImage, grayImage, cv::COLOR_BGR2GRAY);

//cvNamedWindow("Gray");

//cvShowImage("Gray", grayImage);

// Grayscale to binary image

cvThreshold(grayImage, binaryImage, 100, 255, CV_THRESH_BINARY);

//cvThreshold(reversionImage, binaryImage, 150, 255, CV_THRESH_BINARY);

//cvNamedWindow("Binary");

//cvShowImage("Binary", binaryImage);

// 5x5 square structuring element, 8-bit uchar, all ones

cv::Mat element5(5, 5, CV_8U, cv::Scalar(1));

cv::Mat closed,opened,temp,final,cimage;

//cv::vector<vector<cv::Point>> contours(10000);

temp = Mat(binaryImage);

// Morphological operations

cv::morphologyEx(temp, opened, cv::MORPH_OPEN, element5);

cv::morphologyEx(temp, closed, cv::MORPH_CLOSE, element5);

//cvNamedWindow("Opened");

//imshow("Opened", opened);

//cvNamedWindow("Closed");

//imshow("Closed", closed);

fillHole(closed, final);

cvNamedWindow("Final");

imshow("Final", final);

Canny(final, cimage, 150, 250);

//cvNamedWindow("Canny");

//imshow("Canny", cimage);

cv::vector<vector<cv::Point>> contours;

vector<Vec4i> hierarchy;

cv::findContours(cimage, contours, CV_RETR_EXTERNAL, CV_CHAIN_APPROX_NONE,Point());

//cv::findContours(cimage, contours, hierarchy, RETR_TREE, CHAIN_APPROX_SIMPLE, Point());

// Find the largest connected region

double maxArea = 0;

vector<cv::Point> maxContour;

for (size_t i = 0; i < contours.size(); i++)

{

double area = cv::contourArea(contours[i]);

if (area > maxArea)

{

maxArea = area;

maxContour = contours[i];

}

//cout << maxArea << endl;

}

//cout << maxArea << endl;

//cout << contours.size() << endl;

//cout << i << endl;

//cout << maxContour << endl;

// Convert the contour to a bounding rectangle

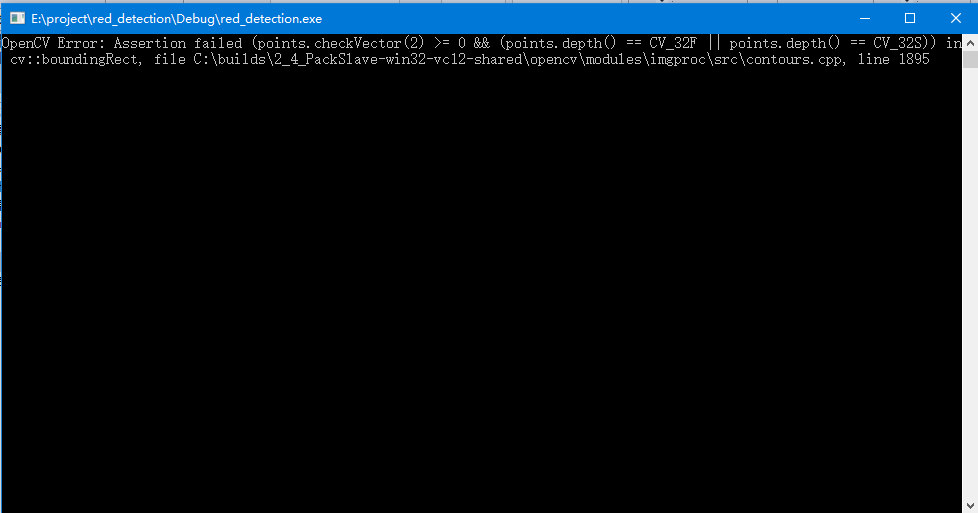

cv::Rect maxRect = cv::boundingRect(maxContour);

// Show the connected regions

cv::Mat result1, result2;

final.copyTo(result1);

final.copyTo(result2);

for (size_t i = 0; i < contours.size(); i++)

{

cv::Rect r = cv::boundingRect(contours[i]);

cv::rectangle(result1, r, cv::Scalar(255));

}

//cv::imshow("all regions", result1);

//cv::waitKey();

cv::rectangle(result2, maxRect, cv::Scalar(0, 255, 0), 3);

cv::imshow("largest region", result2);

if (maxArea>8000)

{

cv::imshow("largest region", result2);

}

/*

*/

waitKey(20);

// Increment the frame count.

num = num + 1;

}

return 0;

}
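The comment inside the pixel loop quotes red as roughly H in (0~8)∪(160,180) with S>80 and 220>V>50, while the `if` in the loop only tests H, and only against (0,8) and (178,180). As a rough sketch of what the full test from that comment could look like as a standalone function (plain C++ with no OpenCV dependency; `isRedHsv` is a hypothetical helper name, and treating H = 0 as red is an assumption):

```cpp
// Hypothetical helper: true when an HSV pixel falls inside the red range
// quoted in the comment (OpenCV 8-bit convention, H in [0, 180)).
bool isRedHsv(int h, int s, int v)
{
    // Red wraps around the hue circle, so two hue intervals are needed.
    const bool hueIsRed = (h >= 0 && h < 8) || (h > 160 && h < 180);

    // Low saturation looks gray, so require S above the reference value.
    const bool saturated = s > 80;

    // Too dark looks black, too bright looks white.
    const bool midBrightness = v > 50 && v < 220;

    return hueIsRed && saturated && midBrightness;
}
```

For example, `isRedHsv(170, 200, 128)` is true, while `isRedHsv(60, 200, 128)` (a green hue) and `isRedHsv(3, 20, 128)` (too gray) are false.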

The error seems to come from the boundingRect call, but then why does it work with the built-in camera? Any advice would be appreciated.
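One thing that may be worth checking is whether `maxContour` is still empty when `cv::boundingRect` is called. That can happen on any frame where `contours` is empty or every contour has area 0, because `maxArea` starts at 0 and the loop only assigns on `area > maxArea`. Whether this is what differs between the two cameras is only a guess. A minimal sketch of such a guard, written with plain stand-in types so it compiles without OpenCV (`Pt`, `Box`, `twiceArea` and `largestBox` are hypothetical names, not OpenCV API):

```cpp
#include <cstddef>
#include <cstdlib>
#include <vector>

struct Pt { int x; int y; };                 // stand-in for cv::Point
struct Box { int x; int y; int w; int h; };  // stand-in for cv::Rect

// Twice the absolute polygon area, computed with the shoelace formula.
long twiceArea(const std::vector<Pt>& c)
{
    long sum = 0;
    for (std::size_t i = 0; i < c.size(); ++i) {
        const Pt& a = c[i];
        const Pt& b = c[(i + 1) % c.size()];
        sum += static_cast<long>(a.x) * b.y - static_cast<long>(b.x) * a.y;
    }
    return std::labs(sum);
}

// Returns false when no contour has a non-zero area, so the caller can skip
// the frame instead of computing a box from an empty point set.
bool largestBox(const std::vector<std::vector<Pt> >& contours, Box& out)
{
    const std::vector<Pt>* best = nullptr;
    long bestArea = 0;
    for (std::size_t i = 0; i < contours.size(); ++i) {
        const long a = twiceArea(contours[i]);
        if (a > bestArea) { bestArea = a; best = &contours[i]; }
    }
    if (best == nullptr) return false;  // nothing usable in this frame

    int minX = (*best)[0].x, maxX = minX;
    int minY = (*best)[0].y, maxY = minY;
    for (std::size_t i = 1; i < best->size(); ++i) {
        const Pt& p = (*best)[i];
        if (p.x < minX) minX = p.x;
        if (p.x > maxX) maxX = p.x;
        if (p.y < minY) minY = p.y;
        if (p.y > maxY) maxY = p.y;
    }
    // Inclusive extent: a box covering x = 10..20 is 11 pixels wide.
    out = Box{minX, minY, maxX - minX + 1, maxY - minY + 1};
    return true;
}
```

In the loop above, the equivalent would be to call `cv::boundingRect(maxContour)` and draw the rectangle only when `maxContour` is not empty, and otherwise go straight on to the next frame.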