When I run ./bin/yarn-session.sh -n 2 -s 2 -jm 1024 -tm 1024 from the Flink directory, startup fails with the following error:

2018-12-16 16:01:42,879 ERROR org.apache.flink.yarn.cli.FlinkYarnSessionCli - Error while running the Flink Yarn session.

org.apache.flink.client.deployment.ClusterDeploymentException: Couldn't deploy Yarn session cluster

at org.apache.flink.yarn.AbstractYarnClusterDescriptor.deploySessionCluster(AbstractYarnClusterDescriptor.java:423)

at org.apache.flink.yarn.cli.FlinkYarnSessionCli.run(FlinkYarnSessionCli.java:607)

at org.apache.flink.yarn.cli.FlinkYarnSessionCli.lambda$main$2(FlinkYarnSessionCli.java:810)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1692)

at org.apache.flink.runtime.security.HadoopSecurityContext.runSecured(HadoopSecurityContext.java:41)

at org.apache.flink.yarn.cli.FlinkYarnSessionCli.main(FlinkYarnSessionCli.java:810)

Caused by: org.apache.flink.yarn.AbstractYarnClusterDescriptor$YarnDeploymentException: The YARN application unexpectedly switched to state FAILED during deployment.

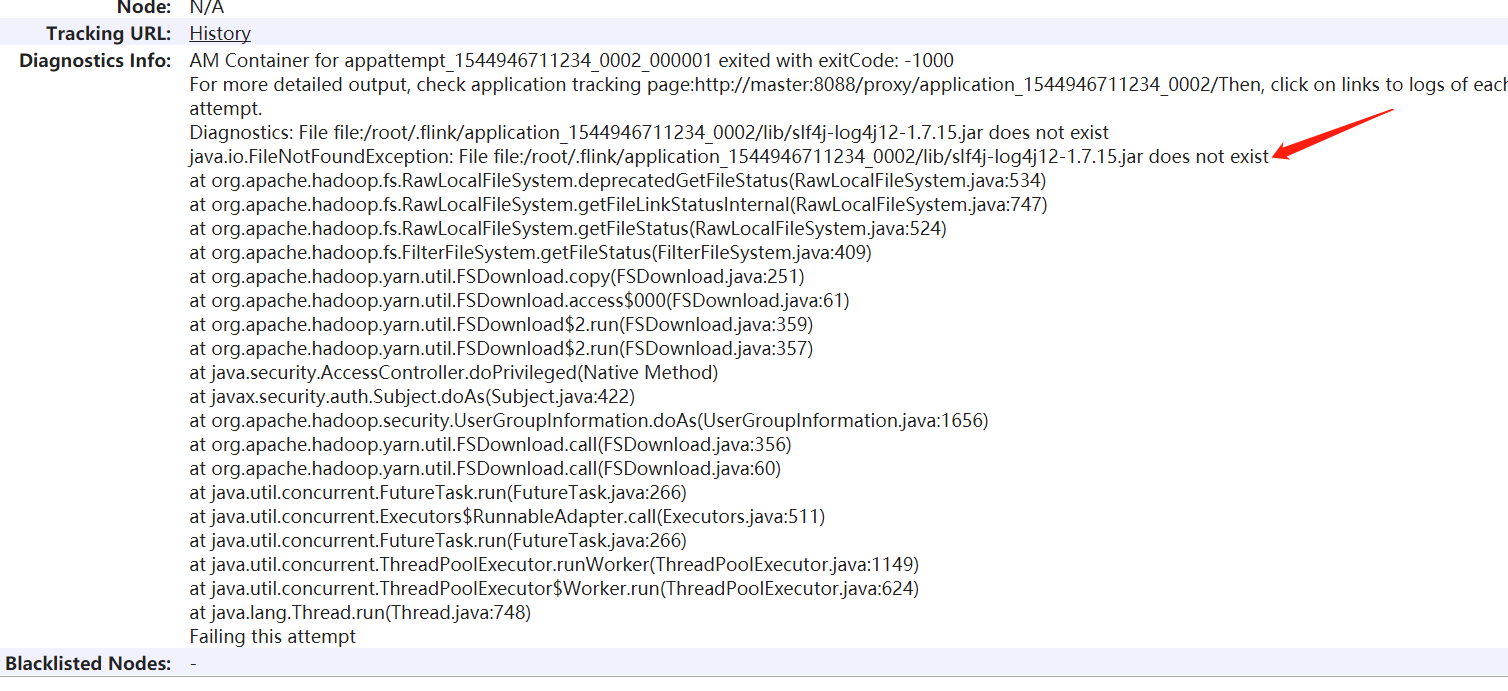

Diagnostics from YARN: Application application_1544946711234_0002 failed 1 times due to AM Container for appattempt_1544946711234_0002_000001 exited with exitCode: -1000

For more detailed output, check application tracking page:http://master:8088/proxy/application_1544946711234_0002/Then, click on links to logs of each attempt.

Diagnostics: File file:/root/.flink/application_1544946711234_0002/lib/slf4j-log4j12-1.7.15.jar does not exist

java.io.FileNotFoundException: File file:/root/.flink/application_1544946711234_0002/lib/slf4j-log4j12-1.7.15.jar does not exist

at org.apache.hadoop.fs.RawLocalFileSystem.deprecatedGetFileStatus(RawLocalFileSystem.java:534)

Flink fails when running on Hadoop YARN, reporting that the corresponding jar (slf4j) cannot be found.
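One possible reading of the diagnostics, offered as an assumption rather than a confirmed cause: the missing path begins with file:, which suggests the Flink client staged its jars on the local filesystem instead of HDFS. That can happen when the client does not pick up the Hadoop configuration (for example, HADOOP_CONF_DIR is unset), so fs.defaultFS falls back to the local filesystem. The sketch below only inspects the path copied from the log and, if the hdfs command happens to be installed, prints the configured default filesystem. The variable names are illustrative.

```shell
#!/usr/bin/env bash
# Path copied verbatim from the YARN diagnostics in the question.
staged="file:/root/.flink/application_1544946711234_0002/lib/slf4j-log4j12-1.7.15.jar"

# Everything before the first ':' is the URI scheme.
scheme="${staged%%:*}"

if [ "$scheme" = "file" ]; then
  echo "Jars were staged with scheme '$scheme' (local filesystem)."
  echo "HADOOP_CONF_DIR is: ${HADOOP_CONF_DIR:-<unset>}"
fi

# Only runs where the Hadoop CLI is available; prints the default filesystem
# that the Hadoop configuration on this machine resolves to.
if command -v hdfs >/dev/null 2>&1; then
  hdfs getconf -confKey fs.defaultFS
fi
```

If the printed default filesystem is file:/// or HADOOP_CONF_DIR is unset, pointing HADOOP_CONF_DIR at the cluster's configuration directory before rerunning yarn-session.sh would be the first thing to try.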
