<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
  <modelVersion>4.0.0</modelVersion>
  <groupId>org.example</groupId>
  <artifactId>test_mavenSpark</artifactId>
  <version>1.0-SNAPSHOT</version>
  <properties>
    <spark.version>3.0.2</spark.version>
    <scala.version>2.12</scala.version>
    <maven.compiler.source>16</maven.compiler.source>
    <maven.compiler.target>16</maven.compiler.target>
  </properties>
  <repositories>
    <repository>
      <id>nexus-aliyun</id>
      <name>Nexus aliyun</name>
      <url>http://maven.aliyun.com/nexus/content/groups/public</url>
    </repository>
  </repositories>

  <dependencies>
    <!-- https://mvnrepository.com/artifact/org.apache.spark/spark-core -->
    <dependency>
      <groupId>org.apache.spark</groupId>
      <!-- Resolves to spark-core_2.12 / 3.0.2 via the properties declared above -->
      <artifactId>spark-core_${scala.version}</artifactId>
      <version>${spark.version}</version>
    </dependency>
  </dependencies>

  <build>
    <plugins>
      <plugin>
        <artifactId>maven-assembly-plugin</artifactId>
        <version>2.3</version>
        <configuration>
          <classifier>dist</classifier>
          <appendAssemblyId>true</appendAssemblyId>
          <descriptorRefs>
            <descriptorRef>jar-with-dependencies</descriptorRef>
          </descriptorRefs>
        </configuration>
        <executions>
          <execution>
            <id>make-assembly</id>
            <phase>package</phase>
            <goals>
              <goal>single</goal>
            </goals>
          </execution>
        </executions>
      </plugin>
    </plugins>
  </build>
</project>
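The POM compiles for Java 16 (`maven.compiler.source` / `maven.compiler.target`), while the Spark 3.0 documentation states that Spark runs on Java 8/11. The compiler level only sets the class-file target; what matters for the error below is the JVM that actually runs the job. As a hedged sketch, the hypothetical class `SparkJavaVersionGuard` (not part of Spark or Maven) parses the runtime `java.version` property and compares it against that assumed supported set, so the mismatch can be reported in plain words before Spark's static initializers run:

```java
import java.util.Arrays;
import java.util.List;

/**
 * Hypothetical check (not part of Spark): compares the running JVM's feature
 * version with the Java versions assumed to be supported by Spark 3.0.x.
 */
public class SparkJavaVersionGuard {

    // Assumption taken from the Spark 3.0 docs ("Spark runs on Java 8/11").
    static final List<Integer> SUPPORTED_FEATURE_VERSIONS = Arrays.asList(8, 11);

    /** "1.8.0_292" -> 8, "11.0.11" -> 11, "16" -> 16, "17-ea" -> 17. */
    public static int featureVersion(String javaVersion) {
        String[] parts = javaVersion.split("[._\\-+]");
        int first = Integer.parseInt(parts[0]);
        // Java 8 and earlier report "1.x"; Java 9+ put the feature version first.
        return first == 1 ? Integer.parseInt(parts[1]) : first;
    }

    public static boolean isSupported(String javaVersion) {
        return SUPPORTED_FEATURE_VERSIONS.contains(featureVersion(javaVersion));
    }

    public static void main(String[] args) {
        String running = System.getProperty("java.version");
        if (isSupported(running)) {
            System.out.println("Java " + running + " is in the assumed supported set.");
        } else {
            System.err.println("Java " + running + " is not in the assumed supported set "
                    + SUPPORTED_FEATURE_VERSIONS + " for Spark 3.0.x; try a Java 8 or 11 JDK.");
        }
    }
}
```

The check only prints a message rather than calling `System.exit`, so it can be dropped into an existing `main` without changing control flow.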

Can anyone help? Running `WordCount` fails with the following error:

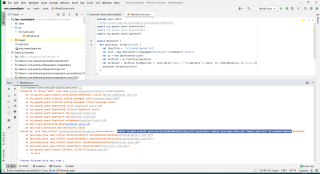

Exception in thread "main" java.lang.ExceptionInInitializerError
	at org.apache.spark.unsafe.array.ByteArrayMethods.<clinit>(ByteArrayMethods.java:54)
	at org.apache.spark.internal.config.package$.<init>(package.scala:1006)
	at org.apache.spark.internal.config.package$.<clinit>(package.scala)
	at org.apache.spark.SparkConf$.<init>(SparkConf.scala:639)
	at org.apache.spark.SparkConf$.<clinit>(SparkConf.scala)
	at org.apache.spark.SparkConf.set(SparkConf.scala:94)
	at org.apache.spark.SparkConf.set(SparkConf.scala:83)
	at org.apache.spark.SparkConf.setAppName(SparkConf.scala:120)
	at main.scala.WordCount$.main(WordCount.scala:10)
	at main.scala.WordCount.main(WordCount.scala)
Caused by: java.lang.reflect.InaccessibleObjectException: Unable to make private java.nio.DirectByteBuffer(long,int) accessible: module java.base does not "opens java.nio" to unnamed module @149e0f5d
	at java.base/java.lang.reflect.AccessibleObject.checkCanSetAccessible(AccessibleObject.java:357)
	at java.base/java.lang.reflect.AccessibleObject.checkCanSetAccessible(AccessibleObject.java:297)
	at java.base/java.lang.reflect.Constructor.checkCanSetAccessible(Constructor.java:188)
	at java.base/java.lang.reflect.Constructor.setAccessible(Constructor.java:181)
	at org.apache.spark.unsafe.Platform.<clinit>(Platform.java:56)
	... 10 more
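The `Caused by` line shows `org.apache.spark.unsafe.Platform` calling `setAccessible` on the private `java.nio.DirectByteBuffer(long,int)` constructor, which the JVM refuses because `java.base` does not open `java.nio` to the unnamed module. A minimal reproducer, assuming nothing about Spark beyond what the trace shows, performs the same reflective call on its own; `DirectBufferAccessCheck` and its `Result` values are illustrative names, not Spark or JDK APIs:

```java
import java.lang.reflect.Constructor;

/** Hypothetical reproducer: repeats the reflective call from Spark's Platform.<clinit>. */
public class DirectBufferAccessCheck {

    /** Outcome of trying to open the constructor named in the stack trace. */
    public enum Result { ACCESSIBLE, INACCESSIBLE, CONSTRUCTOR_NOT_FOUND }

    public static Result check() {
        final Constructor<?> ctor;
        try {
            Class<?> cls = Class.forName("java.nio.DirectByteBuffer");
            ctor = cls.getDeclaredConstructor(long.class, int.class);
        } catch (ClassNotFoundException | NoSuchMethodException e) {
            // The private constructor is a JDK internal; its signature may differ between releases.
            return Result.CONSTRUCTOR_NOT_FOUND;
        }
        try {
            // JDK 16 denies this by default and JDK 17+ always denies it unless java.nio is
            // opened to the unnamed module, e.g. --add-opens java.base/java.nio=ALL-UNNAMED.
            ctor.setAccessible(true);
            return Result.ACCESSIBLE;
        } catch (RuntimeException e) {
            // On JDK 9+ this is InaccessibleObjectException; catching the RuntimeException
            // supertype keeps the class compilable on JDK 8 too.
            return Result.INACCESSIBLE;
        }
    }

    public static void main(String[] args) {
        System.out.println("java.version = " + System.getProperty("java.version"));
        System.out.println("DirectByteBuffer(long,int): " + check());
    }
}
```

Running it once plainly and once as `java --add-opens java.base/java.nio=ALL-UNNAMED DirectBufferAccessCheck.java` (the single-file launcher needs Java 11+) should show whether that option changes the outcome on a given JDK. It only exercises the one reflective call in this trace, so other parts of Spark 3.0.2 may still need further options on Java 16.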