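This is my main.py file, the interface for the video assessment feature. It contains video playback, video evaluation, a few buttons, and the basic window title.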

import numpy as np
import tkinter as tk
import os
import skvideo.io
from PIL import Image, ImageTk
import cv2
import matplotlib  # imported explicitly so matplotlib.use() does not depend on the star import
from matplotlib.backends.backend_tkagg import FigureCanvasTkAgg
from tkinter import filedialog
from torchvision import transforms
from VSFA import VSFA
from CNNfeatures import get_features
from pylab import *
import torch
from argparse import ArgumentParser
import time
import gc
from demo import comp

matplotlib.use('TkAgg')

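# Open a video

def open_file():
    videoPath = filedialog.askopenfilename()
    if not videoPath:
        return  # the dialog was cancelled, so there is nothing to play
    video = cv2.VideoCapture(videoPath)
    fps = video.get(cv2.CAP_PROP_FPS)  # property id 5
    waitTime = 1000 / fps  # delay between frames, in milliseconds
    videoTime = video.get(cv2.CAP_PROP_FRAME_COUNT) / fps  # duration in seconds (property id 7)
    while video.isOpened():
        ret, readyFrame = video.read()
        if ret:
            videoFrame = cv2.cvtColor(readyFrame, cv2.COLOR_BGR2RGBA)
            newImage = Image.fromarray(videoFrame).resize((520, 320))
            newCover = ImageTk.PhotoImage(image=newImage)
            videoLable.configure(image=newCover)
            videoLable.image = newCover  # keep a reference so the image is not garbage-collected
            videoLable.update()
            cv2.waitKey(int(waitTime))
        else:
            break
    video.release()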

window = tk.Tk()
window.title('视频质量评估系统')  # "Video Quality Assessment System"
window.geometry('1080x720')

# Set the video position
videoLable = tk.Label(window, width=520, height=320, bd=0)
videoLable.place(x=50, y=200)

# Title text
l1 = tk.Label(window, text='视频质量评估系统', bg='white', font=('Arial', 20), width=15, height=2)
l1.place(x=400, y=50)

# Button setup
b1 = tk.Button(window, text='打开视频', width=15, height=2, command=open_file)  # "Open video"
b1.place(x=200, y=620)

def get_comp():
    # comp() returns three display strings: video length, predicted quality, run time
    x, y, z = comp()
    c1 = tk.Label(window, text=x, font=('imei', 20), fg='blue', width=50, height=2)
    c1.place(x=820, y=420)
    c2 = tk.Label(window, text=y, font=('imei', 20, 'italic'), fg='green', width=50, height=2)
    c2.place(x=820, y=520)
    c3 = tk.Label(window, text=z, font=('imei', 20, 'underline'), fg='red', width=50, height=2)
    c3.place(x=820, y=620)

b2 = tk.Button(window, text='测试数据', width=15, height=2, command=get_comp)  # "Test data"
b2.place(x=400, y=620)

window.mainloop()

The demo.py file holds the evaluation algorithm. It outputs three results: the video length, the video quality, and the algorithm's run time.

import torch
from torchvision import transforms
import skvideo.io
from PIL import Image
import numpy as np
from VSFA import VSFA
from CNNfeatures import get_features
from argparse import ArgumentParser
from matplotlib.backends.backend_tkagg import FigureCanvasTkAgg
import tkinter as tk
from pylab import *
import os
import time
import gc

gc.collect()
torch.cuda.empty_cache()  # release cached GPU memory

# if torch.cuda.device_count() > 1:
#     model = torch.nn.DataParallel(model, device_ids=[1,2,3,4,5,6,7,8])

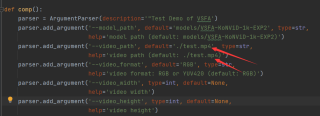

def comp():
    parser = ArgumentParser(description='Test Demo of VSFA')
    parser.add_argument('--model_path', default='models/VSFA-KoNViD-1k-EXP2', type=str,
                        help='model path (default: models/VSFA-KoNViD-1k-EXP2)')
    parser.add_argument('--video_path', default='./test.mp4', type=str,
                        help='video path (default: ./test.mp4)')
    parser.add_argument('--video_format', default='RGB', type=str,
                        help='video format: RGB or YUV420 (default: RGB)')
    parser.add_argument('--video_width', type=int, default=None,
                        help='video width')
    parser.add_argument('--video_height', type=int, default=None,
                        help='video height')
    parser.add_argument('--frame_batch_size', type=int, default=32,
                        help='frame batch size for feature extraction (default: 32)')
    # An empty argument list means the defaults above are always used
    args = parser.parse_args(args=[])

    device = torch.device("cpu")
    start = time.time()

    # data preparation
    assert args.video_format == 'YUV420' or args.video_format == 'RGB'
    if args.video_format == 'YUV420':
        video_data = skvideo.io.vread(args.video_path, args.video_height, args.video_width,
                                      inputdict={'-pix_fmt': 'yuvj420p'})
    else:
        video_data = skvideo.io.vread(args.video_path)

    video_length = video_data.shape[0]
    video_channel = video_data.shape[3]
    video_height = video_data.shape[1]
    video_width = video_data.shape[2]
    transformed_video = torch.zeros([video_length, video_channel, video_height, video_width])
    transform = transforms.Compose([
        transforms.ToTensor(),
        transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])
    ])
    for frame_idx in range(video_length):
        frame = video_data[frame_idx]
        frame = Image.fromarray(frame)
        frame = transform(frame)
        transformed_video[frame_idx] = frame
        # print(frame_idx)
    x = f"Video length:{transformed_video.shape[0]}"

    # feature extraction
    features = get_features(transformed_video, frame_batch_size=args.frame_batch_size, device=device)
    features = torch.unsqueeze(features, 0)  # batch size 1

    # quality prediction using VSFA
    model = VSFA()
    # map_location keeps weights that were saved on a GPU loadable on the CPU
    model.load_state_dict(torch.load(args.model_path, map_location=device))
    model.to(device)
    model.eval()
    with torch.no_grad():
        input_length = features.shape[1] * torch.ones(1, 1)
        outputs = model(features, input_length)
        y_pred = outputs[0][0].to('cpu').numpy()
        y = f"Predicted quality:{y_pred}"

    end = time.time()
    z = f"time:{end - start}"
    return x, y, z

# window = tk.Tk()

# # Set up the line plot

# f = Figure(figsize=(8,8), dpi=50)

# a = f.add_subplot(111)

# x = format(end-start)

# y = format(y_pred)

# a.plot(x,y)

# # Show the drawn figure in the tkinter window

# canvas =FigureCanvasTkAgg(f, master=window)

# canvas.draw()

# canvas.get_tk_widget().place(x=620,y=180)

# window.mainloop()
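One possible way to make the video path configurable is to let the argument-building code accept an optional path and hand it to `parse_args`. The sketch below is an assumption, not part of the original demo.py: `build_args` and its `video_path` parameter are hypothetical names, and only the `--model_path` and `--video_path` options are reproduced for brevity.

```python
from argparse import ArgumentParser


def build_args(video_path=None):
    """Return parsed options, optionally overriding --video_path.

    Hypothetical helper: the original comp() builds its parser inline.
    """
    parser = ArgumentParser(description='Test Demo of VSFA')
    parser.add_argument('--model_path', default='models/VSFA-KoNViD-1k-EXP2', type=str)
    parser.add_argument('--video_path', default='./test.mp4', type=str)
    # Pass an explicit argument list so that sys.argv is never consulted.
    # The "--name=value" form also copes with paths that start with a dash.
    cli = [] if video_path is None else [f'--video_path={video_path}']
    return parser.parse_args(args=cli)


default_args = build_args()
custom_args = build_args('/videos/sample.mp4')
print(default_args.video_path)
print(custom_args.video_path)
```

With a helper along these lines, `comp` could take a `video_path` parameter and call `build_args(video_path)` where it currently calls `parser.parse_args(args=[])`.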

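The current problem: to evaluate a different video, I have to change the video file name by hand in demo.py. I would like the "Open video" function in the interface to switch the evaluated video automatically.

Note: the video file name is test.mp4.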
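A minimal sketch of one way to wire this up: remember the path chosen in `open_file` and pass it on when the evaluation button is pressed. Everything named here is hypothetical (`SelectedVideo`, `make_callbacks`), and it assumes `comp` is changed to accept the video path as an argument, which it does not do in the original. The GUI pieces are replaced by stand-in functions so the sketch runs without a display.

```python
class SelectedVideo:
    """Remembers the most recently opened video path (hypothetical helper)."""

    def __init__(self, default='./test.mp4'):
        self.path = default

    def update(self, chosen):
        # filedialog.askopenfilename() returns an empty string on cancel,
        # in which case the previous path is kept.
        if chosen:
            self.path = chosen
        return self.path


def make_callbacks(selection, ask_path, play, evaluate, show):
    """Build the two button callbacks around a shared SelectedVideo."""

    def open_file():
        chosen = ask_path()
        if not chosen:
            return  # dialog cancelled: nothing to play
        play(selection.update(chosen))

    def get_comp():
        # Evaluate whichever video was opened last.
        show(evaluate(selection.path))

    return open_file, get_comp


# Stand-ins for the real GUI pieces so the sketch runs without a display.
played, shown = [], []
selection = SelectedVideo()
open_file, get_comp = make_callbacks(
    selection,
    ask_path=lambda: '/videos/sample.mp4',      # real code: filedialog.askopenfilename
    play=played.append,                         # real code: the cv2 playback loop
    evaluate=lambda path: f'evaluated {path}',  # real code: comp(path), an assumed signature
    show=shown.append,                          # real code: update the tk.Label widgets
)
open_file()
get_comp()
print(shown)
```

In main.py the two returned functions would be used as the `command` of the "Open video" and "Test data" buttons, so both buttons share the same stored path.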