Here is my code:

import urllib.request
from bs4 import BeautifulSoup
import re
import codecs
import lxml
import requests

headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/63.0.3239.132 Safari/537.36 QIHU 360SE'
}

# Fetch the table-of-contents page for the novel
url = "https://www.23apex.com/xiaoshuo/99545.html"
response = requests.get(url, headers=headers)
soup = BeautifulSoup(response.content, 'lxml')

f = codecs.open("斗罗大陆外传史莱克天团1111111111.txt", "wb", "utf-8")

# Chapter links all contain this path; compile the pattern once, outside the loop
r2 = re.compile('/xiaoshuo/99545/', re.I)

for link in soup.find_all('a'):
    x = link.get('href')
    # Skip <a> tags without an href (link.get returns None for them)
    if x and r2.search(x):
        print(x)
        print(link.text)
        url = 'https://www.23apex.com' + x
        print(url)
        print("Starting crawl....")
        response = requests.get(url, headers=headers)
        print("Parsing page....")
        soup = BeautifulSoup(response.content, 'lxml')
        print("Parsing complete!")
        # The chapter text lives in the element with id="content"
        a = soup.find(id='content')
        b = a.get_text()
        f.write(link.text)
        f.write('\n')
        f.write(b)
        f.write('\n\n')
        print("Chapter crawl complete")

f.close()

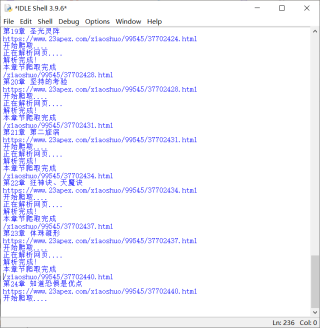

After crawling a few pages, the script hangs and appears to be frozen.

I am using Python 3.9.6.
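
One possible cause, which the post does not confirm, is that `requests.get` is called without a `timeout`. By default `requests` waits indefinitely for a server that stops responding, which looks exactly like a frozen script. The sketch below is one way to bound each request and retry a few times. The helper name `fetch_with_retry` and its parameters (`retries`, `backoff`, `sleep`) are hypothetical and not part of the original code, and `session` is only assumed to behave like a `requests.Session`.

```python
import time


def fetch_with_retry(session, url, headers=None, timeout=(5, 15),
                     retries=3, backoff=1.0, sleep=time.sleep):
    """Fetch `url`, retrying on network errors instead of waiting forever.

    Hypothetical helper: `session` is assumed to expose
    get(url, headers=..., timeout=...) like requests.Session does.
    """
    last_error = None
    for attempt in range(1, retries + 1):
        try:
            # timeout=(connect, read) in seconds; without it, requests
            # can block indefinitely on a stalled connection.
            return session.get(url, headers=headers, timeout=timeout)
        except OSError as exc:
            # requests.exceptions.RequestException (timeouts, connection
            # errors) is a subclass of OSError, so it is caught here.
            last_error = exc
            if attempt < retries:
                # Wait a little longer after each failed attempt.
                sleep(backoff * attempt)
    # Every attempt failed: surface the last error to the caller.
    raise last_error
```

With this helper, each `requests.get(url, headers=headers)` call in the script could become `fetch_with_retry(session, url, headers=headers)`, where `session = requests.Session()` is created once before the loop. If the site throttles rapid requests, a short pause between chapters may also help, though the post gives no evidence either way.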