Hoping an expert can help with this. Thank you very much!

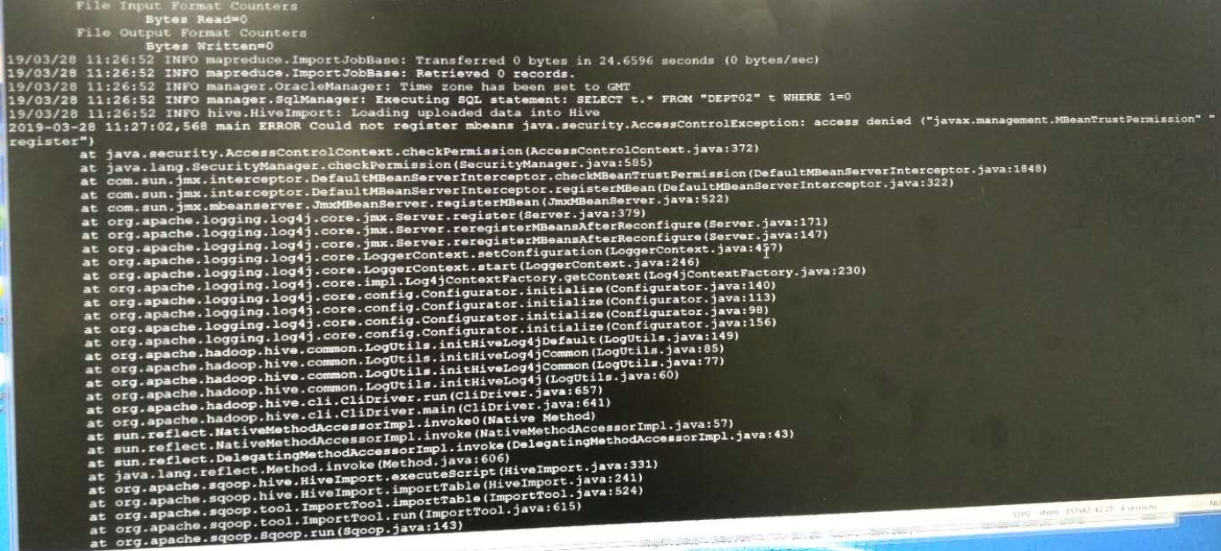

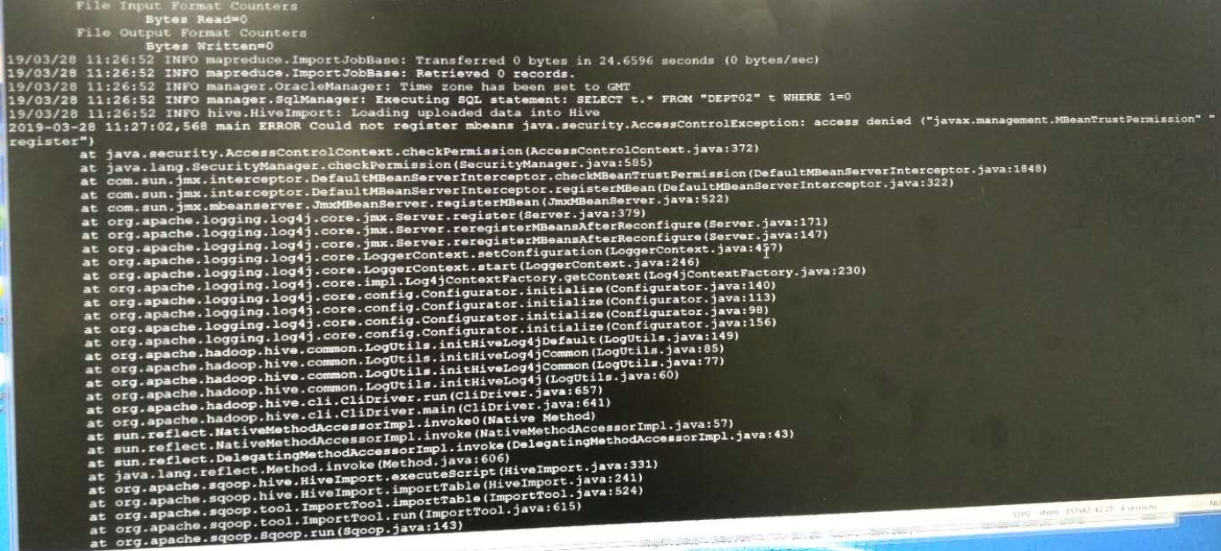

1. The screenshots below show the error message:

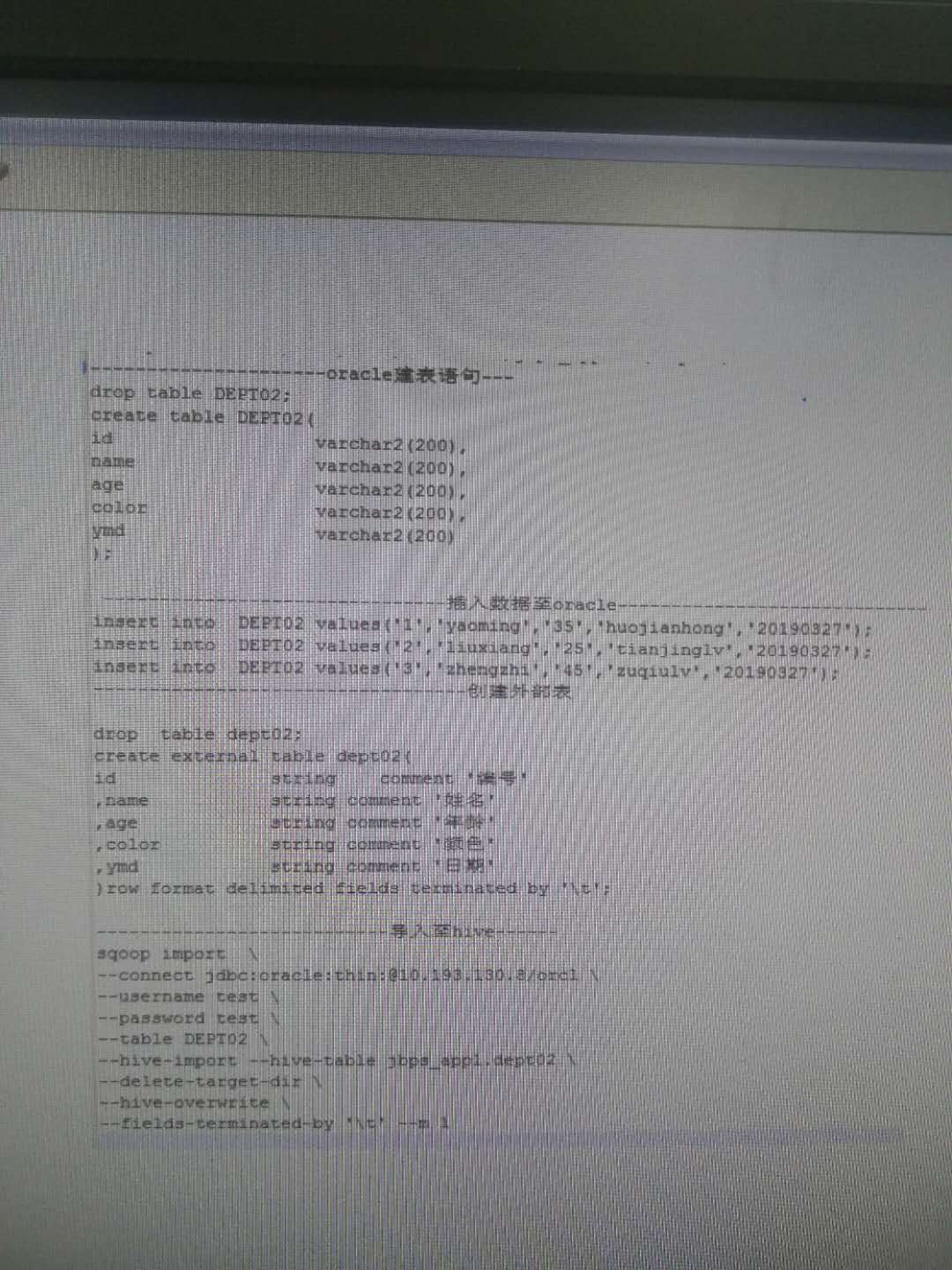

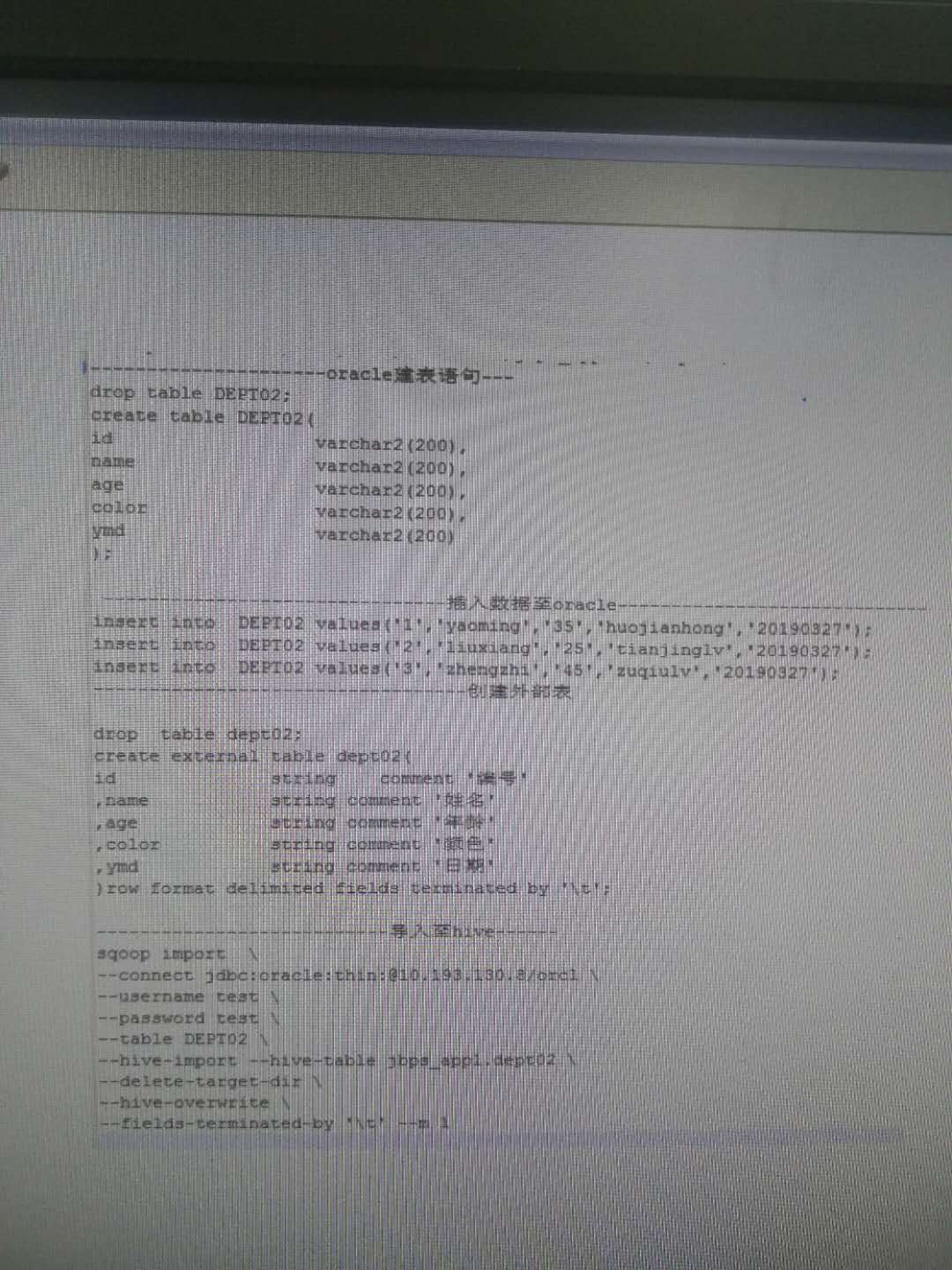

2. Below are my CREATE TABLE statement, test data, and Sqoop command.

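Judging from the error message, the import refers to a table that does not exist: hive_test. Check that the table name in your CREATE TABLE statement matches the one in the import command exactly, and rule out typos in either place.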
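One way to confirm whether the table exists before rerunning the import is to ask Hive directly. The sketch below assumes the `hive` CLI is on your PATH and that the table is expected in the test database with the name hive_test, as described in this thread; adjust both names if your setup differs.

```shell
#!/usr/bin/env bash
# Names assumed from this thread: database "test", table "hive_test".
DB="test"
TABLE="hive_test"

# HiveQL statement that lists tables in DB whose name matches TABLE.
QUERY="SHOW TABLES IN ${DB} LIKE '${TABLE}';"
echo "${QUERY}"

# Run the query only when the hive CLI is available on this machine.
# If the table exists, Hive prints its name; otherwise the result is empty.
if command -v hive >/dev/null 2>&1; then
  hive -e "${QUERY}"
fi
```

An empty result would suggest that the table was created in a different database or under a different name than the import expects.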

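Also, when importing with Sqoop, it is best to name the target database and table explicitly so that default values do not cause errors. For example, your CREATE TABLE statement puts the table in the test database, so you can add --hive-database test to the import command to load the data into that database.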

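Finally, if the column count and data types match, consider using the --hive-import option. Sqoop then creates the target Hive table if it does not already exist and loads the data into it. If the target table already exists, you can add --hive-overwrite to replace its current data.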
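Putting these suggestions together, an import command might look roughly like the sketch below. The JDBC URL, user name, and source table name are hypothetical placeholders, since the original command is only visible in the screenshots; only the test database and the hive_test table come from this thread. The script prints the command for review instead of running it.

```shell
#!/usr/bin/env bash
# Hypothetical connection details: replace all three with your own values.
JDBC_URL="jdbc:mysql://localhost:3306/source_db"
DB_USER="your_user"
SOURCE_TABLE="source_table"

# Assemble the command as an array so each argument stays intact.
# -P prompts for the password; -m 1 runs a single map task.
SQOOP_CMD=(
  sqoop import
  --connect "${JDBC_URL}"
  --username "${DB_USER}"
  -P
  --table "${SOURCE_TABLE}"
  --hive-import
  --hive-database test
  --hive-table hive_test
  --hive-overwrite
  -m 1
)

# Dry run: print the assembled command.
echo "${SQOOP_CMD[*]}"

# Uncomment to execute once the placeholder values are correct:
# "${SQOOP_CMD[@]}"
```

Drop --hive-overwrite if you want to append to the existing table data rather than replace it.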