Question - Image classification

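I'm new to deep learning. While following a course on water-color image classification, I wanted to try making the predictions with a neural network, but the loss is always NaN, and the accuracy is nonzero only in the first epoch.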

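There are 4 water-quality classes and 197 samples in total, and all images have already been cropped and standardized. (I was only curious and wanted to try it out, so I did no data augmentation; each class has about 50 samples.)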

Code I ran

from water_data_preocess import x_CNN, y

from sklearn.model_selection import train_test_split

import tensorflow as tf

x_train, x_test, y_train, y_test = train_test_split(x_CNN, y, train_size=0.8, random_state=23)

x_max = x_train.max()

x_min = x_train.min()

def minmaxscaler(data):

    # Scale with the training-set min and max so train and test share one mapping.
    data = (data - x_min) / (x_max - x_min)

    return data

x_train = tf.constant(minmaxscaler(x_train), dtype=tf.float32)

x_test = tf.constant(minmaxscaler(x_test), dtype=tf.float32)

y_train = tf.constant(y_train, dtype=tf.float32)

y_test = tf.constant(y_test, dtype=tf.float32)

tf.keras.backend.clear_session()

model = tf.keras.models.Sequential()

model.add(tf.keras.layers.Conv2D(filters=32, kernel_size=(3, 3), input_shape=(100, 100, 3), activation='relu'))

model.add(tf.keras.layers.MaxPool2D(pool_size=(2, 2)))

model.add(tf.keras.layers.Conv2D(filters=64, kernel_size=(3, 3), activation='relu'))

model.add(tf.keras.layers.MaxPool2D(pool_size=(2, 2)))

model.add(tf.keras.layers.BatchNormalization())

model.add(tf.keras.layers.Flatten())

model.add(tf.keras.layers.Dense(units=32, activation='relu'))

model.add(tf.keras.layers.Dense(units=4, activation=tf.keras.activations.softmax))

model.compile(optimizer=tf.keras.optimizers.Adam(),

              loss=tf.keras.losses.SparseCategoricalCrossentropy(),

              metrics=[tf.keras.metrics.SparseCategoricalAccuracy()])

model.summary()

model.fit(x_train, y_train, epochs=20, batch_size=32)

model.evaluate(x_test, y_test)
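
The `minmaxscaler` step above divides by the range of the training data. NumPy's `max()` and `min()` return NaN as soon as any value is NaN, so a single bad pixel would turn every scaled value into NaN. A minimal sketch of a scaler that fails loudly in that case, assuming the inputs are NumPy arrays; the names `fit_minmax` and `apply_minmax` and the small arrays are hypothetical and only for illustration:

```python
import numpy as np

def fit_minmax(train):
    """Compute min and max on the training set, failing loudly on bad statistics."""
    lo, hi = float(np.min(train)), float(np.max(train))
    if not (np.isfinite(lo) and np.isfinite(hi)):
        raise ValueError(f"non-finite statistics: min={lo}, max={hi}")
    if hi == lo:
        raise ValueError("max equals min; scaling would divide by zero")
    return lo, hi

def apply_minmax(data, lo, hi):
    """Scale data with statistics that were computed on the training set."""
    return (np.asarray(data, dtype=np.float32) - lo) / (hi - lo)

# Illustrative data only, standing in for x_train and x_test.
train = np.array([[0.0, 5.0], [10.0, 2.5]])
test = np.array([[5.0, 20.0]])

lo, hi = fit_minmax(train)
scaled_train = apply_minmax(train, lo, hi)
scaled_test = apply_minmax(test, lo, hi)  # may leave [0, 1] if test exceeds the train range
print(scaled_train.min(), scaled_train.max())
```

If `fit_minmax(x_train)` raised here, the problem would sit in the data pipeline rather than in the model.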

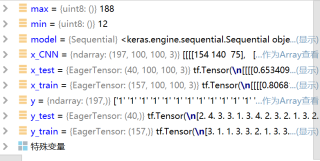

The processed dataset looks like this:

These are the processed images:

I checked, and there are no bad samples.
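
A check for bad samples can also be done numerically, since a NaN or Inf pixel is not visible when looking at the images. The sketch below assumes the images are a NumPy array of shape (n_samples, height, width, channels), as `x_CNN` appears to be; `find_non_finite_samples` is a hypothetical helper name and the demo array is illustrative data only:

```python
import numpy as np

def find_non_finite_samples(images):
    """Return indices of samples that contain at least one NaN or Inf value."""
    arr = np.asarray(images, dtype=np.float64)
    flat = arr.reshape(arr.shape[0], -1)        # one row per sample
    bad = ~np.isfinite(flat).all(axis=1)        # True where any value is NaN/Inf
    return np.flatnonzero(bad).tolist()

# Illustrative data only: three tiny "images", the second has a NaN pixel.
demo = np.zeros((3, 2, 2, 3))
demo[1, 0, 0, 0] = np.nan

bad_idx = find_non_finite_samples(demo)
print(bad_idx)
```

Calling it as `find_non_finite_samples(x_CNN)` before the train/test split would show whether the standardization step left any non-finite values behind.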

Output and error messages

These are the results of the first five epochs:

Epoch 1/20

5/5 [] - 6s 35ms/step - loss: nan - sparse_categorical_accuracy: 0.0318

Epoch 2/20

5/5 [] - 0s 7ms/step - loss: nan - sparse_categorical_accuracy: 0.0000e+00

Epoch 3/20

5/5 [] - 0s 8ms/step - loss: nan - sparse_categorical_accuracy: 0.0000e+00

Epoch 4/20

5/5 [] - 0s 7ms/step - loss: nan - sparse_categorical_accuracy: 0.0000e+00

Epoch 5/20

5/5 [] - 0s 8ms/step - loss: nan - sparse_categorical_accuracy: 0.0000e+00

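I have read many articles on fixing vanishing or exploding loss.

I tried setting the learning rate to 0, and the loss is still NaN in the first epoch. Setting batch_size to 1 gives the same result. I also tried L2 regularization, modifying the model, and so on. This model is based on the CNN I used for MNIST recognition. The same model produces results there, but not here.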
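
Since the same architecture runs on MNIST, where the labels are coded 0 to 9, one difference that may be worth ruling out is the label coding. `SparseCategoricalCrossentropy` expects integer class indices from 0 to num_classes - 1, which means 0 to 3 for the 4-unit output layer here. The underlying TensorFlow op is documented to return NaN for out-of-range labels on GPU and to raise an error on CPU. A minimal sketch of such a check, assuming `y` can be converted to a NumPy array; `check_sparse_labels` is a hypothetical helper and the example labels are made up:

```python
import numpy as np

def check_sparse_labels(labels, num_classes):
    """Summarize whether labels are valid sparse class indices in [0, num_classes)."""
    arr = np.asarray(labels, dtype=np.float64).ravel()
    finite = np.isfinite(arr)
    is_integer = finite & (arr == np.round(arr))
    in_range = is_integer & (arr >= 0) & (arr < num_classes)
    return {
        "unique": np.unique(arr[finite]).tolist(),
        "n_non_finite": int((~finite).sum()),
        "n_non_integer": int((finite & ~is_integer).sum()),
        "n_out_of_range": int((is_integer & ~in_range).sum()),
    }

# Made-up labels coded 1..4 instead of 0..3, for illustration only.
report = check_sparse_labels([1, 2, 3, 4, 4], num_classes=4)
print(report)
```

If `check_sparse_labels(y, num_classes=4)` reported out-of-range values, remapping the labels to 0..3 before building `y_train` and `y_test` would be the thing to try. Whether that is what happens in this dataset is not something the post itself confirms.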