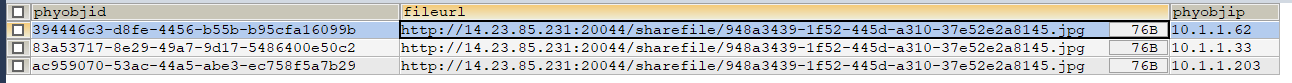

SELECT btp.phy_obj_id AS phyobjid, GROUP_CONCAT(btf.file_url SEPARATOR ',') AS fileurl, btp.phy_obj_ip AS phyobjip
FROM b_task_phy btp
LEFT JOIN b_task_file btf ON btp.file_id = btf.id
WHERE btp.task_id = 9 AND btp.STATUS IN (0,3)
GROUP BY btp.phy_obj_id;

The SQL above is a join query. It returns a List< Map >.

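(A quick side question: how can I get List< Map > to display with no space between the angle bracket and Map? If you don't see what I mean, try typing it into the question box.)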

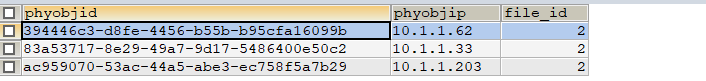

Er, back to the topic. For various reasons I can no longer use a join and have to query one table at a time, so:

SELECT btp.phy_obj_id AS phyobjid, btp.phy_obj_ip AS phyobjip, btp.`file_id`
FROM b_task_phy btp
WHERE btp.task_id = 9 AND btp.STATUS IN (0,3)
GROUP BY btp.phy_obj_id;

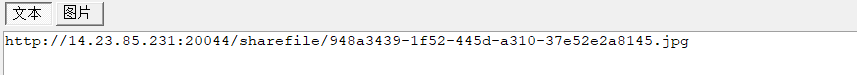

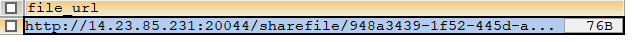

and

SELECT GROUP_CONCAT(file_url SEPARATOR ',') AS file_url
FROM b_task_file
WHERE id IN ( file_id );

The values inside IN are the set of file_id values returned by the previous query.

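That covers the setup. My question: how do I process the List< Map > results of these single-table queries on the back end (Java) so that I end up with the same result as the join query? Or is there a better approach?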
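One possible way to do the merge in Java is sketched below. It is not a definitive answer and rests on two assumptions that differ from the queries as posted. First, the b_task_phy query is run without GROUP BY, so every (phy_obj_id, file_id) pair comes back as its own row; with GROUP BY only one file_id per object would be returned. Second, the b_task_file query selects id and file_url as plain columns instead of GROUP_CONCAT, so each URL can be matched to its id. The class name TaskFileMerger and the method merge are hypothetical names used only for illustration.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

public class TaskFileMerger {

    /**
     * phyRows:  rows of b_task_phy with keys phyobjid, phyobjip, file_id
     *           (assumed: queried WITHOUT GROUP BY, one row per file).
     * fileRows: rows of b_task_file with keys id, file_url
     *           (assumed: queried WITHOUT GROUP_CONCAT).
     * Returns one map per phyobjid with keys phyobjid, fileurl, phyobjip.
     */
    public static List<Map<String, Object>> merge(List<Map<String, Object>> phyRows,
                                                  List<Map<String, Object>> fileRows) {
        // Index file URLs by id. Ids are compared as strings so that
        // Integer and Long values coming from the driver still match.
        Map<String, String> urlById = new HashMap<>();
        for (Map<String, Object> file : fileRows) {
            urlById.put(String.valueOf(file.get("id")), (String) file.get("file_url"));
        }

        // LinkedHashMap keeps the groups in first-seen order.
        Map<Object, Map<String, Object>> grouped = new LinkedHashMap<>();
        for (Map<String, Object> row : phyRows) {
            Object key = row.get("phyobjid");
            Map<String, Object> out = grouped.get(key);
            if (out == null) {
                out = new LinkedHashMap<>();
                out.put("phyobjid", key);
                out.put("fileurl", null); // stays null when no file matches, like GROUP_CONCAT
                out.put("phyobjip", row.get("phyobjip"));
                grouped.put(key, out);
            }
            String url = urlById.get(String.valueOf(row.get("file_id")));
            if (url != null) {
                Object current = out.get("fileurl");
                out.put("fileurl", current == null ? url : current + "," + url);
            }
        }
        return new ArrayList<>(grouped.values());
    }
}
```

Calling TaskFileMerger.merge(phyRows, fileRows) with the two query results should give a list shaped like the join result: one map per phy_obj_id whose fileurl value is the comma-joined URLs. The order of URLs inside each group follows the row order of the first query, which may differ from what GROUP_CONCAT produces unless both are sorted explicitly.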