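import asyncio
import os

from langchain_community.vectorstores import Milvus
from langchain_core.output_parsers import StrOutputParser
from langchain_core.prompts import ChatPromptTemplate
from langchain_core.runnables import RunnableParallel, RunnablePassthrough
from langchain_openai import ChatOpenAI, OpenAIEmbeddings
from pydantic import SecretStr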

# Local Ollama alternative (disabled):
# ollamba_basurl = "http://localhost:11434"
# model_name = "deepseekr1:7b"
# llm = ChatOllama(
#     temperature=0.0,
#     model=model_name,
#     base_url=ollamba_basurl,
# )

llm = ChatOpenAI(
    base_url=os.getenv("OPENAI_URL"),
    model=os.getenv("QIANWEN_MODEL_NAME"),
    api_key=os.getenv("OPENAI_API_KEY"),
)

# embeddings = OllamaEmbeddings(
#     model="quentinz/bge-large-zh-v1.5:latest",
#     base_url=ollamba_basurl,
# )

embeddings = OpenAIEmbeddings(
    base_url="https://ark.cn-beijing.volces.com/api/v3",
    api_key=os.getenv("OPENAI_EMBEDDING_API_KEY"),
    model="doubao-embedding-large-text-240915",
)

collection = 'pdf_document'

try:
    db = Milvus(
        embedding_function=embeddings,
        collection_name=collection,
        text_field="content",
        vector_field="embedding",
        connection_args={
            "host": "192.168.64.145",
            "port": "19530",
            "user": "root",
            "password": "Milvus",
            "db_name": "default",
        },
    )
except Exception as e:
    print(f"Failed to connect to Milvus: {e}")
    raise

# Create a similarity-search retriever that returns the top 3 matches
retriever = db.as_retriever(
    search_type="similarity",
    search_kwargs={"k": 3},
)

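# Build the prompt
prompt = ChatPromptTemplate.from_template(
    """
Answer directly from the context below. Output only the final answer, with no reasoning. If you do not know the answer, say that you do not know.

Context: {content}

Question: {question}

Answer:
"""
)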

# Build the chain: retrieve context, fill the prompt, call the model, parse to text
setup_retriever = RunnableParallel({"content": retriever, "question": RunnablePassthrough()})
chain = setup_retriever | prompt | llm | StrOutputParser()

async def get_answer(question):
    # Stream the answer chunk by chunk as the model produces it
    async for chunk in chain.astream(question):
        print(chunk, end="", flush=True)

if __name__ == '__main__':
    response = chain.invoke("bright grace 下面是什么")
    print(response)

    # Streaming alternative:
    # asyncio.run(get_answer("Answer a question you know the answer to"))

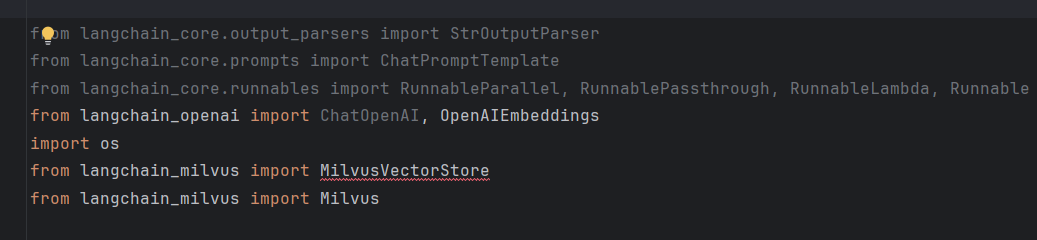

D:\code\python-start-demo\ai\ollama_embedding.py:43: LangChainDeprecationWarning: The class `Milvus` was deprecated in LangChain 0.2.0 and will be removed in 1.0. An updated version of the class exists in the :class:`~langchain-milvus package and should be used instead. To use it run `pip install -U :class:`~langchain-milvus` and import as `from :class:`~langchain_milvus import MilvusVectorStore``.

db = Milvus(
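The deprecation warning above is separate from the failure in the traceback that follows, but it can be cleared by moving to the `langchain-milvus` package. Below is a minimal sketch, not a verified migration. It assumes the package exports the class as `Milvus` (the `MilvusVectorStore` name in the warning text looks garbled, so check your installed version) and that it accepts a `uri`-style `connection_args`. The helper names `milvus_connection_args` and `build_vector_store` are hypothetical; the address and credentials are copied from the script.

```python
def milvus_connection_args() -> dict:
    """Connection settings from the original script, expressed as a single URI."""
    return {
        "uri": "http://192.168.64.145:19530",
        "user": "root",
        "password": "Milvus",
        "db_name": "default",
    }


def build_vector_store(embeddings):
    """Create the vector store using the non-deprecated package."""
    # Imported lazily so this module still loads before
    # `pip install -U langchain-milvus` has been run.
    # Assumption: the class is exported as `Milvus`.
    from langchain_milvus import Milvus

    return Milvus(
        embedding_function=embeddings,
        collection_name="pdf_document",
        text_field="content",
        vector_field="embedding",
        connection_args=milvus_connection_args(),
    )
```

With this in place, `db = build_vector_store(embeddings)` would replace the `Milvus(...)` call inside the `try` block.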

Traceback (most recent call last):
  File "D:\code\python-start-demo\ai\ollama_embedding.py", line 86, in <module>
    response = chain.invoke("bright grace 下面是什么")
               ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "D:\ProgramData\conda_envs\myenv\Lib\site-packages\langchain_core\runnables\base.py", line 3045, in invoke
    input_ = context.run(step.invoke, input_, config, **kwargs)
             ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "D:\ProgramData\conda_envs\myenv\Lib\site-packages\langchain_core\runnables\base.py", line 3774, in invoke
    output = {key: future.result() for key, future in zip(steps, futures)}
                   ^^^^^^^^^^^^^^^
  File "D:\ProgramData\conda_envs\myenv\Lib\concurrent\futures\_base.py", line 456, in result
    return self.__get_result()
           ^^^^^^^^^^^^^^^^^^^
  File "D:\ProgramData\conda_envs\myenv\Lib\concurrent\futures\_base.py", line 401, in __get_result
    raise self._exception
  File "D:\ProgramData\conda_envs\myenv\Lib\concurrent\futures\thread.py", line 59, in run
    result = self.fn(*self.args, **self.kwargs)
             ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "D:\ProgramData\conda_envs\myenv\Lib\site-packages\langchain_core\runnables\base.py", line 3758, in _invoke_step
    return context.run(
           ^^^^^^^^^^^^
  File "D:\ProgramData\conda_envs\myenv\Lib\site-packages\langchain_core\retrievers.py", line 259, in invoke
    result = self._get_relevant_documents(
             ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "D:\ProgramData\conda_envs\myenv\Lib\site-packages\langchain_core\vectorstores\base.py", line 1079, in _get_relevant_documents
    docs = self.vectorstore.similarity_search(query, **_kwargs)
           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "C:\Users\xieme\AppData\Roaming\Python\Python312\site-packages\langchain_community\vectorstores\milvus.py", line 663, in similarity_search
    res = self.similarity_search_with_score(
          ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "C:\Users\xieme\AppData\Roaming\Python\Python312\site-packages\langchain_community\vectorstores\milvus.py", line 734, in similarity_search_with_score
    embedding = self.embedding_func.embed_query(query)
                ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "D:\ProgramData\conda_envs\myenv\Lib\site-packages\langchain_openai\embeddings\base.py", line 638, in embed_query
    return self.embed_documents([text], **kwargs)[0]
           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "D:\ProgramData\conda_envs\myenv\Lib\site-packages\langchain_openai\embeddings\base.py", line 590, in embed_documents
    return self._get_len_safe_embeddings(
           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "D:\ProgramData\conda_envs\myenv\Lib\site-packages\langchain_openai\embeddings\base.py", line 478, in _get_len_safe_embeddings
    response = self.client.create(
               ^^^^^^^^^^^^^^^^^^^
  File "D:\ProgramData\conda_envs\myenv\Lib\site-packages\openai\resources\embeddings.py", line 129, in create
    return self._post(
           ^^^^^^^^^^^
  File "D:\ProgramData\conda_envs\myenv\Lib\site-packages\openai\_base_client.py", line 1242, in post
    return cast(ResponseT, self.request(cast_to, opts, stream=stream, stream_cls=stream_cls))
                           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "D:\ProgramData\conda_envs\myenv\Lib\site-packages\openai\_base_client.py", line 1037, in request
    raise self._make_status_error_from_response(err.response) from None
openai.BadRequestError: Error code: 400 - {'error': {'code': 'InvalidParameter', 'message': 'The parameter `input[0]` specified in the request are not valid: expected a string, but got `[73216 21507 40195 28190 21043 6271 222 82696]` instead. Request id: 02174999054139521b512236e6af9dc2992721609ebcc354653b4', 'param': 'input[0]', 'type': 'BadRequest'}}
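
The last line of the traceback says the endpoint received `input[0]` as a list of token IDs instead of a string. By default, `OpenAIEmbeddings` tokenizes the text locally and sends token arrays (the `check_embedding_ctx_length` option, which defaults to `True`). OpenAI's own API accepts that form, but this OpenAI-compatible endpoint apparently does not. Below is a minimal sketch of a likely fix, assuming the installed `langchain_openai` exposes that option. The helper names `embedding_kwargs` and `build_embeddings` are hypothetical; the URL, model name, and environment variable are copied from the script.

```python
import os


def embedding_kwargs() -> dict:
    """Settings for OpenAIEmbeddings against an OpenAI-compatible endpoint."""
    return {
        "base_url": "https://ark.cn-beijing.volces.com/api/v3",
        "api_key": os.getenv("OPENAI_EMBEDDING_API_KEY"),
        "model": "doubao-embedding-large-text-240915",
        # Send the raw strings instead of locally tokenized ID arrays,
        # which is what the 400 response complains about.
        "check_embedding_ctx_length": False,
    }


def build_embeddings():
    """Create the embeddings client with local tokenization disabled."""
    # Imported lazily so the settings can be inspected without the package.
    from langchain_openai import OpenAIEmbeddings

    return OpenAIEmbeddings(**embedding_kwargs())
```

Using `embeddings = build_embeddings()` in place of the original `OpenAIEmbeddings(...)` call should make `embed_query` post plain text. One trade-off: with the check disabled, the library no longer splits long inputs to fit the model's context length, so very long chunks would have to be split before embedding.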