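When using the AKShare API, having your IP banned by the server because of frequent requests is a common problem. It mostly occurs when interfaces are called at high frequency, when no reasonable interval is set between requests, or when no proxy IPs are used, and it is especially pronounced when fetching stock, futures, and other financial data in bulk. How can you avoid an IP ban while keeping data collection efficient? Common solutions include adding request delays (e.g. time.sleep), using a random User-Agent, building an IP proxy pool to rotate the outbound IP, and accessing an enterprise-grade API service through the officially recommended channel. In addition, planning the request rate sensibly, caching data you already have, and using a local database to cut down on repeated requests are key optimizations.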


Answer provided by 【宝码香车】, written with reference to DeepSeek and then edited.


To address IP bans caused by frequent AKShare API requests, here is a complete set of solutions.

Core Solutions

1. Basic Protection Strategy

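```python
import random
import time

import akshare as ak
from fake_useragent import UserAgent


class SafeAKShareClient:
    def __init__(self):
        self.ua = UserAgent()
        self.min_delay = 1  # minimum delay in seconds
        self.max_delay = 3  # maximum delay in seconds

    def safe_request(self, func, *args, **kwargs):
        """Call an AKShare function after a random delay."""
        # Random delay between requests
        delay = random.uniform(self.min_delay, self.max_delay)
        time.sleep(delay)

        # Random User-Agent. Note: `func` receives only *args/**kwargs, so
        # these headers are generated but not sent in this sketch; applying
        # them depends on how AKShare issues its HTTP requests.
        headers = {"User-Agent": self.ua.random}

        try:
            return func(*args, **kwargs)
        except Exception as e:
            print(f"Request failed: {e}")
            return None


# Usage example
client = SafeAKShareClient()
stock_data = client.safe_request(ak.stock_zh_a_hist, symbol="000001")
```

2. IP Proxy Pool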

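```python
import os
from itertools import cycle


class ProxyAKShareClient:
    def __init__(self, proxy_list=None):
        self.proxies_pool = cycle(proxy_list) if proxy_list else None
        self.current_proxy = next(self.proxies_pool) if self.proxies_pool else None
        self.request_count = 0
        self.switch_threshold = 50  # switch proxy every 50 requests

    def get_current_proxy(self):
        """Return the active proxy, rotating once the threshold is reached."""
        if self.proxies_pool is None:
            return None
        if self.request_count >= self.switch_threshold:
            self.request_count = 0
            self.current_proxy = next(self.proxies_pool)
        self.request_count += 1
        return self.current_proxy

    def make_request_with_proxy(self, func, *args, **kwargs):
        proxy = self.get_current_proxy()
        if proxy:
            # Assumption: AKShare sends its HTTP calls through `requests`,
            # which reads these environment variables by default.
            # Adjust this to match how AKShare actually behaves.
            os.environ["HTTP_PROXY"] = proxy
            os.environ["HTTPS_PROXY"] = proxy
        return func(*args, **kwargs)


# Example proxy list
proxies = [
    "http://user:pass@proxy1.example.com:8080",
    "http://user:pass@proxy2.example.com:8080",
    # add more proxies...
]
```

3. Smart Request Scheduler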

```python
import random
import threading
import time
from datetime import datetime, timedelta


class RequestScheduler:
    def __init__(self, max_requests_per_minute=30):
        self.max_requests = max_requests_per_minute
        self.request_timestamps = []
        self.lock = threading.Lock()

    def can_make_request(self):
        """Check whether a request is allowed right now."""
        now = datetime.now()
        one_minute_ago = now - timedelta(minutes=1)

        with self.lock:
            # Drop timestamps older than one minute
            self.request_timestamps = [
                ts for ts in self.request_timestamps if ts > one_minute_ago
            ]

            if len(self.request_timestamps) < self.max_requests:
                self.request_timestamps.append(now)
                return True
            return False

    def schedule_request(self, func, *args, **kwargs):
        """Run the request once the rate limit allows it."""
        while not self.can_make_request():
            # Wait until a request slot is available
            time.sleep(random.uniform(1, 2))

        return func(*args, **kwargs)
```

4. Data Caching

```python
import hashlib
import json
import pickle
import sqlite3
import time


class DataCache:
    def __init__(self, db_path="akshare_cache.db"):
        self.db_path = db_path
        self.init_database()

    def init_database(self):
        """Create the cache table if it does not exist."""
        conn = sqlite3.connect(self.db_path)
        conn.execute('''
            CREATE TABLE IF NOT EXISTS cache (
                id INTEGER PRIMARY KEY,
                request_hash TEXT UNIQUE,
                data BLOB,
                created_at REAL,
                expires_at REAL
            )
        ''')
        conn.commit()
        conn.close()

    def get_cache_key(self, func_name, params):
        """Build a cache key from the function name and its parameters."""
        key_str = f"{func_name}:{json.dumps(params, sort_keys=True, default=str)}"
        return hashlib.md5(key_str.encode()).hexdigest()

    def get_cached_data(self, func_name, params):
        """Return the cached value, or None if it is missing or expired."""
        cache_key = self.get_cache_key(func_name, params)
        conn = sqlite3.connect(self.db_path)
        row = conn.execute(
            "SELECT data FROM cache WHERE request_hash = ? AND expires_at > ?",
            (cache_key, time.time()),
        ).fetchone()
        conn.close()
        return pickle.loads(row[0]) if row else None

    def set_cached_data(self, func_name, params, data, cache_minutes=60):
        """Store a value with an expiry time."""
        cache_key = self.get_cache_key(func_name, params)
        now = time.time()  # epoch seconds avoid sqlite3's datetime adapters
        conn = sqlite3.connect(self.db_path)
        # pickle is used because AKShare returns pandas DataFrames, which
        # json.dumps cannot serialize. Only load cache files you created.
        conn.execute(
            "INSERT OR REPLACE INTO cache "
            "(request_hash, data, created_at, expires_at) VALUES (?, ?, ?, ?)",
            (cache_key, pickle.dumps(data), now, now + cache_minutes * 60),
        )
        conn.commit()
        conn.close()
```

Complete Solution

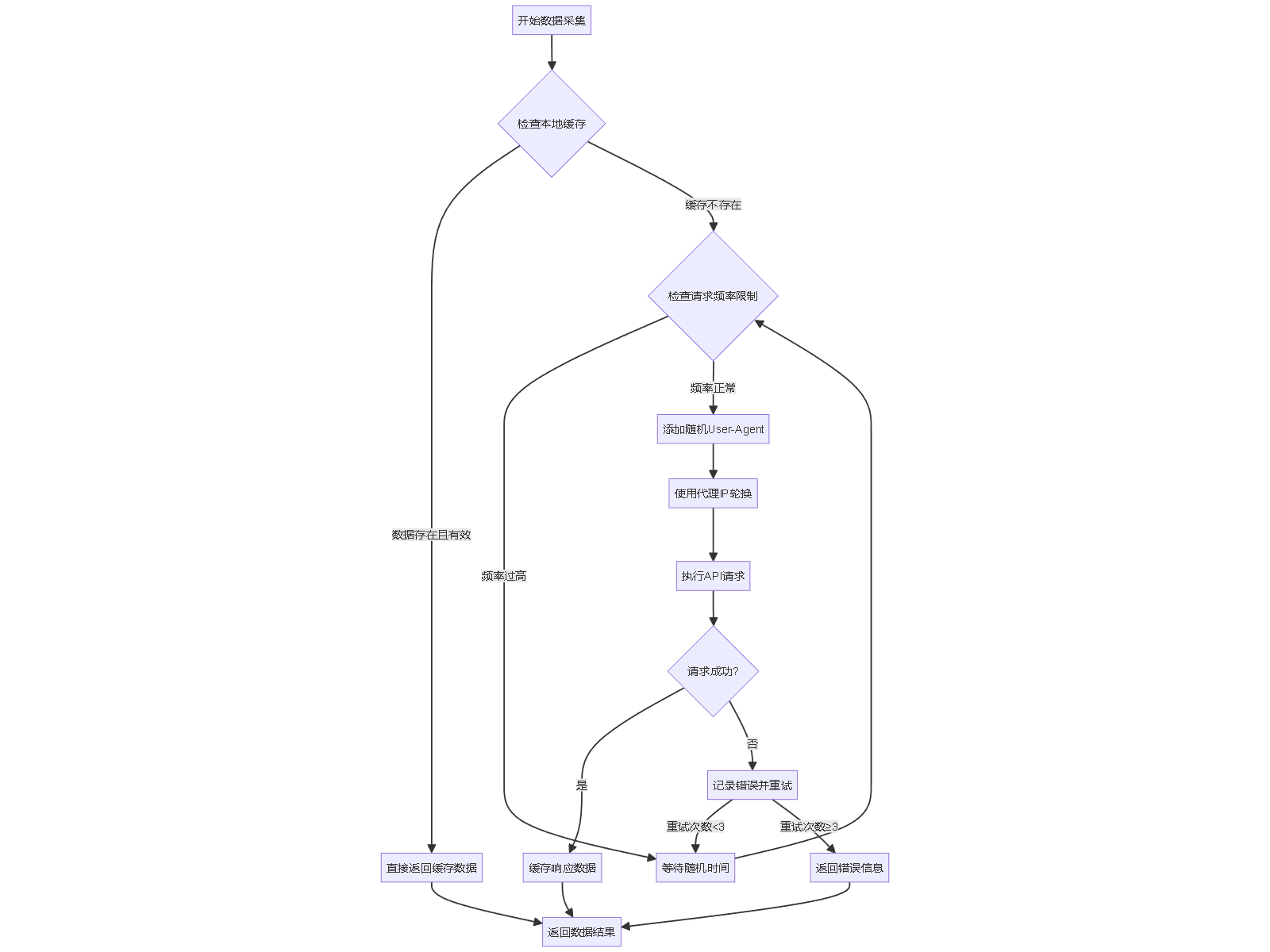

```python
class AdvancedAKShareClient:
    """Advanced AKShare client combining caching and request scheduling."""

    def __init__(self, config=None):
        self.config = config or {}
        self.ua = UserAgent()
        self.cache = DataCache()
        self.scheduler = RequestScheduler(
            max_requests_per_minute=self.config.get('max_requests_per_minute', 30)
        )

    def smart_request(self, func, *args, **kwargs):
        """Serve from the cache when possible, otherwise schedule a request."""
        func_name = func.__name__
        params = {'args': args, 'kwargs': kwargs}

        # 1. Check the cache first. Compare against None, because
        #    truth-testing a pandas DataFrame raises ValueError.
        cached_data = self.cache.get_cached_data(func_name, params)
        if cached_data is not None:
            print("Loaded data from cache")
            return cached_data

        # 2. Schedule the request
        print("Sending a new request")
        result = self.scheduler.schedule_request(func, *args, **kwargs)

        # 3. Cache the result
        if result is not None:
            self.cache.set_cached_data(func_name, params, result)

        return result


# Usage example
client = AdvancedAKShareClient({'max_requests_per_minute': 20})

# Fetch stock data in bulk
stocks = ['000001', '000002', '000858']
for stock in stocks:
    data = client.smart_request(ak.stock_zh_a_hist, symbol=stock)
    if data is not None:
        print(f"Fetched data for {stock}")
```

Best-Practice Workflow

Configuration Recommendations

```yaml
# config.yaml
akshare_client:
  request_settings:
    max_requests_per_minute: 30
    min_delay_seconds: 1
    max_delay_seconds: 5
    retry_times: 3
  cache_settings:
    enabled: true
    default_expire_minutes: 120
    db_path: "data/akshare_cache.db"
  proxy_settings:
    enabled: false
    proxy_list:
      - "http://proxy1.example.com:8080"
      - "http://proxy2.example.com:8080"
```

Key Recommendations

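- Follow the terms of service: understand and respect AKShare's usage limits

- Collect in off-peak periods: avoid peak trading hours and collect data when traffic is light

- Incremental updates: request only new or changed data

- Monitoring and alerting: set up monitoring and alerts for failed requests

- Backup data sources: prepare a fallback data source in case the primary service is unavailable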
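The incremental-update recommendation above can be sketched as a small date helper. This is a minimal sketch, not part of the original answer: the function names `next_start_date` and `incremental_range` and the default start date are hypothetical, and it assumes you already track the last stored trading date per symbol (for example in the local cache database) as a `YYYYMMDD` string.

```python
from datetime import date, datetime, timedelta
from typing import Optional, Tuple


def next_start_date(last_stored: Optional[str], default_start: str = "20200101") -> str:
    """Return the first date (YYYYMMDD) that still needs to be fetched."""
    if last_stored is None:
        # Nothing stored yet: fall back to a full-history start date (assumed value)
        return default_start
    last = datetime.strptime(last_stored, "%Y%m%d").date()
    return (last + timedelta(days=1)).strftime("%Y%m%d")


def incremental_range(
    last_stored: Optional[str], today: Optional[date] = None
) -> Optional[Tuple[str, str]]:
    """Return (start_date, end_date) to request, or None if already up to date."""
    today = today or date.today()
    start = next_start_date(last_stored)
    end = today.strftime("%Y%m%d")
    if start > end:  # YYYYMMDD strings sort chronologically
        return None
    return start, end


# Usage: only the missing days are requested
rng = incremental_range("20240105", today=date(2024, 1, 10))
if rng is not None:
    start_date, end_date = rng
    # e.g. ak.stock_zh_a_hist(symbol="000001", start_date=start_date, end_date=end_date)
```

Returning `None` when the stored data is already current lets the caller skip the network request entirely, which is the cheapest way to stay under a rate limit.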

Taken together, these measures can greatly reduce the chance of an IP ban while keeping data collection efficient and reliable.
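The configuration above lists a `retry_times` setting and the recommendations mention failure monitoring, but neither appears in the client code. One possible way to combine them is a retry wrapper with exponential backoff and a consecutive-failure counter. This is a sketch under assumptions: the class name `RetryingCaller`, the `alert_threshold` parameter, and the print-based alert are hypothetical placeholders for whatever alerting you actually use.

```python
import random
import time


class RetryingCaller:
    """Retry a callable with exponential backoff and count consecutive failures."""

    def __init__(self, retry_times=3, base_delay=1.0, alert_threshold=5, sleep=time.sleep):
        self.retry_times = retry_times          # retries after the first attempt
        self.base_delay = base_delay            # seconds; doubled on each retry
        self.alert_threshold = alert_threshold  # failures in a row before alerting
        self.sleep = sleep                      # injectable, so tests need not wait
        self.consecutive_failures = 0

    def call(self, func, *args, **kwargs):
        last_error = None
        for attempt in range(self.retry_times + 1):
            try:
                result = func(*args, **kwargs)
                self.consecutive_failures = 0  # any success resets the counter
                return result
            except Exception as exc:
                last_error = exc
                self.consecutive_failures += 1
                if self.consecutive_failures >= self.alert_threshold:
                    # Placeholder alert; replace with logging, email, etc.
                    print(f"ALERT: {self.consecutive_failures} consecutive failures")
                if attempt < self.retry_times:
                    # Backoff of base * 2^attempt plus up to 1 second of jitter
                    delay = self.base_delay * (2 ** attempt) + random.uniform(0, 1)
                    self.sleep(delay)
        raise last_error
```

A call such as `RetryingCaller(retry_times=3).call(ak.stock_zh_a_hist, symbol="000001")` would then wait roughly 1, 2, and 4 seconds (plus jitter) between attempts before giving up and re-raising the last error.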
