"""MyProblem.py"""

import numpy as np

import geatpy as ea

from sklearn import preprocessing

from sklearn.model_selection import cross_val_score

from multiprocessing.dummy import Pool as ThreadPool

from sklearn import metrics

from sklearn.ensemble import RandomForestRegressor

from sklearn import preprocessing

from sklearn.model_selection import train_test_split

import pandas as pd

from sklearn import model_selection

from sklearn.model_selection import train_test_split

from sklearn.metrics import mean_squared_error#平均绝对误差,越小越好

from sklearn.metrics import explained_variance_score#解释方差分,越近1越好

from sklearn.metrics import mean_absolute_error#中位数绝对误差

from sklearn.metrics import r2_score#越近1,解释程度越高

class MyProblem(ea.Problem): # 继承Problem父类

def __init__(self):

name = 'MyProblem' # 初始化name(函数名称,可以随意设置)

M =1 # 初始化M(目标维数)

maxormins = [-1] # 初始化maxormins(目标最小最大化标记列表,1:最小化该目标;-1:最大化该目标)

Dim = 32 # 初始化Dim(决策变量维数)

varTypes = [0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0,0] # 初始化varTypes(决策变量的类型,元素为0表示对应的变量是连续的;1表示是离散的)

lb = [1.835,2.55,9.11,0.17,2.028,0.0198,6.0,9.89111,967.684464,5.2597,4.2183,2.52261,3.6183,2.354,2.413,0.433786,0.542036,0.480721,2.245,1.015,2.7709,0.351,0.0,0.418,255.8193102,0.0,0.0,0.0,1.68802,2.55,1.6811,2.55]# 决策变量下界

ub = [ 2.0167,2.676666667,10.5,0.368,2.19,0.13068,7.0,10.6516,1208.4941,10.7293,6.3184,3.133,3.863,2.63018,2.653,0.729645,0.681679,0.628998,2.834,2.164,3.437,0.66,0.41,0.761,374.7309258,34.13703714,34.51384964,73.42387428,2.062256,2.96,1.84,2.87163]# 决策变量上界

lbin =[ 2.0167,2.676666667,10.5,0.368,2.19,0.13068,7.0,10.6516,1208.4941,10.7293,6.3184,3.133,3.863,2.63018,2.653,0.729645,0.681679,0.628998,2.834,2.164,3.437,0.66,0.41,0.761,374.7309258,34.13703714,34.51384964,73.42387428,2.062256,2.96,1.84,2.87163] # 决策变量下边界(0表示不包含该变量的下边界,1表示包含)

ubin = [ 2.0167,2.676666667,10.5,0.368,2.19,0.13068,7.0,10.6516,1208.4941,10.7293,6.3184,3.133,3.863,2.63018,2.653,0.729645,0.681679,0.628998,2.834,2.164,3.437,0.66,0.41,0.761,374.7309258,34.13703714,34.51384964,73.42387428,2.062256,2.96,1.84,2.87163] # 决策变量上边界(0表示不包含该变量的上边界,1表示包含)

# 调用父类构造方法完成实例化

ea.Problem.__init__(self, name, M, maxormins, Dim, varTypes, lb, ub, lbin, ubin)

# 目标函数计算中用到的一些数据

df=pd.read_excel('traindata-0.5D0 - he.xlsx')

data_targets = []

data=df.ix[0:,4:36].values#样本

data=data.tolist()

target_names=df.ix[0:,36].values#类

self.data=data

self.target_names=target_names

def aimFunc(self, pop): # 目标函数

Vars = pop.Phen # 得到决策变量矩阵

pop.ObjV = np.zeros((pop.sizes, 1)) # 初始化种群个体目标函数值列向量

def subAimFunc(i):

from sklearn.model_selection import train_test_split

Xtrain, Xtest, Ytrain, Ytest = train_test_split(self.data,self.target_names,test_size=0.2,random_state=10)

model= RandomForestRegressor(n_estimators=1,random_state=10)

model.fit(Xtrain, Ytrain)

pred=model.predict(Vars)

scores=mean_squared_error(Ytest,pred)

pool=ThreadPool(2)

pool.map(subAimFunc, list(range(pop.sizes)))

pop.ObjV[i] = len(np.where(pop.Phen-pred))

import geatpy as ea

import numpy as np

from MyPromblem import MyProblem

from sklearn.metrics import mean_absolute_error#中位数绝对误差

from sklearn.metrics import mean_squared_error#平均绝对误差,越小越好

from sklearn.metrics import r2_score#越近1,解释程度越高

from sklearn.metrics import explained_variance_score#解释方差分,越近1越好

"""================================实例化问题对象============================="""

problem = MyProblem() # 生成问题对象

"""==================================种群设置================================"""

Encoding = 'RI' # 编码方式

NIND =50 # 种群规模

Field = ea.crtfld(Encoding, problem.varTypes, problem.ranges, problem.borders) # 创建区域描述器

population = ea.Population(Encoding, Field, NIND) # 实例化种群对象(此时种群还没被初始化,仅仅是完成种群对象的实例化)

"""================================算法参数设置==============================="""

myAlgorithm = ea.soea_GGAP_SGA_templet(problem, population) # 实例化一个算法模板对象

myAlgorithm.MAXGEN = 300 # 最大进化代数

myAlgorithm.trappedValue = 1e-6 # “进化停滞”判断阈值

myAlgorithm.maxTrappedCount = 100 # 进化停滞计数器最大上限值,如果连续maxTrappedCount代被判定进化陷入停滞,则终止进化

"""===========================调用算法模板进行种群进化===========================

调用run执行算法模板。NDSet是一个种群类Population的对象。

NDSet.ObjV为最优解个体的目标函数值;NDSet.Phen为对应的决策变量值。

详见Population.py中关于种群类的定义。

"""

myAlgorithm.drawing=2

[population, obj_trace, var_trace] = myAlgorithm.run() # 执行算法模板

Chrom = ea.crtbp(NIND,pop.Lind)

population.save()

print(problem.getReferObjV())

best_gen=np.argmin(problem.maxormins*obj_trace[:,1])

best_ObjV = obj_trace[best_gen, 1]

print('最优的目标函数值为:%s'%(best_ObjV))

print('最优的控制变量值为:')

for i in range(var_trace.shape[1]):

print('有效进化代数:%s'%(obj_trace.shape[0]))

print('最优的一代是第 %s 代'%(best_gen + 1))

print('评价次数:%s'%(myAlgorithm.evalsNum))

print('时间已过 %s 秒'%(myAlgorithm.passTime))

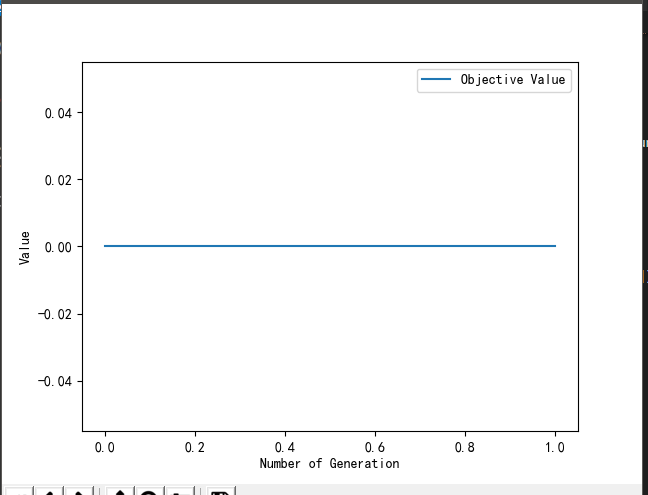

代码运行以后就是这样一根线

请问这样的问题要如何解决