Relevant code

Running hive produces the following error:

[root@wuyiling-aliyunhost1 bin]# hive

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/module/tez-0.10.1/lib/slf4j-log4j12-1.7.30.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/hadoop/share/hadoop/common/lib/slf4j-log4j12-1.7.30.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

which: no hbase in (.:/opt/module/maven3.8.3/bin:/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/usr/java8/bin:/opt/hadoop//bin:/opt/hadoop//sbin:/opt/hadoop//bin:/opt/module/hive/bin:/root/bin)

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/module/tez-0.10.1/lib/slf4j-log4j12-1.7.30.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/hadoop/share/hadoop/common/lib/slf4j-log4j12-1.7.30.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

Hive Session ID = 77dc519b-d97c-4dbf-bbe8-3096279f77b8

Logging initialized using configuration in file:/opt/module/hive/conf/hive-log4j2.properties Async: true

Exception in thread "main" java.lang.RuntimeException: java.io.IOException: Previous writer likely failed to write hdfs://wuyiling-aliyunhost1:9000/tmp/hive/root/_tez_session_dir/77dc519b-d97c-4dbf-bbe8-3096279f77b8-resources/hadoop-lzo-0.4.20.0.jar. Failing because I am unlikely to write too.

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:683)

at org.apache.hadoop.hive.ql.session.SessionState.beginStart(SessionState.java:591)

at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:747)

at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:683)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:497)

at org.apache.hadoop.util.RunJar.run(RunJar.java:323)

at org.apache.hadoop.util.RunJar.main(RunJar.java:236)

Caused by: java.io.IOException: Previous writer likely failed to write hdfs://wuyiling-aliyunhost1:9000/tmp/hive/root/_tez_session_dir/77dc519b-d97c-4dbf-bbe8-3096279f77b8-resources/hadoop-lzo-0.4.20.0.jar. Failing because I am unlikely to write too.

at org.apache.hadoop.hive.ql.exec.tez.DagUtils.localizeResource(DagUtils.java:1191)

at org.apache.hadoop.hive.ql.exec.tez.DagUtils.addTempResources(DagUtils.java:1042)

at org.apache.hadoop.hive.ql.exec.tez.DagUtils.localizeTempFilesFromConf(DagUtils.java:931)

at org.apache.hadoop.hive.ql.exec.tez.TezSessionState.ensureLocalResources(TezSessionState.java:610)

at org.apache.hadoop.hive.ql.exec.tez.TezSessionState.openInternal(TezSessionState.java:287)

at org.apache.hadoop.hive.ql.exec.tez.TezSessionState.beginOpen(TezSessionState.java:256)

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:680)

... 9 more

Symptoms and background

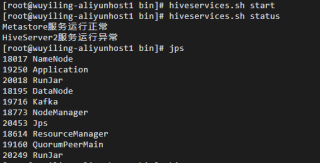

Hadoop and hiveservice start up normally.

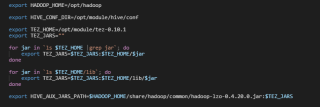

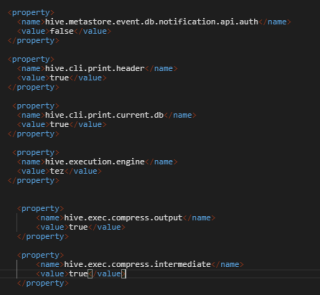

Other configuration:

hive-env.xml

hive-site.xml

Results and error output

The log is as follows:

There appear to be two kinds of errors.

Error 1:

2021-11-29T11:11:09,035 INFO [main] handler.ContextHandler: Started o.e.j.s.ServletContextHandler@31b82e0f{/logs,file:///tmp/root/,AVAILABLE}

2021-11-29T11:11:09,041 INFO [main] server.HiveServer2: Web UI has started on port 10002

2021-11-29T11:11:09,041 INFO [main] server.HiveServer2: HS2 interactive HA not enabled. Starting tez sessions..

2021-11-29T11:11:09,041 INFO [main] server.HiveServer2: Starting/Reconnecting tez sessions..

2021-11-29T11:11:09,041 INFO [main] server.HiveServer2: Initializing tez session pool manager

2021-11-29T11:11:09,041 INFO [main] server.AbstractConnector: Started ServerConnector@580fd26b{HTTP/1.1,[http/1.1]}{0.0.0.0:10002}

2021-11-29T11:11:09,041 INFO [main] server.Server: Started @5758ms

2021-11-29T11:11:09,041 INFO [main] http.HttpServer: Started HttpServer[hiveserver2] on port 10002

2021-11-29T11:11:09,062 INFO [main] server.HiveServer2: Tez session pool manager initialized.

2021-11-29T11:11:09,062 INFO [main] server.HiveServer2: Workload management is not enabled.

2021-11-29T11:11:09,062 INFO [main] tez.TezSessionState: Started trigger validator with interval: 500 ms

2021-11-29T11:11:34,049 ERROR [pool-7-thread-1] tez.DagUtils: Could not find the jar that was being uploaded

2021-11-29T11:12:08,516 ERROR [NotificationEventPoll 0] common.FileUtils: Invalid file path file://

java.net.URISyntaxException: Expected authority at index 7: file://

at java.net.URI$Parser.fail(URI.java:2848) ~[?:1.8.0_41]

at java.net.URI$Parser.failExpecting(URI.java:2854) ~[?:1.8.0_41]

at java.net.URI$Parser.parseHierarchical(URI.java:3102) ~[?:1.8.0_41]

at java.net.URI$Parser.parse(URI.java:3053) ~[?:1.8.0_41]

at java.net.URI.<init>(URI.java:588) ~[?:1.8.0_41]

at org.apache.hadoop.hive.common.FileUtils.getURI(FileUtils.java:981) ~[hive-common-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.common.FileUtils.getJarFilesByPath(FileUtils.java:1005) [hive-common-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.conf.HiveConf.initialize(HiveConf.java:5198) [hive-common-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.conf.HiveConf.<init>(HiveConf.java:5104) [hive-common-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.ql.metadata.Hive.createHiveConf(Hive.java:383) [hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.ql.metadata.Hive.create(Hive.java:372) [hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.ql.metadata.Hive.getInternal(Hive.java:355) [hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.ql.metadata.Hive.get(Hive.java:401) [hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.ql.metadata.Hive.get(Hive.java:397) [hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.ql.metadata.events.NotificationEventPoll$Poller.run(NotificationEventPoll.java:138) [hive-exec-3.1.2.jar:3.1.2]

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511) [?:1.8.0_41]

at java.util.concurrent.FutureTask.runAndReset(FutureTask.java:308) [?:1.8.0_41]

at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.access$301(ScheduledThreadPoolExecutor.java:180) [?:1.8.0_41]

at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.run(ScheduledThreadPoolExecutor.java:294) [?:1.8.0_41]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142) [?:1.8.0_41]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617) [?:1.8.0_41]

at java.lang.Thread.run(Thread.java:745) [?:1.8.0_41]

2021-11-29T11:12:08,523 INFO [NotificationEventPoll 0] metastore.HiveMetaStoreClient: Trying to connect to metastore with URI thrift://wuyiling-aliyunhost1:9083

2021-11-29T11:12:08,524 INFO [NotificationEventPoll 0] metastore.HiveMetaStoreClient: Opened a connection to metastore, current connections: 2

2021-11-29T11:12:08,531 INFO [NotificationEventPoll 0] metastore.HiveMetaStoreClient: Connected to metastore.

2021-11-29T11:12:08,531 INFO [NotificationEventPoll 0] metastore.RetryingMetaStoreClient: RetryingMetaStoreClient proxy=class org.apache.hadoop.hive.ql.metadata.SessionHiveMetaStoreClient ugi=root (auth:SIMPLE) retries=1 delay=1 lifetime=0

2021-11-29T11:12:40,840 INFO [main] conf.HiveConf: Found configuration file file:/opt/module/hive/conf/hive-site.xml

2021-11-29T11:12:41,245 ERROR [main] common.FileUtils: Invalid file path file://

java.net.URISyntaxException: Expected authority at index 7: file://

at java.net.URI$Parser.fail(URI.java:2848) ~[?:1.8.0_41]

at java.net.URI$Parser.failExpecting(URI.java:2854) ~[?:1.8.0_41]

at java.net.URI$Parser.parseHierarchical(URI.java:3102) ~[?:1.8.0_41]

at java.net.URI$Parser.parse(URI.java:3053) ~[?:1.8.0_41]

at java.net.URI.<init>(URI.java:588) ~[?:1.8.0_41]

at org.apache.hadoop.hive.common.FileUtils.getURI(FileUtils.java:981) ~[hive-common-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.common.FileUtils.getJarFilesByPath(FileUtils.java:1005) [hive-common-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.conf.HiveConf.initialize(HiveConf.java:5198) [hive-common-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.conf.HiveConf.<init>(HiveConf.java:5099) [hive-common-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.common.LogUtils.initHiveLog4jCommon(LogUtils.java:97) [hive-common-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.common.LogUtils.initHiveLog4j(LogUtils.java:81) [hive-common-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:699) [hive-cli-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:683) [hive-cli-3.1.2.jar:3.1.2]

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) ~[?:1.8.0_41]

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62) ~[?:1.8.0_41]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) ~[?:1.8.0_41]

at java.lang.reflect.Method.invoke(Method.java:497) ~[?:1.8.0_41]

at org.apache.hadoop.util.RunJar.run(RunJar.java:323) [hadoop-common-3.3.1.jar:?]

at org.apache.hadoop.util.RunJar.main(RunJar.java:236) [hadoop-common-3.3.1.jar:?]

2021-11-29T11:12:41,588 ERROR [main] common.FileUtils: Invalid file path file://

java.net.URISyntaxException: Expected authority at index 7: file://

at java.net.URI$Parser.fail(URI.java:2848) ~[?:1.8.0_41]

at java.net.URI$Parser.failExpecting(URI.java:2854) ~[?:1.8.0_41]

at java.net.URI$Parser.parseHierarchical(URI.java:3102) ~[?:1.8.0_41]

at java.net.URI$Parser.parse(URI.java:3053) ~[?:1.8.0_41]

at java.net.URI.<init>(URI.java:588) ~[?:1.8.0_41]

at org.apache.hadoop.hive.common.FileUtils.getURI(FileUtils.java:981) ~[hive-common-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.common.FileUtils.getJarFilesByPath(FileUtils.java:1005) [hive-common-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.conf.HiveConf.initialize(HiveConf.java:5198) [hive-common-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.conf.HiveConf.<init>(HiveConf.java:5104) [hive-common-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:705) [hive-cli-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:683) [hive-cli-3.1.2.jar:3.1.2]

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) ~[?:1.8.0_41]

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62) ~[?:1.8.0_41]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) ~[?:1.8.0_41]

at java.lang.reflect.Method.invoke(Method.java:497) ~[?:1.8.0_41]

at org.apache.hadoop.util.RunJar.run(RunJar.java:323) [hadoop-common-3.3.1.jar:?]

at org.apache.hadoop.util.RunJar.main(RunJar.java:236) [hadoop-common-3.3.1.jar:?]

2021-11-29T11:12:41,622 INFO [main] SessionState: Hive Session ID = 77dc519b-d97c-4dbf-bbe8-3096279f77b8

2021-11-29T11:12:41,674 INFO [main] SessionState:

Logging initialized using configuration in file:/opt/module/hive/conf/hive-log4j2.properties Async: true
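
The Error 1 traces all fail while HiveConf is being initialized, on a path value that is exactly `file://`. A minimal sketch for narrowing that down, assuming (the log does not confirm this) that the bare `file://` comes from a property in hive-site.xml: it lists every property whose value, or one comma-separated entry of it, is only `file://`. The function name and the sample property names are hypothetical, and the sample XML is not the actual hive-site.xml from this setup.

```python
import xml.etree.ElementTree as ET


def find_bare_file_uris(hive_site_xml):
    """Return (name, value) pairs where some entry of the value is exactly 'file://'."""
    root = ET.fromstring(hive_site_xml)
    hits = []
    for prop in root.iter("property"):
        name = (prop.findtext("name") or "").strip()
        value = (prop.findtext("value") or "").strip()
        # Path-list properties are usually comma-separated, so check each entry.
        entries = [entry.strip() for entry in value.split(",")]
        if any(entry == "file://" for entry in entries):
            hits.append((name, value))
    return hits


# Hypothetical sample for illustration only.
SAMPLE = """<configuration>
  <property><name>hive.example.jars.path</name><value>file://</value></property>
  <property><name>hive.example.ok.path</name><value>file:///opt/module/hive/lib</value></property>
</configuration>"""

if __name__ == "__main__":
    for name, value in find_bare_file_uris(SAMPLE):
        print(name, "->", value)
```

To run it against a real file, read the file contents and pass the string in, for example `find_bare_file_uris(open("/opt/module/hive/conf/hive-site.xml").read())`.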

Error 2:

2021-11-29T11:12:41,674 INFO [main] SessionState:

Logging initialized using configuration in file:/opt/module/hive/conf/hive-log4j2.properties Async: true

2021-11-29T11:12:42,310 INFO [main] session.SessionState: Created HDFS directory: /tmp/hive/root/77dc519b-d97c-4dbf-bbe8-3096279f77b8

2021-11-29T11:12:42,329 INFO [main] session.SessionState: Created local directory: /tmp/root/77dc519b-d97c-4dbf-bbe8-3096279f77b8

2021-11-29T11:12:42,335 INFO [main] session.SessionState: Created HDFS directory: /tmp/hive/root/77dc519b-d97c-4dbf-bbe8-3096279f77b8/_tmp_space.db

2021-11-29T11:12:42,361 INFO [main] tez.TezSessionState: User of session id 77dc519b-d97c-4dbf-bbe8-3096279f77b8 is root

2021-11-29T11:12:42,381 INFO [main] tez.DagUtils: Localizing resource because it does not exist: file:/opt/hadoop/share/hadoop/common/hadoop-lzo-0.4.20.0.jar to dest: hdfs://wuyiling-aliyunhost1:9000/tmp/hive/root/_tez_session_dir/77dc519b-d97c-4dbf-bbe8-3096279f77b8-resources/hadoop-lzo-0.4.20.0.jar

2021-11-29T11:12:42,481 WARN [Thread-7] hdfs.DataStreamer: DataStreamer Exception

java.io.IOException: com.google.protobuf.ServiceException: java.lang.NoSuchFieldError: PARSER

at org.apache.hadoop.ipc.ProtobufHelper.getRemoteException(ProtobufHelper.java:71) ~[hadoop-common-3.3.1.jar:?]

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.addBlock(ClientNamenodeProtocolTranslatorPB.java:516) ~[hadoop-hdfs-client-3.1.3.jar:?]

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) ~[?:1.8.0_41]

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62) ~[?:1.8.0_41]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) ~[?:1.8.0_41]

at java.lang.reflect.Method.invoke(Method.java:497) ~[?:1.8.0_41]

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:422) ~[hadoop-common-3.3.1.jar:?]

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeMethod(RetryInvocationHandler.java:165) ~[hadoop-common-3.3.1.jar:?]

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invoke(RetryInvocationHandler.java:157) ~[hadoop-common-3.3.1.jar:?]

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeOnce(RetryInvocationHandler.java:95) ~[hadoop-common-3.3.1.jar:?]

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:359) ~[hadoop-common-3.3.1.jar:?]

at com.sun.proxy.$Proxy29.addBlock(Unknown Source) ~[?:?]

at org.apache.hadoop.hdfs.DFSOutputStream.addBlock(DFSOutputStream.java:1081) ~[hadoop-hdfs-client-3.1.3.jar:?]

at org.apache.hadoop.hdfs.DataStreamer.locateFollowingBlock(DataStreamer.java:1866) ~[hadoop-hdfs-client-3.1.3.jar:?]

at org.apache.hadoop.hdfs.DataStreamer.nextBlockOutputStream(DataStreamer.java:1668) ~[hadoop-hdfs-client-3.1.3.jar:?]

at org.apache.hadoop.hdfs.DataStreamer.run(DataStreamer.java:716) ~[hadoop-hdfs-client-3.1.3.jar:?]

Caused by: com.google.protobuf.ServiceException: java.lang.NoSuchFieldError: PARSER

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.getReturnMessage(ProtobufRpcEngine.java:306) ~[hadoop-common-3.3.1.jar:?]

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:278) ~[hadoop-common-3.3.1.jar:?]

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:123) ~[hadoop-common-3.3.1.jar:?]

at com.sun.proxy.$Proxy28.addBlock(Unknown Source) ~[?:?]

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.addBlock(ClientNamenodeProtocolTranslatorPB.java:514) ~[hadoop-hdfs-client-3.1.3.jar:?]

... 14 more

Caused by: java.lang.NoSuchFieldError: PARSER

at org.apache.hadoop.hdfs.protocol.proto.HdfsProtos$LocatedBlockProto.<init>(HdfsProtos.java:18102) ~[hadoop-hdfs-client-3.1.3.jar:?]

at org.apache.hadoop.hdfs.protocol.proto.HdfsProtos$LocatedBlockProto.<init>(HdfsProtos.java:18018) ~[hadoop-hdfs-client-3.1.3.jar:?]

at org.apache.hadoop.hdfs.protocol.proto.HdfsProtos$LocatedBlockProto$1.parsePartialFrom(HdfsProtos.java:18230) ~[hadoop-hdfs-client-3.1.3.jar:?]

at org.apache.hadoop.hdfs.protocol.proto.HdfsProtos$LocatedBlockProto$1.parsePartialFrom(HdfsProtos.java:18225) ~[hadoop-hdfs-client-3.1.3.jar:?]

at com.google.protobuf.CodedInputStream.readMessage(CodedInputStream.java:309) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$AddBlockResponseProto.<init>(ClientNamenodeProtocolProtos.java:17842) ~[hadoop-hdfs-client-3.1.3.jar:?]

at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$AddBlockResponseProto.<init>(ClientNamenodeProtocolProtos.java:17789) ~[hadoop-hdfs-client-3.1.3.jar:?]

at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$AddBlockResponseProto$1.parsePartialFrom(ClientNamenodeProtocolProtos.java:17880) ~[hadoop-hdfs-client-3.1.3.jar:?]

at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$AddBlockResponseProto$1.parsePartialFrom(ClientNamenodeProtocolProtos.java:17875) ~[hadoop-hdfs-client-3.1.3.jar:?]

at com.google.protobuf.AbstractParser.parseFrom(AbstractParser.java:89) ~[hive-exec-3.1.2.jar:3.1.2]

at com.google.protobuf.AbstractParser.parseFrom(AbstractParser.java:95) ~[hive-exec-3.1.2.jar:3.1.2]

at com.google.protobuf.AbstractParser.parseFrom(AbstractParser.java:49) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.ipc.RpcWritable$ProtobufWrapperLegacy.readFrom(RpcWritable.java:170) ~[hadoop-common-3.3.1.jar:?]

at org.apache.hadoop.ipc.RpcWritable$Buffer.getValue(RpcWritable.java:232) ~[hadoop-common-3.3.1.jar:?]

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.getReturnMessage(ProtobufRpcEngine.java:297) ~[hadoop-common-3.3.1.jar:?]

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:278) ~[hadoop-common-3.3.1.jar:?]

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:123) ~[hadoop-common-3.3.1.jar:?]

at com.sun.proxy.$Proxy28.addBlock(Unknown Source) ~[?:?]

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.addBlock(ClientNamenodeProtocolTranslatorPB.java:514) ~[hadoop-hdfs-client-3.1.3.jar:?]

... 14 more

2021-11-29T11:12:42,483 INFO [main] tez.DagUtils: Looks like another thread or process is writing the same file

2021-11-29T11:12:42,484 INFO [main] tez.DagUtils: Waiting for the file hdfs://wuyiling-aliyunhost1:9000/tmp/hive/root/_tez_session_dir/77dc519b-d97c-4dbf-bbe8-3096279f77b8-resources/hadoop-lzo-0.4.20.0.jar (5 attempts, with 5000ms interval)

2021-11-29T11:13:07,499 ERROR [main] tez.DagUtils: Could not find the jar that was being uploaded
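
One thing the Error 2 trace shows is that frames in the same JVM come from hadoop-common-3.3.1.jar and hadoop-hdfs-client-3.1.3.jar, while the com.google.protobuf frames are served from hive-exec-3.1.2.jar. Whether that mix is the cause of `NoSuchFieldError: PARSER` is not established here, but it is easy to tally. The sketch below (helper names are hypothetical) pulls the jar name and version out of the bracketed suffix on each stack frame and groups the Hadoop artifacts by version; the sample lines are copied from the log above.

```python
import re
from collections import defaultdict

# Matches the "[artifact-version.jar:" suffix that the logger appends to each frame.
JAR_RE = re.compile(r"\[(hadoop-[a-z\-]+?)-(\d+(?:\.\d+)+)\.jar:")


def hadoop_versions(log_text):
    """Map each Hadoop artifact seen in the log to the set of versions it appears with."""
    found = defaultdict(set)
    for artifact, version in JAR_RE.findall(log_text):
        found[artifact].add(version)
    return dict(found)


def distinct_versions(log_text):
    """Return the sorted list of all Hadoop versions seen; more than one means a mix."""
    versions = set()
    for per_artifact in hadoop_versions(log_text).values():
        versions |= per_artifact
    return sorted(versions)


# Two frames taken verbatim from the Error 2 trace.
SAMPLE = (
    "at org.apache.hadoop.ipc.ProtobufHelper.getRemoteException"
    "(ProtobufHelper.java:71) ~[hadoop-common-3.3.1.jar:?]\n"
    "at org.apache.hadoop.hdfs.DFSOutputStream.addBlock"
    "(DFSOutputStream.java:1081) ~[hadoop-hdfs-client-3.1.3.jar:?]\n"
)

if __name__ == "__main__":
    for artifact, versions in sorted(hadoop_versions(SAMPLE).items()):
        print(artifact, sorted(versions))
    if len(distinct_versions(SAMPLE)) > 1:
        print("mixed Hadoop versions on the classpath")
```

Feeding the full hive.log into `hadoop_versions` would show every Hadoop jar that contributed a frame, which may help locate where the 3.1.3 client jar is being picked up from.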

My approach and what I have tried

Originally there was only Error 2. After three days of unrelenting effort, I now have Error 1 as well.

/(ㄒoㄒ)/~~