问题遇到的现象和发生背景

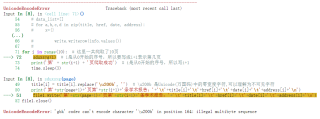

爬虫信息储存txt文件时报错'gbk' codec can't encode character '\u200b' in position 164: illegal multibyte sequence

用代码块功能插入代码,请勿粘贴截图

import requests

import re

import time

import csv

headers = {

'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/80.0.3987.87 Safari/537.36 SE 2.X MetaSr 1.0',

'Cookie': 'BIDUPSID=A87FDC113E9F5C879F4BEA4D7D6F5A72; PSTM=1662346944; BD_UPN=12314753; newlogin=1; BDUSS=40SGNtOGUzSFh2NHFTSi0zZW9Pa0pIeE5NUnB6Ymt0RDdIUGdqVTVDaUpWMTlqRVFBQUFBJCQAAAAAAAAAAAEAAACJedgyQUHHo8POAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAInKN2OJyjdjSU; BDUSS_BFESS=40SGNtOGUzSFh2NHFTSi0zZW9Pa0pIeE5NUnB6Ymt0RDdIUGdqVTVDaUpWMTlqRVFBQUFBJCQAAAAAAAAAAAEAAACJedgyQUHHo8POAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAInKN2OJyjdjSU; BAIDUID=A87FDC113E9F5C878BD59310E3A6E04A:SL=0:NR=10:FG=1; ispeed_lsm=2; BDORZ=FFFB88E999055A3F8A630C64834BD6D0; sug=3; sugstore=1; ORIGIN=0; bdime=0; BAIDUID_BFESS=A87FDC113E9F5C878BD59310E3A6E04A:SL=0:NR=10:FG=1; Hm_lvt_aec699bb6442ba076c8981c6dc490771=1665671890,1665745286; Hm_lpvt_aec699bb6442ba076c8981c6dc490771=1665745286; delPer=0; BD_CK_SAM=1; PSINO=5; BA_HECTOR=212024a12ha5a0010h84886k1hkigg61a; ZFY=:BKhQhsIdmwipwi9PbQ4h5ytjGOPXDsCSQfVRTuUcXVE:C; baikeVisitId=af3dc6a3-770a-4941-ad83-ab9dd0ce59ae; COOKIE_SESSION=129_0_1_0_8_1_1_0_1_1_0_0_129_0_1_0_1665745416_0_1665745415%7C5%230_0_1665745415%7C1; H_PS_645EC=9813r29D6TCK%2BXRVz5TlZby%2BLvNs6AnvuSOkr76NyC4OTdjCvtetKIWOu%2FPQSLqexz77iV8tlV4L; BDRCVFR[C0p6oIjvx-c]=mk3SLVN4HKm; H_PS_PSSID=37568_36551_37551_37358_37396_36807_37405_36789_37538_37497_37508_22159_37570; BDSVRTM=955'

}

url = 'https://www.baidu.com/s?rtt=1&bsst=1&cl=2&tn=news&ie=utf-8&word=阿里巴巴'

res = requests.get(url, headers=headers)

res.encoding = 'utf-8'

# 爬取一个公司的多页

def sduxsyg(page):

if page==0:

url = 'https://www.view.sdu.edu.cn/xsyg.htm'

else:

url = 'https://www.view.sdu.edu.cn/xsyg/'+ str(177-page)+'.htm'

res = requests.get(url, headers=headers)

res.encoding = 'utf-8'

res=res.text

# 其他相关爬虫代码

p_title = '运行结果及报错内容

我想要达到的结果

想知道为啥错了,该怎么修改