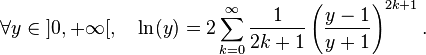

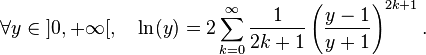

You could start from the Taylor series of the logarithm, which can be rewritten for better convergence.

To turn this nice equality into an algorithm, you have to understand how a convergent series works: its terms get smaller and smaller, and they shrink fast enough that the total sum is a finite value, ln(y).

Thanks to nice properties of the real numbers, you can consider the sequence of partial sums, which converges to ln(y):

- L(1) = 2/1 * (y-1)/(y+1)

- L(2) = 2/1 * (y-1)/(y+1) + 2/3 * ( (y-1)/(y+1) )^3

- L(3) = 2/1 * (y-1)/(y+1) + 2/3 * ( (y-1)/(y+1) )^3 + 2/5 * ( (y-1)/(y+1) )^5

... and so on.
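Written compactly, and assuming the images above show the usual series for ln(y) in powers of (y-1)/(y+1), the partial sums listed here follow this pattern:

```latex
L(n) = \sum_{k=1}^{n} \frac{2}{2k-1} \left( \frac{y-1}{y+1} \right)^{2k-1},
\qquad
\lim_{n \to \infty} L(n) = \ln(y) \quad \text{for } y > 0 .
```

Setting n = 1, 2, 3 gives back L(1), L(2) and L(3) exactly as written in the list.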

The algorithm to compute this sequence is straightforward:

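    x = (y-1)/(y+1);
    z = x * x;
    L = 0;
    /* fabs: x is negative when y < 1, so compare its magnitude */
    for (k = 1; fabs(x) > epsilon; k += 2)
    {
        L += 2 * x / k;  /* add the term 2 * x^k / k */
        x *= z;          /* move to the next odd power of the original x */
    }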

At some point, your x will become so small that it will not contribute to the interesting digits of L anymore, instead only modifying the much smaller digits. When these modifications start to be too insignificant for your purposes, you may stop.

Thus if you want to achieve a precision of 1e-20, set epsilon to be reasonably smaller than that, and you're good to go.
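To make the role of epsilon concrete, here is a minimal sketch of the loop wrapped in a C function. The name `ln_series` and its parameters are illustrative, and it assumes a finite `y > 0` and a strictly positive `epsilon`:

```c
#include <math.h>

/* Sketch: sum 2 * x^k / k over odd k, with x = (y-1)/(y+1), until the
   current power of x is no larger than epsilon in absolute value.
   Assumes y > 0 and epsilon > 0 (illustrative helper, not a library call). */
double ln_series(double y, double epsilon)
{
    double x = (y - 1.0) / (y + 1.0);
    double z = x * x;   /* each new term gains a factor x^2 */
    double L = 0.0;
    int k;

    for (k = 1; fabs(x) > epsilon; k += 2) {
        L += 2.0 * x / k;
        x *= z;
    }
    return L;
}
```

Note that a `double` only carries about 15 to 17 significant decimal digits, so with this type an epsilon much below 1e-17 buys nothing. Actually reaching 1e-20 would need an extended- or arbitrary-precision number type in place of `double`.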

Don't forget to factor the argument of the log if you can. If it's a perfect square, for example, ln(a²) = 2 ln(a).

Indeed, the series converges faster when (y-1)/(y+1) is smaller in absolute value, that is, when y is closer to 1 (for integers y ≥ 1, that simply means a smaller y).
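To get a feel for how much the distance from 1 matters, you can count the loop iterations for a given y. The helper below is a hypothetical sketch (`terms_needed` is not part of the original code) that uses the same stopping rule as the loop above:

```c
#include <math.h>

/* Count how many terms the loop adds before |x| is no larger than epsilon.
   Illustrative helper; assumes y > 0 and epsilon > 0. */
int terms_needed(double y, double epsilon)
{
    double x = (y - 1.0) / (y + 1.0);
    double z = x * x;
    int n = 0;

    while (fabs(x) > epsilon) {
        x *= z;   /* same update as in the summation loop */
        n++;
    }
    return n;
}
```

With epsilon = 1e-12, y = 1.1 needs only a handful of terms, while y = 100 needs several hundred, because (y-1)/(y+1) is then about 0.98 and its powers shrink very slowly.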