How to fix torch.OutOfMemoryError: CUDA out of memory.

【Error message】

torch.OutOfMemoryError: CUDA out of memory. Tried to allocate 1.18 GiB. GPU 0 has a total capacity of 23.70 GiB of which 263.69 MiB is free. Including non-PyTorch memory, this process has 23.44 GiB memory in use. Of the allocated memory 21.80 GiB is allocated by PyTorch, and 240.61 MiB is reserved by PyTorch but unallocated. If reserved but unallocated memory is large try setting PYTORCH_CUDA_ALLOC_CONF=expandable_segments:True to avoid fragmentation. See documentation for Memory Management (https://pytorch.org/docs/stable/notes/cuda.html#environment-variables)

【Problem description】

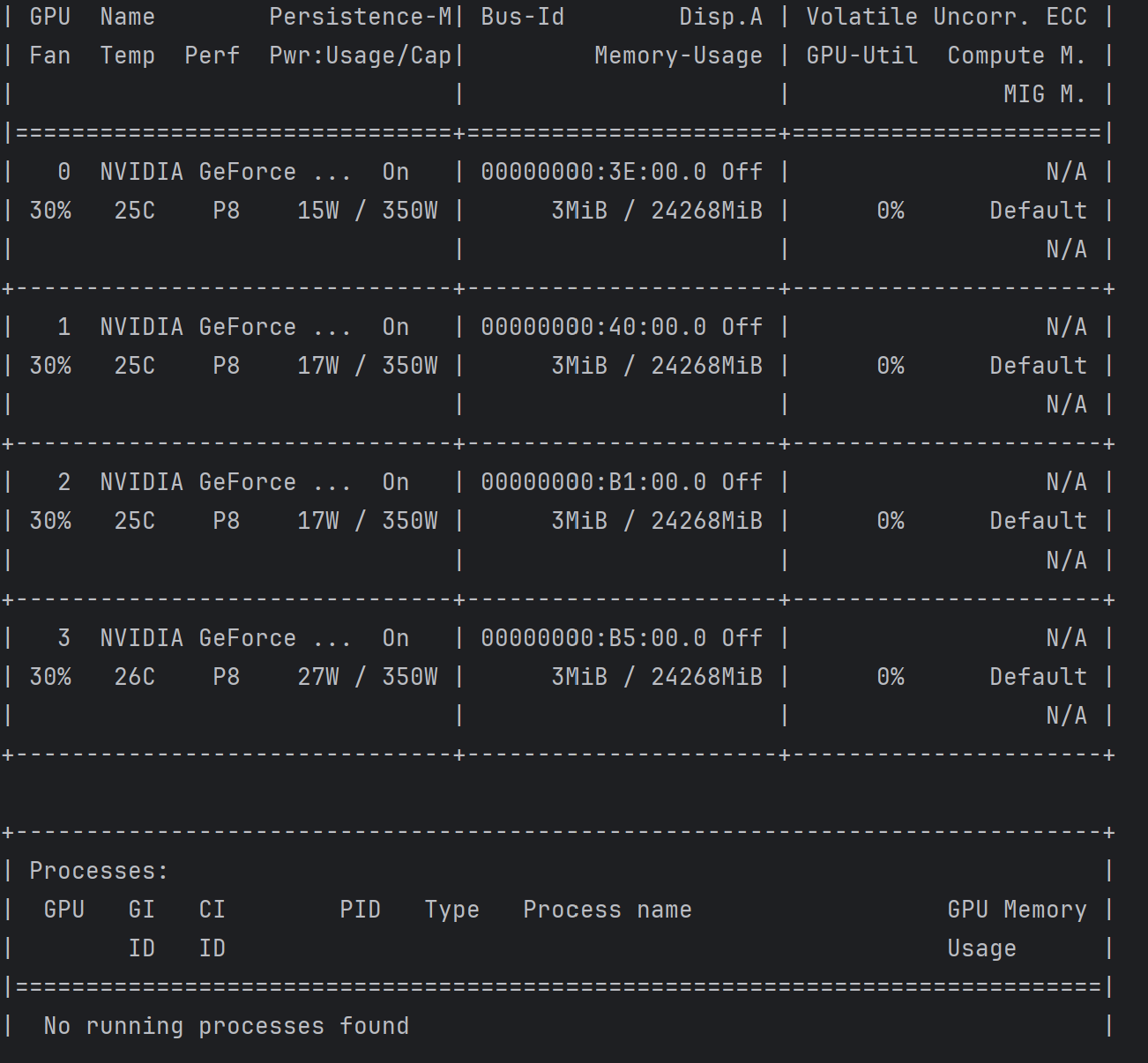

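When I run my program on the server, it fails with the error above, reporting CUDA out of memory. However, nvidia-smi shows that no programs are running on the server. Why does this happen, and how can I fix it?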
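To make the numbers in the error message easier to compare, here is a minimal sketch that pulls the figures out of the text and converts them to MiB. It uses only the Python standard library. The names `parse_oom`, `to_mib` and `ERROR_TEXT` are hypothetical helpers made up for this illustration, not part of PyTorch, and the regular expressions assume the exact wording of the message quoted above.

```python
import re

# Error text copied from the report above (only the sentences that carry numbers).
ERROR_TEXT = (
    "torch.OutOfMemoryError: CUDA out of memory. Tried to allocate 1.18 GiB. "
    "GPU 0 has a total capacity of 23.70 GiB of which 263.69 MiB is free. "
    "Including non-PyTorch memory, this process has 23.44 GiB memory in use. "
    "Of the allocated memory 21.80 GiB is allocated by PyTorch, "
    "and 240.61 MiB is reserved by PyTorch but unallocated."
)

UNIT_TO_MIB = {"MiB": 1.0, "GiB": 1024.0}

# Hypothetical patterns, written against the wording of this one message.
PATTERNS = {
    "requested": r"Tried to allocate ([\d.]+) (MiB|GiB)",
    "total": r"total capacity of ([\d.]+) (MiB|GiB)",
    "free": r"of which ([\d.]+) (MiB|GiB) is free",
    "process_in_use": r"this process has ([\d.]+) (MiB|GiB) memory in use",
    "allocated": r"([\d.]+) (MiB|GiB) is allocated by PyTorch",
    "reserved_unallocated": r"([\d.]+) (MiB|GiB) is reserved by PyTorch",
}


def to_mib(value: str, unit: str) -> float:
    """Convert a '1.18', 'GiB' pair into a float number of MiB."""
    return float(value) * UNIT_TO_MIB[unit]


def parse_oom(text: str) -> dict:
    """Return every figure found in the message, in MiB, keyed by meaning."""
    stats = {}
    for name, pattern in PATTERNS.items():
        match = re.search(pattern, text)
        if match:
            stats[name] = to_mib(match.group(1), match.group(2))
    return stats


if __name__ == "__main__":
    stats = parse_oom(ERROR_TEXT)
    for name, mib in stats.items():
        print(f"{name:>22}: {mib:10.2f} MiB")
    share = stats["process_in_use"] / stats["total"]
    print(f"requested fits in free memory: {stats['requested'] <= stats['free']}")
    print(f"share of the GPU held by this process: {share:.1%}")
```

Read this way, the message says that the failing process itself was holding almost all of the card when the 1.18 GiB request came in, while only 263.69 MiB was free. One possible reading, which is an assumption rather than a confirmed diagnosis, is that nvidia-smi looks empty simply because it was checked after the process had already crashed and released its memory.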