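# Define the dataset:

import torch

x = torch.randn((200,1))*10  # 200 samples, 1 feature, standard deviation 10

y = x*50+12  # noise-free targets: slope 50, intercept 12

y_ = y + torch.normal(10,30,size= (200,1))  # add noise (mean 10, std 30)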
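Because the noise above has mean 10 rather than 0, a line fitted to the noisy targets should land near an intercept of 22, not 12, while the slope stays close to 50. A minimal sketch of a closed-form reference fit, assuming a fixed seed and using `torch.linalg.lstsq`; the names `y_noisy`, `A`, `w_ref`, and `b_ref` are introduced here only for illustration:

```python
import torch

torch.manual_seed(0)  # assumption: fixed seed, only for reproducibility

x = torch.randn((200, 1)) * 10
y = x * 50 + 12
y_noisy = y + torch.normal(10, 30, size=(200, 1))  # same noise as y_ above

# Design matrix [x, 1]: the solution then holds the slope and the intercept.
A = torch.cat([x, torch.ones_like(x)], dim=1)
solution = torch.linalg.lstsq(A, y_noisy).solution  # shape (2, 1)

w_ref = solution[0].item()
b_ref = solution[1].item()
print(f"reference slope = {w_ref:.2f}, reference intercept = {b_ref:.2f}")
```

These reference values give something to compare the gradient-descent result against when training on the noisy targets.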

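# Define the loss function:

def MSE_loss(y_,y):
    # Mean squared error: sum of squared differences divided by the number of samples
    MSE = torch.sum(torch.square(y-y_))/y.size()[0]
    loss_ = MSE
    return loss_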
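As a quick sanity check, the hand-written loss can be compared with PyTorch's `nn.MSELoss`. The sketch below assumes random tensors of shape `(200, 1)`, where dividing by the number of rows is the same as averaging over all elements; with more than one column the two would differ by a factor equal to the number of columns. The names `pred` and `target` are illustrative only.

```python
import torch
import torch.nn as nn

def MSE_loss(y_, y):
    # Same formula as above: sum of squared errors divided by the number of rows.
    return torch.sum(torch.square(y - y_)) / y.size()[0]

torch.manual_seed(0)  # assumption: fixed seed, only for reproducibility
pred = torch.randn((200, 1))
target = torch.randn((200, 1))

custom = MSE_loss(pred, target)
builtin = nn.MSELoss()(pred, target)  # default reduction="mean"

print(custom.item(), builtin.item())
print(torch.allclose(custom, builtin))
```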

# Define the network and the iteration scheme

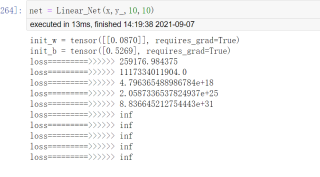

def Linear_Net(x,y,lr = 0.02,epochs = 10):
    # Import the required libraries:
    import torch
    import torch.nn as nn

    # Extract the sizes:
    input_size = x.size()[0]      # number of samples
    input_features = x.size()[1]  # number of features per sample

    # Initialize the parameters:
    w = torch.rand((1,1),requires_grad=True)
    b = torch.rand(1,requires_grad=True)
    loss_ = []
    loss_internal = nn.MSELoss()
    print("init_w =",w)
    print("init_b =",b)

    # Compute and iterate:
    for i in range(epochs):
        y_hat = torch.mm(x,w)+b
        loss = MSE_loss(y_hat,y)  # use the custom loss function defined above
        # loss = loss_internal(y_hat,y)  # use PyTorch's built-in loss function
        loss.backward()

        # Gradient-descent step: param <- param - lr * grad
        #for param in [w,b]:
        #    param.data -= lr* param.grad
        w.data = w.data-lr* w.grad
        b.data = b.data-lr* b.grad

        # Zero the gradients; otherwise they accumulate across iterations
        w.grad.data.zero_()
        b.grad.data.zero_()

        loss_.append(loss.item())
        #print("w============>>>>>>",w)
        #print("b============>>>>>>",b)
        print("loss=========>>>>>>",loss.item())
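The same loop can also be written with `torch.no_grad()` instead of assigning to `.data`. The following is a minimal self-contained sketch, not the author's exact setup: it trains on the noise-free targets, and the seed, `lr = 0.005`, and `epochs = 2000` are assumptions chosen for illustration. Since `x` has a variance of about 100, the curvature of the loss with respect to `w` is about 200, so plain gradient descent needs a learning rate below roughly 0.01 to stay stable on this data; the small curvature in `b` is why many more steps are used here.

```python
import torch

torch.manual_seed(0)  # assumption: fixed seed, only for reproducibility

x = torch.randn((200, 1)) * 10
y = x * 50 + 12  # noise-free targets: slope 50, intercept 12

w = torch.rand((1, 1), requires_grad=True)
b = torch.rand(1, requires_grad=True)

lr = 0.005     # assumption: kept below ~0.01 so the updates do not diverge
epochs = 2000  # assumption: enough steps for the bias to settle

losses = []
for _ in range(epochs):
    y_hat = torch.mm(x, w) + b
    loss = torch.mean(torch.square(y_hat - y))  # MSE over all samples
    loss.backward()

    # Update the parameters without recording the step in the autograd graph.
    with torch.no_grad():
        w -= lr * w.grad
        b -= lr * b.grad

    # Reset the gradients so they do not accumulate across iterations.
    w.grad.zero_()
    b.grad.zero_()

    losses.append(loss.item())

print("w =", w.item(), "b =", b.item(), "final loss =", losses[-1])
```

On noise-free data the learned `w` and `b` should approach 50 and 12; on the noisy targets the intercept would instead drift toward the noise mean added on top of 12.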

# Output the results:

The results are as follows: