optics图像聚类簇数过多

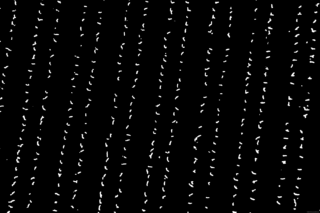

被要求对类似以下图片做基于距离的聚类

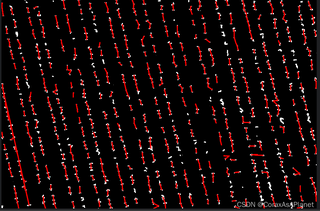

之后我使用optics在多轮调参后始终无法使每一列聚为一类,总是断断续续的

我想让他一列能连出一条线,这个该怎么修改。

以下是主要代码

import os

import math

import shutil

import cv2

import numpy as np

import ssd

from sklearn.cluster import DBSCAN, OPTICS

from tqdm import tqdm

from sklearn.cluster import AgglomerativeClustering

import scipy.spatial.distance as ssd

def point_to_line_distance(x1, y1, slope, x2, y2):

A = slope

B = -1

C = y1 - slope * x1

distance = abs(A * x2 + B * y2 + C) / math.sqrt(A ** 2 + B ** 2)

return distance

def angel_distance(angel1, angel2):

return 180 - abs(abs(angel1 - angel2) - 180)

def point_distance(x1, y1, x2, y2):

return math.sqrt((x2 - x1) ** 2 + (y2 - y1) ** 2)

def merge_close_clusters(points, labels, distance_threshold=50):

# 根据标签对点进行分组

clusters = {}

for label, point in zip(labels, points):

if label not in clusters:

clusters[label] = []

clusters[label].append(point)

# 合并距离小于阈值的簇

for label1, points1 in clusters.items():

for label2, points2 in clusters.items():

if label1 < label2: # 避免重复合并

for point1 in points1:

for point2 in points2:

if point_distance(point1[0], point1[1], point2[0], point2[1]) < distance_threshold:

# 合并簇label1到label2

for i in range(len(labels)):

if labels[i] == label1:

labels[i] = label2

break

return labels

def merge_components(comp1, comp2):

x1, y1 = comp1[0]

w1, h1, area1, (cx1, cy1) = comp1[1:]

x2, y2 = comp2[0]

w2, h2, area2, (cx2, cy2) = comp2[1:]

# 合并边界框

x = min(x1, x2)

y = min(y1, y2)

w = max(x1 + w1, x2 + w2) - x

h = max(y1 + h1, y2 + h2) - y

# 合并面积

area = area1 + area2

# 计算新的质心

cx = (cx1 * area1 + cx2 * area2) / area

cy = (cy1 * area1 + cy2 * area2) / area

return [(x, y), w, h, area, (int(cx), int(cy))]

# 文件夹路径

folder_path = r'E:\image/' # 替换为你的图像路径

output_folder = 'temp/'

# 如果输出文件夹不存在,则创建

if os.path.exists(output_folder):

shutil.rmtree(output_folder)

os.makedirs(output_folder)

# 获取文件夹中所有图像路径

images_path = os.listdir(folder_path)

images_path = [os.path.join(folder_path, image_path) for image_path in images_path]

for image_path in tqdm(images_path):

# 读取图像

image = cv2.imread(image_path)

# 读取二值化图像

binary_image = cv2.imread(image_path, cv2.IMREAD_GRAYSCALE)

# 进行连通组件标记

num_labels, labels, stats, centroids = cv2.connectedComponentsWithStats(binary_image, connectivity=8)

# 遍历所有组件(第一个组件是背景,所以从1开始)

components_list = []

for i in range(1, num_labels):

# 获取组件的统计信息

x, y, w, h, area = stats[i]

if area <= 100:

continue

cx, cy = centroids[i]

cx = int(cx)

cy = int(cy)

# 绘制中心点

# cv2.circle(image, (cx, cy), 3, (0, 0, 255), -1)

components_list.append([(x, y), w, h, area, (cx, cy)])

# 合并距离小于50像素的组件

while True:

merged = False

for i in range(len(components_list)):

for j in range(i + 1, len(components_list)):

cx1, cy1 = components_list[i][4]

cx2, cy2 = components_list[j][4]

if point_distance(cx1, cy1, cx2, cy2) < 50:

new_component = merge_components(components_list[i], components_list[j])

components_list[i] = new_component

del components_list[j]

merged = True

break

if merged:

break

if not merged:

break

# 使用 OPTICS 聚类算法

# 提取质心坐标

points = np.array([component[4] for component in components_list])

# 使用 OPTICS 聚类算法

optics = OPTICS(min_samples=0.0025,xi=0.008, max_eps=300,metric='manhattan')

labels = optics.fit_predict(points)

# 检查聚类结果

# 打印聚类结果的统计信息

unique_labels = np.unique(labels)

print("Clustered labels:", unique_labels)

for label in unique_labels:

if label == -1:

continue # 忽略噪声点

print(f"Cluster {label} has {len(points[labels == label])} points")

new_labels = merge_close_clusters(points, labels)

for label in np.unique(new_labels):

if label == -1:

continue # 忽略噪声点

cluster_points = points[new_labels == label]

if len(cluster_points) > 1:

sorted_points = sorted(cluster_points, key=lambda point: point[1])

for i in range(1, len(sorted_points)):

start_point = (int(sorted_points[i - 1][0]), int(sorted_points[i - 1][1]))

end_point = (int(sorted_points[i][0]), int(sorted_points[i][1]))

cv2.line(image, start_point, end_point, (0, 0, 255), 20)

# 保存图像

output_path = os.path.join(output_folder, os.path.basename(image_path))

cv2.imwrite(output_path, image)

else:

print("No valid clusters found with current parameters. Adjusting parameters may be needed.")