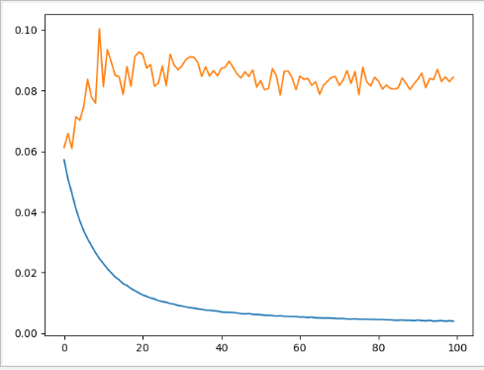

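I'm doing a time-series forecast that uses 8 features to predict 1 target ("8-to-1"), but no matter how I change the model it stays overfit: the training loss goes down while the val loss goes up. The val loss shows no downward trend at all; it climbs from the very start.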

I'm already using Dropout(0.5) and have also tried BatchNormalization(), but neither one fixes it.

I'd like to know where the problem actually lies.

The training and test (validation) sets have these shapes: x_train (80000, 10, 8), x_valid (25000, 10, 8), y_train (80000,), y_valid (25000,).

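```python
import numpy as np

# Data preparation
# values: array with at least 9 columns; columns 0-7 are the 8 input features, column 8 is the target.
# timestep and num are defined elsewhere in the original script (not shown).
# scaler: created elsewhere (not shown in the post).
train = values[0:timestep * num]
valid = values[timestep * num:]

time_stamp = 10  # window length: each sample uses the previous 10 rows

scaled_data = scaler.fit_transform(train)

x_train, y_train = [], []
for i in range(time_stamp, len(train)):
    x_train.append(scaled_data[i - time_stamp:i, 0:8])  # 10 past rows of the 8 features
    y_train.append(scaled_data[i, 8])                   # target at row i, right after the window
    # y_train.append(scaled_data[i - time_stamp, 8])
    # y_train.append(scaled_data[i - time_stamp:i, 8])
x_train, y_train = np.array(x_train), np.array(y_train)

# Reuse the scaler fitted on train; calling fit_transform(valid) again would
# rescale the validation set with its own statistics, so the two splits would not match.
scaled_data = scaler.transform(valid)

x_valid, y_valid = [], []
for i in range(time_stamp, len(valid)):
    x_valid.append(scaled_data[i - time_stamp:i, 0:8])
    y_valid.append(scaled_data[i, 8])
    # y_valid.append(scaled_data[i - time_stamp, 8])
    # y_valid.append(scaled_data[i - time_stamp:i, 8])
x_valid, y_valid = np.array(x_valid), np.array(y_valid)

print(x_train.shape, x_valid.shape, y_train.shape, y_valid.shape)
```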
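The windowing loop above can also be written without a Python loop. This is a minimal sketch, assuming `values` has 9 columns (columns 0 to 7 are the 8 inputs, column 8 is the target) and that `scaler` is a min-max scaler; the min-max arithmetic is written out in NumPy so the example needs no scikit-learn. The scaling statistics are computed on the training rows only and reused for the validation rows, so both splits share one scale. The helper names `fit_minmax`, `apply_minmax`, `make_windows` and the synthetic data are hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def fit_minmax(train):
    """Per-column min and range, computed on the training rows only."""
    col_min = train.min(axis=0)
    col_range = train.max(axis=0) - col_min
    col_range[col_range == 0] = 1.0  # avoid dividing by zero for constant columns
    return col_min, col_range


def apply_minmax(data, col_min, col_range):
    """Scale any split with the training statistics (maps train into [0, 1])."""
    return (data - col_min) / col_range


def make_windows(scaled, time_stamp=10, n_features=8, target_col=8):
    """Vectorized form of the loop: x[k] = rows k..k+time_stamp-1, y[k] = row k+time_stamp."""
    # sliding_window_view appends the window axis last: (n_rows - time_stamp + 1, n_features, time_stamp)
    windows = sliding_window_view(scaled[:, :n_features], time_stamp, axis=0)
    # Drop the last window (no target row follows it) and reorder to (samples, time_stamp, n_features)
    x = np.ascontiguousarray(windows[:-1].transpose(0, 2, 1))
    y = scaled[time_stamp:, target_col]
    return x, y


# Hypothetical synthetic data: 120 rows, 8 feature columns + 1 target column
rng = np.random.default_rng(0)
values = rng.normal(size=(120, 9))
train, valid = values[:100], values[100:]

col_min, col_range = fit_minmax(train)  # statistics from train only
x_train, y_train = make_windows(apply_minmax(train, col_min, col_range))
x_valid, y_valid = make_windows(apply_minmax(valid, col_min, col_range))
print(x_train.shape, x_valid.shape, y_train.shape, y_valid.shape)
# (90, 10, 8) (10, 10, 8) (90,) (10,)
```

Each split of n rows yields n − 10 windows, which is consistent with the shapes reported in the post (80000 and 25000 samples).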

```python
# LSTM model
from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import LSTM, Dropout, Dense

epochs = 60
batch_size = 256

model = Sequential()
# input_shape = (timesteps, features) = (10, 8); replaces the legacy input_dim/input_length arguments
model.add(LSTM(units=128, return_sequences=True,
               input_shape=(x_train.shape[1], x_train.shape[2])))
model.add(Dropout(0.5))
model.add(LSTM(units=64))
model.add(Dropout(0.5))
model.add(Dense(1))

model.compile(loss='mean_squared_error', optimizer='rmsprop')
history = model.fit(x_train, y_train, epochs=epochs, batch_size=batch_size,
                    validation_data=(x_valid, y_valid), verbose=2)
```
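One way to read the loss curves is to compare the val loss with a trivial baseline: the validation MSE obtained by always predicting the mean of `y_train`. If even the lowest val loss is not below that number, the model has not learned anything that carries over to the validation period, which suggests checking the data pipeline (split, scaling, target definition) as well as the regularization. This is a sketch, assuming the arrays from the code above and Keras's `history.history['val_loss']` list; the function names are hypothetical and the toy numbers are illustrative only.

```python
import numpy as np


def mean_baseline_mse(y_train, y_valid):
    """Validation MSE of a model that always predicts the training-set mean of y."""
    return float(np.mean((y_valid - y_train.mean()) ** 2))


def best_epoch(val_loss):
    """0-based index of the epoch with the lowest validation loss."""
    return int(np.argmin(val_loss))


# Toy numbers for illustration only.
y_tr = np.array([0.0, 1.0, 2.0, 3.0])  # training-set mean is 1.5
y_va = np.array([1.0, 2.0])
print(mean_baseline_mse(y_tr, y_va))  # 0.25

val_loss = [0.30, 0.28, 0.31, 0.35]  # stand-in for history.history['val_loss']
print(best_epoch(val_loss))  # 1

# With the real run (names from the training code above):
# baseline = mean_baseline_mse(y_train, y_valid)
# print(min(history.history['val_loss']), baseline)
```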

In the figure above, the blue curve is the training loss and the yellow curve is the val loss.