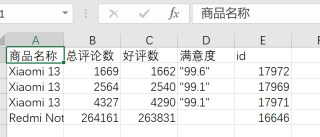

Why does this script only scrape a small part of the data, and how can I scrape all of it?

import requests
from bs4 import BeautifulSoup
from selenium import webdriver
import time
import csv

# Category page that lists the product names
url1 = 'https://www.mi.com/shop/category/list'
headers1 = {'user-agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/94.0.4606.71 Safari/537.36 Core/1.94.188.400 QQBrowser/11.4.5225.400'}
respost1 = requests.get(url1, headers=headers1).text
res = BeautifulSoup(respost1, 'html.parser')

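lst = []  # product names
for i in res.find_all('span')[16:20]:  # this slice keeps only 4 spans; [16:277] fetches the full data set
    b = i.string
    lst.append(b)

lst2 = []  # all collected links (unused below)
lst4 = []  # one result dict per product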

for i in lst:
    dic = {}
    try:
        drive = webdriver.Chrome()
        drive.maximize_window()
        drive.get('https://www.mi.com/shop/category/list')
        time.sleep(0.5)
        # Note: the find_element_by_* helpers were removed in Selenium 4.3;
        # newer versions need find_element(By.LINK_TEXT, ...) instead.
        drive.find_element_by_link_text(f"{i}").click()
        time.sleep(0.5)
        drive.find_element_by_class_name('J_nav_comment').click()
        time.sleep(0.5)
        handles = drive.window_handles
        drive.switch_to.window(handles[-1])
        cur_url = drive.current_url
        w = cur_url[-6:-11:-1]  # product id, read backwards
        w2 = w[::-1]  # reverse it back (same as cur_url[-10:-5])
        # Review summary API
        url = f'https://api2.service.order.mi.com/user_comment/get_summary?show_all_tag=1&goods_id={w2}&v_pid=17972&support_start=0&support_len=10&add_start=0&add_len=10&profile_id=0&show_img=0&callback=__jp6'
        headers = {
            'referer': 'https://www.mi.com/',
            'accept': 'application/json, text/plain, */*',
            'sec-ch-ua': '"Not?A_Brand";v="8", "Chromium";v="108", "Google Chrome";v="108"',
            'sec-ch-ua-mobile': '?0',
            'sec-ch-ua-platform': "Windows",
            'sec-fetch-dest': 'script',
            'sec-fetch-mode': 'no-cors',
            'sec-fetch-site': 'same-site',
            'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/108.0.0.0 Safari/537.36',
        }
        respost = requests.get(url, headers=headers)
        data = respost.text
        con_1 = data.split(',')  # split the response on commas
        a = con_1[37]
        b = con_1[36]
        c = con_1[43]
        d = con_1[42]
        w3 = a[17:]  # total number of reviews
        w4 = b[16:]  # number of positive reviews
        w5 = c[14:]  # satisfaction rate
        w6 = d[13:]
        dic['商品名称'] = f'{i}'  # product name
        dic['id'] = w2
        dic['总评论数'] = w3  # total reviews
        dic['好评数'] = w4  # positive reviews
        dic['满意度'] = w5  # satisfaction rate
        lst4.append(dic)
        print(f'{i}', w2, w3, w4, w5)
        drive.quit()  # close the browser
    except Exception as exc:
        # Report the failure instead of silently skipping this product
        print(f'{i} skipped: {exc!r}')
        drive.quit()

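# Save once the loop has finished
# Note: 'a+' appends, so the header row is written again on every run
with open('小米商城.csv', 'a+', encoding='gbk', newline='') as f:
    # Columns: product name, total reviews, positive reviews, satisfaction rate, id
    write = csv.DictWriter(f, fieldnames=['商品名称', '总评论数', '好评数', '满意度', 'id'])
    write.writeheader()
    write.writerows(lst4)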
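
One likely reason products go missing is the fixed indexing into `data.split(',')`: if a response has a different number of commas, `con_1[37]` and friends point at the wrong field or raise an `IndexError`, and that product is dropped. Since the URL asks for `callback=__jp6`, the response is presumably JSONP, so a sturdier approach might be to strip the wrapper and decode the JSON. The sketch below is only an illustration: `parse_jsonp` and `find_key` are hypothetical helpers, and the key names in `sample` are guesses (they happen to match the slice offsets 17, 16 and 14 used above, but I have not confirmed them against the real API).

```python
import json
import re


def parse_jsonp(text):
    """Strip a JSONP wrapper such as __jp6({...}); and return the decoded JSON."""
    match = re.search(r"^[^(]*\((.*)\)\s*;?\s*$", text.strip(), re.DOTALL)
    if match is None:
        raise ValueError("response is not in callback(...) form")
    return json.loads(match.group(1))


def find_key(node, key):
    """Depth-first search for the first non-None value stored under `key`."""
    if isinstance(node, dict):
        if node.get(key) is not None:
            return node[key]
        children = list(node.values())
    elif isinstance(node, list):
        children = node
    else:
        return None
    for child in children:
        found = find_key(child, key)
        if found is not None:
            return found
    return None


# Hypothetical payload: the real response layout and key names may differ.
sample = (
    '__jp6({"code": 200, "data": {"detail": {'
    '"comments_good": 1200, "comments_total": 1234, "satisfy_per": "97.3"}}});'
)
payload = parse_jsonp(sample)
print(find_key(payload, "comments_total"),
      find_key(payload, "comments_good"),
      find_key(payload, "satisfy_per"))
```

In the loop above, `payload = parse_jsonp(respost.text)` would replace the `split(',')` lines, and a missing key would show up as `None` instead of throwing the whole product away.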