import asyncio
import os

import aiofiles
import aiohttp
import requests
from lxml import etree

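def get_url(url):
    # Fetch the index page and collect a title plus link list for each table.
    resp = requests.get(url)
    resp.encoding = "utf-8"
    resp_text = resp.text
    result = []
    tree = etree.HTML(resp_text)
    # Every table under div.mulu is treated as one group of links.
    trss = tree.xpath('//div[@class="mulu"]/center/table')
    for tab in trss:
        chapter = {}
        trs = tab.xpath('./tr')
        # The first row holds the group title.
        title = trs[0].xpath(".//text()")
        titles = "".join(title).strip()
        hrefs_list = []
        # The remaining rows hold the links.
        for tr in trs[1:]:
            href = tr.xpath('./td/a/@href')
            hrefs_list.extend(href)
        chapter['titles'] = titles
        chapter['hrefs_list'] = hrefs_list
        result.append(chapter)
    # main() iterates over this list, so it must be returned.
    return result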

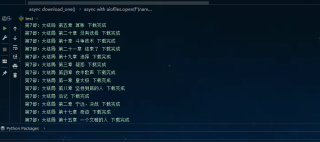

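async def download_chapter(name, hrefs):
    # Create the output directory for this group if it does not exist yet.
    os.makedirs(name, exist_ok=True)
    tasks = []
    for href in hrefs:
        # One task per URL.
        t = asyncio.create_task(download_one(name, href))
        tasks.append(t)
    # asyncio.wait() raises ValueError on an empty collection.
    if tasks:
        await asyncio.wait(tasks)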

async def download_one(name, href):
    # Download one page, extract its title and paragraphs, and save them as text.
    async with aiohttp.ClientSession() as session:
        async with session.get(href) as resp:
            page_source = await resp.text(encoding='utf-8')
    tree = etree.HTML(page_source)
    title_name = tree.xpath('/html/body/div[3]/h1/text()')[0].strip()
    content = "\n".join(tree.xpath('/html/body/div[3]/div[2]/p//text()'))
    async with aiofiles.open(f"{name}/{title_name}.txt", mode="w", encoding="utf-8") as f:
        await f.write(content)
    print(title_name, "download complete")

def main():
    url = "https://www.mingchaonaxieshier.com/"
    chapters = get_url(url)
    for chapter in chapters:
        titles = chapter['titles']
        hrefs_list = chapter['hrefs_list']
        # Each group is downloaded in its own event loop run.
        asyncio.run(download_chapter(titles, hrefs_list))

if __name__ == '__main__':
    main()
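
The script starts one task per link with no upper bound, which can open many connections at once. Below is a minimal sketch, assuming a cap on simultaneous downloads is wanted, of bounding concurrency with `asyncio.Semaphore`. The names `fake_fetch`, `bounded_gather`, `run_demo`, and the `limit` value are hypothetical and not part of the script; `fake_fetch` stands in for `download_one` so the sketch runs without network access.

```python
import asyncio


async def fake_fetch(href, state):
    # Hypothetical stand-in for download_one(); records how many calls overlap.
    state["active"] += 1
    state["peak"] = max(state["peak"], state["active"])
    await asyncio.sleep(0.01)  # simulate network latency
    state["active"] -= 1
    return href


async def bounded_gather(hrefs, limit, state):
    # Allow at most `limit` fetches to run at the same time.
    sem = asyncio.Semaphore(limit)

    async def worker(href):
        async with sem:
            return await fake_fetch(href, state)

    # gather() preserves input order and accepts an empty list.
    return await asyncio.gather(*(worker(h) for h in hrefs))


def run_demo(n=10, limit=3):
    # Run n simulated downloads and report the results and peak overlap.
    state = {"active": 0, "peak": 0}
    hrefs = [f"page-{i}" for i in range(n)]
    results = asyncio.run(bounded_gather(hrefs, limit, state))
    return results, state["peak"]
```

To apply the same idea in the script, `worker` would await `download_one(name, href)` in place of `fake_fetch`, with the semaphore created once inside `download_chapter`.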