Changed the structure; it works now.

import torch.nn as nn

num_classes = 2  # number of classes
batch_size = 128  # batch size


class CNN(nn.Module):
    def __init__(self):
        super().__init__()

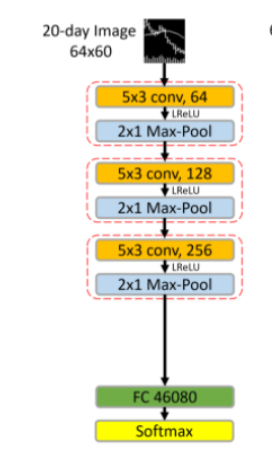

        self.layer1 = nn.Sequential(
            nn.Conv2d(1, 64, kernel_size=(5, 3), stride=(3, 1), dilation=(2, 1), padding=(12, 1)),
            nn.BatchNorm2d(64),
            nn.LeakyReLU(negative_slope=0.01, inplace=True),
            nn.MaxPool2d((2, 1), stride=(2, 1)),
        )
        self.layer2 = nn.Sequential(
            nn.Conv2d(64, 128, kernel_size=(5, 3), padding='same'),
            nn.BatchNorm2d(128),
            nn.LeakyReLU(negative_slope=0.01, inplace=True),
            nn.MaxPool2d((2, 1), stride=(2, 1)),
        )
        self.layer3 = nn.Sequential(
            nn.Conv2d(128, 256, kernel_size=(5, 3), padding='same'),
            nn.BatchNorm2d(256),
            nn.LeakyReLU(negative_slope=0.01, inplace=True),
            nn.MaxPool2d((2, 1), stride=(2, 1)),
        )
        self.fc1 = nn.Sequential(
            nn.Dropout(p=0.5),
            # 46080 = 256 channels * 180 remaining spatial positions; this depends on the input size
            nn.Linear(46080, num_classes),
        )
        self.softmax = nn.Softmax(dim=1)

        # Xavier-initialize the weights of every convolutional and linear layer
        for m in self.modules():
            if isinstance(m, (nn.Conv2d, nn.Linear)):
                nn.init.xavier_uniform_(m.weight)

    def forward(self, x):
        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        # Flatten per sample, using the actual batch dimension so a smaller final batch still works
        x = x.view(x.size(0), -1)
        x = self.fc1(x)
        x = self.softmax(x)
        return x
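
The 46080 input features of the final `nn.Linear` layer follow from the input size and the conv/pool settings above. The sketch below traces that arithmetic with the standard output-size formula for `Conv2d` and `MaxPool2d`. The helper names `conv_out` and `flattened_features` are made up for illustration, and the 480 x 9 input is only an assumption: the note does not state the real input size, and other sizes also give 46080.

```python
def conv_out(n, kernel, stride=1, padding=0, dilation=1):
    """Output length along one axis for Conv2d / MaxPool2d (floor mode)."""
    return (n + 2 * padding - dilation * (kernel - 1) - 1) // stride + 1


def flattened_features(height, width, channels=256):
    """Number of features entering fc1 for a 1-channel input of the given size."""
    # layer1 conv: kernel (5, 3), stride (3, 1), dilation (2, 1), padding (12, 1)
    h = conv_out(height, kernel=5, stride=3, padding=12, dilation=2)
    w = conv_out(width, kernel=3, stride=1, padding=1, dilation=1)
    # layer2 and layer3 convs use padding='same', so they keep the spatial size.
    # Each of the three layers ends with MaxPool2d((2, 1), stride=(2, 1)),
    # which halves the height and leaves the width unchanged.
    for _ in range(3):
        h = conv_out(h, kernel=2, stride=2)
    return channels * h * w


# Hypothetical input size (assumption, not taken from the note)
print(flattened_features(480, 9))
```

If the input size changes, the first argument of `nn.Linear` has to change with it, so a check like this may be worth running before training.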