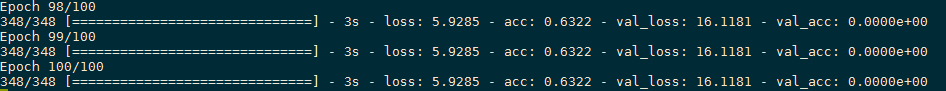

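I am fine-tuning a VGG16 network. The network weights are imported from Keras, and the weights of a small three-layer classifier on top are learned during training. The training set has about 300 samples and the validation set about 80. When I run the program, loss and acc change between the first and second epochs and then stop changing, and the validation accuracy stays close to zero. Could someone experienced with convolutional neural networks and machine learning help me figure out what is going wrong?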
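One thing that may be worth checking first: according to the Keras documentation, `validation_split` takes the validation data from the last samples of the arrays, before any shuffling. If the `.npy` files happen to be ordered by class, the held-out 20% could contain a single class. The sketch below is not a confirmed diagnosis. It uses made-up labels (250 of class 0 followed by 130 of class 1), and the helper names `tail_class_counts` and `shuffle_in_unison` are hypothetical. It expects integer labels, i.e. `Y_train` before `to_categorical`.

```python
import numpy as np

def tail_class_counts(labels, validation_split=0.2, num_classes=2):
    """Count classes in the head (training) and tail (validation) parts,
    mimicking a split that slices off the last samples."""
    labels = np.asarray(labels).astype(int).ravel()
    split_at = int(len(labels) * (1.0 - validation_split))
    train_counts = np.bincount(labels[:split_at], minlength=num_classes)
    val_counts = np.bincount(labels[split_at:], minlength=num_classes)
    return train_counts, val_counts

def shuffle_in_unison(x, y, seed=7):
    """Apply one random permutation to both samples and labels."""
    rng = np.random.RandomState(seed)
    order = rng.permutation(len(x))
    return x[order], y[order]

# Hypothetical stand-in for the label file: sorted by class (an assumption)
demo_labels = np.concatenate([np.zeros(250, dtype=int), np.ones(130, dtype=int)])
# Dummy "images": each row just stores its own label so alignment can be checked
demo_images = demo_labels.reshape(-1, 1).copy()

print("sorted data, (train, val) counts:", tail_class_counts(demo_labels))

shuffled_images, shuffled_labels = shuffle_in_unison(demo_images, demo_labels)
print("shuffled data, (train, val) counts:", tail_class_counts(shuffled_labels))
```

With the real data, the same two calls could be run on the loaded label array before the one-hot encoding, and the shuffled arrays passed to `model.fit`.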

import keras
from keras.models import Sequential
from keras.layers import Dense,Dropout,Activation,Flatten
from keras.layers import GlobalAveragePooling2D
import numpy as np
from keras.optimizers import RMSprop
from keras.utils import np_utils
import matplotlib.pyplot as plt
from keras import regularizers
from keras.applications.vgg16 import VGG16
from keras import optimizers
from keras.layers.core import Lambda
from keras import backend as K
from keras.models import Model

# LossHistory callback: records loss and acc so they can be plotted after training
class LossHistory(keras.callbacks.Callback):
    def on_train_begin(self, logs={}):  # called once, when training starts
        self.losses = {'batch': [], 'epoch': []}
        self.accuracy = {'batch': [], 'epoch': []}
        self.val_loss = {'batch': [], 'epoch': []}
        self.val_acc = {'batch': [], 'epoch': []}

    def on_batch_end(self, batch, logs={}):
        # validation metrics are not part of the batch logs, so the val_* entries here are None
        self.losses['batch'].append(logs.get('loss'))
        self.accuracy['batch'].append(logs.get('acc'))
        self.val_loss['batch'].append(logs.get('val_loss'))
        self.val_acc['batch'].append(logs.get('val_acc'))

    def on_epoch_end(self, epoch, logs={}):  # read the metrics from logs after every epoch
        self.losses['epoch'].append(logs.get('loss'))
        self.accuracy['epoch'].append(logs.get('acc'))
        self.val_loss['epoch'].append(logs.get('val_loss'))
        self.val_acc['epoch'].append(logs.get('val_acc'))

    def loss_plot(self, loss_type):
        iters = range(len(self.losses[loss_type]))  # x-axis values
        plt.figure()  # create an empty figure
        if loss_type == 'epoch':
            # top panel: accuracy
            plt.subplot(211)
            plt.plot(iters, self.accuracy[loss_type], 'r', label='train acc')  # train acc in red
            plt.plot(iters, self.val_acc[loss_type], 'b', label='val acc')  # val acc in blue
            plt.grid(True)
            plt.xlabel(loss_type)
            plt.ylabel('accuracy')
            plt.legend(loc="upper right")
            # bottom panel: loss (show() is called once, after both panels are drawn)
            plt.subplot(212)
            plt.plot(iters, self.losses[loss_type], 'r', label='train loss')  # train loss in red
            plt.plot(iters, self.val_loss[loss_type], 'b', label='val loss')  # val loss in blue
            plt.xlabel(loss_type)
            plt.ylabel('loss')
            plt.legend(loc="upper right")  # loc sets the legend position
            plt.show()
            print(np.mean(self.val_acc[loss_type]))
            print(np.std(self.val_acc[loss_type]))

seed = 7
np.random.seed(seed)

# Training hyperparameters
batch_size=32
num_classes=2
epochs=100
weight_decay=0.0005
learn_rate=0.0001

# Load the training images and labels, then one-hot encode the labels
X_train=np.load('/image_BRATS_240_240_3_normal.npy')
Y_train=np.load('/label_BRATS_240_240_3_normal.npy')
Y_train = keras.utils.to_categorical(Y_train, num_classes)

# Build the network
model_vgg16=VGG16(include_top=False,weights='imagenet',input_shape=(240,240,3),classes=2)
model_vgg16.layers.pop()
model=Sequential()
model.add(model_vgg16)
model.add(Flatten())  # the input shape is inferred from the VGG16 output
model.add(Dense(436,activation='relu'))  # fully connected layer with 436 units
model.add(Dense(2,activation='softmax'))
#model(inputs=model_vgg16.input,outputs=predictions)

# Freeze the first 13 layers of the VGG16 base
for layer in model_vgg16.layers[:13]:
    layer.trainable=False
model_vgg16.summary()

# Note: `decay` here is the optimizer's learning-rate decay, not L2 weight decay
model.compile(optimizer=RMSprop(lr=learn_rate,decay=weight_decay),
              loss='categorical_crossentropy',
              metrics=['accuracy'])
model.summary()
history=LossHistory()

model.fit(X_train,Y_train,
          batch_size=batch_size,epochs=epochs,
          verbose=1,
          shuffle=True,
          validation_split=0.2,
          callbacks=[history])

# Evaluate the model
history.loss_plot('epoch')
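A side note on the optimizer settings: as far as I understand the Keras 2 optimizers, the `decay` argument passed to `RMSprop` shrinks the learning rate over iterations and does not regularize the weights, so the variable name `weight_decay` may be misleading. The sketch below assumes the time-based formula `lr / (1 + decay * iterations)`. The function name `decayed_learning_rate` and the value of `steps_per_epoch` (roughly 304 training samples at batch size 32) are assumptions for illustration.

```python
def decayed_learning_rate(base_lr, decay, iterations):
    """Time-based learning-rate decay (assumed formula for the Keras 2
    `decay` argument): lr_t = base_lr / (1 + decay * iterations)."""
    return base_lr / (1.0 + decay * iterations)

# Values from the post; steps_per_epoch is an assumption, not a measured value
learn_rate = 0.0001
weight_decay = 0.0005
steps_per_epoch = 10

for epoch in (0, 1, 10, 100):
    iterations = epoch * steps_per_epoch
    lr = decayed_learning_rate(learn_rate, weight_decay, iterations)
    print("epoch", epoch, "learning rate", lr)
```

Under these assumptions the learning rate only falls by about a third over 100 epochs, so this setting alone seems unlikely to explain metrics that freeze after the second epoch.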

For example: