Why are the predictions from my LSTM model a flat straight line?

import pandas as pd

import tensorflow as tf

import numpy as np

import matplotlib.pyplot as plt

df=pd.read_excel('分钟数据集.xlsx',index_col='日期',engine='openpyxl')

# Min-max normalization:

data=df

data_max = data.max()

data_min = data.min()

data = (data-data_min)/(data_max-data_min)

dataset_st = np.array(data)

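def data_set(dataset, lookback):  # build the time-series samples
    dataX, dataY = [], []
    for i in range(len(dataset) - lookback):
        a = dataset[i:(i + lookback)]
        dataX.append(a[1:])  # a[1:] drops the first row, so each sample has lookback - 1 time steps
        dataY.append(dataset[i + lookback][0])  # target: column 0 of the row right after the window
    return np.array(dataX), np.array(dataY)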
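To make the sample shapes concrete, here is a minimal sketch that applies the same slicing to a toy array. `make_windows` and `toy` are hypothetical names used only for illustration; the function is a copy of the windowing logic above so the snippet runs on its own. It suggests that each sample holds `lookback - 1` time steps rather than `lookback`, because `a[1:]` slices along the time axis.

```python
import numpy as np

def make_windows(dataset, lookback):
    """Same slicing as data_set, copied here so the snippet is self-contained."""
    data_x, data_y = [], []
    for i in range(len(dataset) - lookback):
        window = dataset[i:i + lookback]
        data_x.append(window[1:])                # drops the first row (time step)
        data_y.append(dataset[i + lookback][0])  # column 0 of the next row
    return np.array(data_x), np.array(data_y)

# Hypothetical toy data: 10 time steps, 3 feature columns.
toy = np.arange(30, dtype=float).reshape(10, 3)
x, y = make_windows(toy, lookback=4)

print(x.shape)  # (samples, lookback - 1, features)
print(y.shape)  # (samples,)
```

If the intent was instead to exclude the target column from the inputs, that would be a column slice rather than a row slice; the original post does not say which was meant.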

# Split into training and test sets

train_size = int(len(dataset_st) * 0.7)

test_size = len(dataset_st) - train_size

train, test = dataset_st[0:train_size], dataset_st[train_size:len(dataset_st)]

print(len(train))

print(len(test))

# Build the time-series samples from the training and test splits

lookback = 240

trainX, trainY = data_set(train, lookback)

testX, testY = data_set(test, lookback)

# LSTM regression model

# Step 1: initialize the neural network:

model = tf.keras.Sequential([
    tf.keras.layers.LSTM(60, input_shape=(trainX.shape[1], trainX.shape[2]),
                         return_sequences=True),
    tf.keras.layers.Dropout(0.2),
    tf.keras.layers.LSTM(120),
    tf.keras.layers.Dropout(0.2),
    tf.keras.layers.Dense(30, activation='sigmoid'),
    tf.keras.layers.Dense(1)
])

# Step 2: define the optimizer and learning rate:

model.compile(
    optimizer=tf.keras.optimizers.Adam(0.0001),  # learning rate
    loss='mean_squared_error',  # loss function: mean squared error
    metrics=["mse"]
)

# Step 3: train the model:

history = model.fit(trainX, trainY,
                    batch_size=40, epochs=5,
                    validation_data=(testX, testY),
                    validation_freq=1)

# Step 4: define a plotting function:

def plot_learning_curves(history):
    pd.DataFrame(history.history).plot(figsize=(10, 6))
    plt.grid(True)
    plt.title('Training history')
    plt.savefig('./训练情况.jpg')
    plt.show()

# Model validation:

test_preds=model.predict(testX,verbose=1)

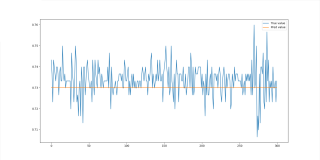

plt.figure(figsize=(16,8))

plt.plot(testY[:300], label="True value")

plt.plot(test_preds[:300], label="Pred value")

plt.legend(loc='best')

plt.show()

Output:

Epoch 1/5

3073/3073 - 503s 163ms/step - loss: 0.0175 - mse: 0.0175 - val_loss: 7.0646e-05 - val_mse: 7.0646e-05

Epoch 2/5

3073/3073 - 493s 161ms/step - loss: 1.6900e-04 - mse: 1.6900e-04 - val_loss: 5.8696e-05 - val_mse: 5.8696e-05

Epoch 3/5

3073/3073 - 540s 176ms/step - loss: 1.2051e-04 - mse: 1.2051e-04 - val_loss: 5.8187e-05 - val_mse: 5.8187e-05

Epoch 4/5

3073/3073 - 479s 156ms/step - loss: 1.0361e-04 - mse: 1.0361e-04 - val_loss: 5.7863e-05 - val_mse: 5.7863e-05

Epoch 5/5

3073/3073 - 512s 167ms/step - loss: 9.4500e-05 - mse: 9.4500e-05 - val_loss: 6.6830e-05 - val_mse: 6.6830e-05

1642/1642 - 69s 42ms/step
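A val_loss of around 6e-05 is hard to judge without a reference point. As a rough check, and assuming `testY` is a 1-D array in the same normalized units, one could compare it with the MSE of two naive forecasts: always predicting the mean, and repeating the previous value. The sketch below uses hypothetical names (`baseline_mse`, `y_demo`) and made-up numbers, not values from the dataset above.

```python
import numpy as np

def baseline_mse(y):
    """Return the MSE of two naive forecasts for a 1-D target series."""
    y = np.asarray(y, dtype=float)
    # Baseline 1: predict the series mean at every step (a flat line).
    mean_mse = float(np.mean((y - y.mean()) ** 2))
    # Baseline 2: predict each value as the previous one (persistence).
    persist_mse = float(np.mean((y[1:] - y[:-1]) ** 2))
    return mean_mse, persist_mse

# Hypothetical stand-in for testY; replace with the real array.
y_demo = np.array([0.50, 0.52, 0.51, 0.53, 0.52])
mean_mse, persist_mse = baseline_mse(y_demo)
print(f"constant-mean MSE: {mean_mse:.3e}")
print(f"persistence MSE:   {persist_mse:.3e}")
```

If the constant-mean MSE on the real `testY` turns out to be close to the reported val_loss, that would be consistent with the network outputting something near the mean, which plots as a straight line. It would not by itself prove that this is the cause.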